In this NetApp training tutorial, I will cover NetApp NFS storage. Scroll down for the video and the text tutorial.

NetApp NFS Video Tutorial


Protocol Licensing

Although they typically come as a bundle, each NAS and SAN protocol is licensed separately. You will not be able to enable NFS when creating a Storage Virtual Machine (SVM) if the NFS license is not installed. If the checkbox to enable NFS is not visible in the System Manager GUI, it is because you have not licensed it yet.

NetApp NAS Implementation

NetApp NFS and CIFS are configured on a per-SVM basis, independently of each other. If you are using both protocols, they can be enabled on the same SVM or you can have a separate SVM for each. You can also have two different NFS or CIFS SVMs with different settings, since each SVM operates independently and appears as a separate storage system to your clients.

NFS Versions

NFS was invented by Sun Microsystems and is the standard NAS protocol for UNIX and Linux clients. Though it was originally designed for UNIX, it can be accessed by other operating systems as well. It is commonly used for VMware ESXi datastores.

Here are the different versions of NFS:

- NFSv1 – this was never released and was only used for internal testing at Sun.

- NFSv2 – standardized in 1989. A stateless protocol that supports UDP and 32-bit files only, with a maximum file size of 2GB. NetApp does not support this version.

- NFSv3 – standardized in 1995. A stateless protocol with performance enhancements over version 2. It supports TCP and 64-bit files with a larger maximum file size of 16TB. It supports local user ID, group ID and Kerberos to authenticate users. NFSv3 is enabled by default on NetApp ONTAP systems when NFS is used.

- NFSv4 – standardized in 2003. A stateful protocol with security enhancements over version 3. It also has a maximum file size of 16TB. It supports Windows-style ACLs and end-to-end security, and is disabled by default on NetApp ONTAP.

- NFSv4.1 – an extended version of NFSv4. Adds sessions, directory delegations, and parallel NFS (pNFS) to provide scalability and performance improvements on clustered storage systems. It has no dependencies on NFSv4. Like v4, v4.1 is disabled by default on NetApp ONTAP.

NFS Referrals

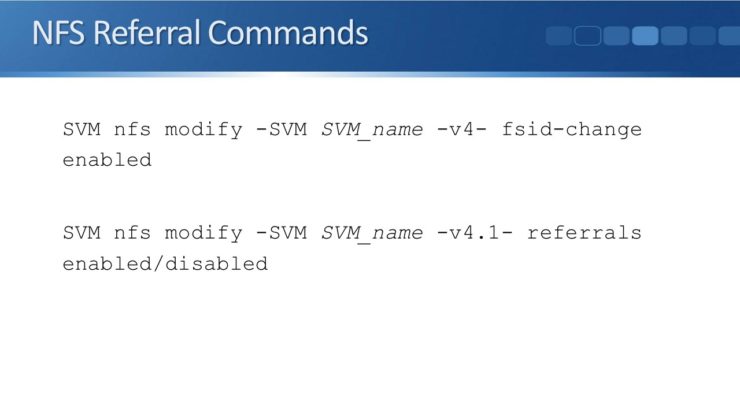

Referrals were released in NFSv4 and are also supported in v4.1. They provide an enhancement for clustered storage systems. When they are enabled in NetApp ONTAP, if a node receives an NFS request for data on a volume on another node, it will refer the client to use a Logical Interface (LIF) on the node which hosts the volume. This prevents unnecessary traffic going over the cluster interconnect.

Support for NFS referrals is not uniformly available on all NFSv4 clients. Do not enable it on an SVM unless all your clients support it.
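The referral decision described above can be sketched in a few lines of Python. This is a simplified model for illustration only, not ONTAP code: the `Lif` class, the `handle_request` function, and the LIF names and addresses are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lif:
    """Hypothetical model of a Logical Interface and the node it lives on."""
    name: str
    node: str
    address: str


def handle_request(receiving_lif, volume, volume_home, lifs, referrals_enabled):
    """Return the LIF the client should use to reach the volume."""
    hosting_node = volume_home[volume]
    if receiving_lif.node == hosting_node or not referrals_enabled:
        # Either the request already arrived on the hosting node, or referrals
        # are off and remote data is fetched over the cluster interconnect.
        return receiving_lif
    for lif in lifs:
        if lif.node == hosting_node:
            return lif  # refer the client to a LIF on the hosting node
    return receiving_lif  # no LIF on the hosting node, keep the current path


lifs = [Lif("lif1", "node1", "10.0.0.1"), Lif("lif2", "node2", "10.0.0.2")]
volume_home = {"vol_data": "node2"}

referred = handle_request(lifs[0], "vol_data", volume_home, lifs, True)
print(referred.name)  # lif2
```

With referrals disabled, the same call returns `lif1`, and the data would travel over the cluster interconnect instead.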

NetApp NFS Referral Commands

NFS v4.1 pNFS (Parallel NFS)

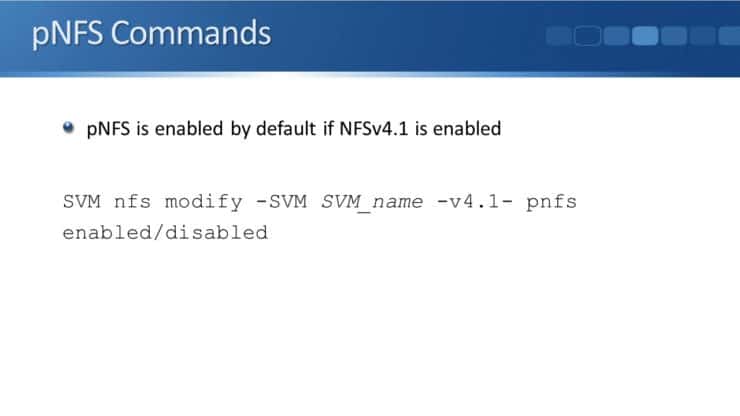

Parallel NFS (pNFS) became available with NFSv4.1. Similar to referrals, pNFS can also direct a client to use a LIF on the local node that hosts the volume. If an administrator moves the volume to another node, pNFS will redirect the client with no need to remount. This is an advantage over NFS referrals, where if the volume moves the client is not updated until it unmounts and remounts the file system.

Let's say we would like to move a volume to higher or lower performance disks, or to rebalance capacity more evenly across our aggregates. With NFS referrals, when the client first connects it is referred to a local LIF on the node where the volume is. But if the volume then moves to a different node, the client is not updated. pNFS tells a client which node to use when it initially connects, and also updates the client if the volume moves after that.
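The difference in behaviour after a volume move can be modelled roughly as follows. This is a toy simulation under simplifying assumptions: all class and method names are hypothetical, and the pNFS update is modelled as a simple location refresh on each I/O rather than the real protocol exchange.

```python
class Cluster:
    """Tracks which node currently hosts each volume."""

    def __init__(self, volume_home):
        self.volume_home = dict(volume_home)

    def move_volume(self, volume, node):
        self.volume_home[volume] = node


class ReferralClient:
    """Learns the hosting node once, at mount time."""

    def __init__(self, cluster, volume):
        self.cluster = cluster
        self.volume = volume
        self.node = None

    def mount(self):
        self.node = self.cluster.volume_home[self.volume]

    def io_is_direct(self):
        # True when the client talks to the node that hosts the volume.
        return self.node == self.cluster.volume_home[self.volume]


class PnfsClient(ReferralClient):
    """Is updated with the new location when the volume moves."""

    def io_is_direct(self):
        self.node = self.cluster.volume_home[self.volume]  # modelled update
        return super().io_is_direct()


cluster = Cluster({"vol1": "node1"})
referral_client = ReferralClient(cluster, "vol1")
pnfs_client = PnfsClient(cluster, "vol1")
referral_client.mount()
pnfs_client.mount()

cluster.move_volume("vol1", "node2")
print(referral_client.io_is_direct())  # False
print(pnfs_client.io_is_direct())      # True

referral_client.mount()                # unmount and remount
print(referral_client.io_is_direct())  # True
```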

NetApp NFS - pNFS Commands

NFS referrals and pNFS are mutually exclusive: you can only enable one or the other on an SVM. pNFS is enabled by default if NFSv4.1 is enabled. To enable or disable it:

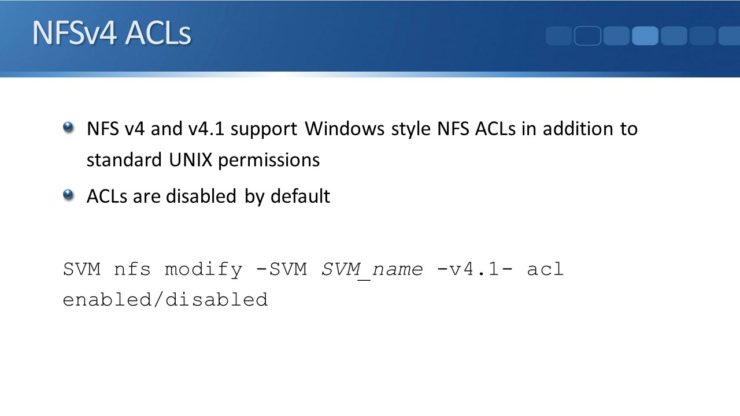
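As a planning aid, the constraints stated above can be written as a small check. This is a hypothetical Python helper for reasoning about a desired configuration, not an ONTAP API; the function and parameter names are assumptions made for this sketch.

```python
def validate_nfs_options(v41_enabled, pnfs_enabled, referrals_enabled):
    """Return a list of problems with a planned SVM NFS configuration."""
    errors = []
    if pnfs_enabled and referrals_enabled:
        errors.append("pNFS and NFS referrals are mutually exclusive on an SVM")
    if pnfs_enabled and not v41_enabled:
        errors.append("pNFS requires NFSv4.1 to be enabled")
    return errors


print(validate_nfs_options(True, True, False))  # []
print(validate_nfs_options(True, True, True))
```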

NFSv4 ACLs

In addition to the standard UNIX permissions, NFSv4 and v4.1 support Windows-style NFS ACLs. This gives more flexibility for configuring permissions for users and groups. ACLs are disabled by default. To turn them on:

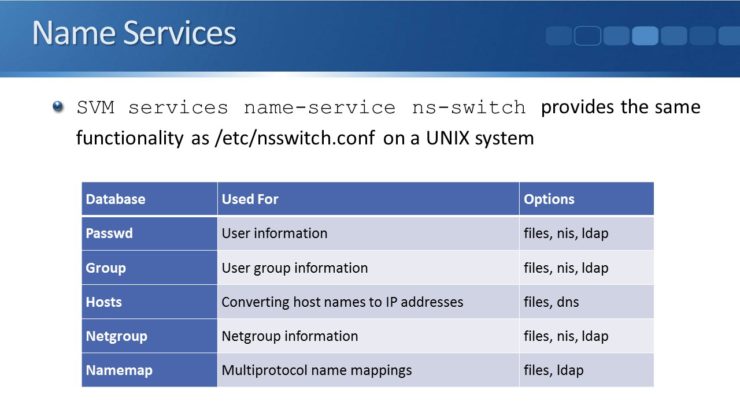

Name Services

End host clients must be properly authenticated before they can access data on the NetApp system. When an NFS client connects, the UNIX credentials for the user can be checked against different name services. The credentials can be checked against local user accounts (type ‘files’), an NIS domain, and/or an LDAP domain (Kerberos is supported). At least one name service must be configured to successfully authenticate the user. Each SVM acts as a separate storage system and can use different settings.

The ONTAP system cannot act as an NIS, LDAP, or Kerberos server itself. For those services you need to configure an external server.

You can specify multiple name services and the order in which ONTAP searches them. For example, you could check LDAP first and then, if LDAP does not return a result or is not available, fall back to local files.
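The ordered search with fallback can be illustrated with a short Python sketch. It is a conceptual model only: the `lookup_user` function, the backend callables, and the sample user are hypothetical, and the unreachable LDAP server is simulated by raising `ConnectionError`.

```python
def lookup_user(username, sources, backends):
    """Try each name service in order; return (source, uid) for the first hit."""
    for source in sources:
        try:
            uid = backends[source](username)
        except ConnectionError:
            continue  # service unavailable, fall through to the next source
        if uid is not None:
            return source, uid
    return None  # no configured name service knows this user


def ldap_lookup(username):
    # Simulates an LDAP server that cannot be reached.
    raise ConnectionError("LDAP server unreachable")


local_users = {"alice": 1001}
backends = {"ldap": ldap_lookup, "files": local_users.get}

print(lookup_user("alice", ["ldap", "files"], backends))  # ('files', 1001)
```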

The command to configure name services is:

vserver services name-service ns-switch

It provides the same functionality as the /etc/nsswitch.conf file on a UNIX system. The following databases can be configured:

- Password – for user information. You can use files (local accounts), NIS, or LDAP.

- Group – user group information. You can use files, NIS, or LDAP.

- Host – converts hostnames to IP addresses. You can use files or DNS.

- Netgroup – for netgroup information. You can use files, NIS, or LDAP.

- Namemap – for multiprotocol name-mappings. You can use files or LDAP.
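The list above maps naturally onto a lookup table. The following Python sketch checks a planned source order against it; the dictionary keys are short lowercase labels chosen for this example, and `invalid_sources` is a hypothetical helper rather than anything provided by ONTAP.

```python
# Sources permitted for each database, taken from the list above.
ALLOWED_SOURCES = {
    "passwd": {"files", "nis", "ldap"},
    "group": {"files", "nis", "ldap"},
    "hosts": {"files", "dns"},
    "netgroup": {"files", "nis", "ldap"},
    "namemap": {"files", "ldap"},
}


def invalid_sources(database, sources):
    """Return the sources that are not permitted for the given database."""
    allowed = ALLOWED_SOURCES[database]
    return [source for source in sources if source not in allowed]


print(invalid_sources("passwd", ["ldap", "files"]))  # []
print(invalid_sources("hosts", ["dns", "nis"]))      # ['nis']
```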

If you use local UNIX accounts (type ‘files’), the SVM trusts the end host to authenticate the user. It accepts the UID that the client sends and trusts that the client has authenticated that user. If the client matches an export policy rule on the SVM and sends a UID that is permitted on the SVM, it can access the directories and files. This is a security risk, because it is easy for somebody on a client to send whatever UID they want. Rather than using local UNIX accounts, it is preferable to use LDAP with Kerberos authentication.
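To see why trusting the client-supplied UID is risky, consider this minimal Python model of the check described above. The `authorize` function, the IP addresses, and the UIDs are hypothetical and only illustrate the logic; a real export policy has more fields.

```python
def authorize(client_ip, claimed_uid, export_clients, permitted_uids):
    """Grant access if the client matches the export rule and the UID is permitted.

    The UID is taken at face value: nothing here proves who sent it.
    """
    if client_ip not in export_clients:
        return False
    return claimed_uid in permitted_uids


export_clients = {"192.168.1.10"}
permitted_uids = {1001}

# The legitimate user with UID 1001 is granted access.
print(authorize("192.168.1.10", 1001, export_clients, permitted_uids))  # True

# Anyone else on that host who claims UID 1001 makes an identical request,
# so the check cannot tell them apart. A non-permitted UID is refused:
print(authorize("192.168.1.10", 2002, export_clients, permitted_uids))  # False
```

Kerberos closes this gap because the user's identity is verified by a trusted third party rather than asserted by the client.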

Additional Resources

NetApp Setting up file access using NFS

How ONTAP supports file access using NFS
