In this NetApp training tutorial, I will cover NetApp Storage Pools. Scroll down for the video and also the text tutorial.

NetApp Storage Pools (Flash Pool SSD) Video Tutorial

NetApp Storage Pools for Flash Pool SSD

This is a feature that came out in ONTAP version 8.3 and makes more efficient use of the capacity of your SSDs in Flash Pool aggregates. Flash Pool utilises a cache of SSDs in front of your higher-capacity spinning SAS or SATA drives to provide a cost-efficient performance boost for your recently accessed data.

Advanced Disk Partitioning (ADP) also became available in ONTAP 8.3, and it's a fairly similar feature. Whereas ADP is used to get more efficient capacity use from the disks that hold system information, storage pools are used to get more flexible use of the SSD capacity in Flash Pool aggregates.

Let’s take a look at the traditional way to configure NetApp Flash Pool before storage pools were available. Say that we have three different Flash Pool aggregates: Aggregate_1 and Aggregate_2 on node one, and Aggregate_3 on node two. In that case, I would need separate physical SSDs dedicated to each of those aggregates: SSDs owned by node one for Aggregate_1, separate SSDs owned by node one for Aggregate_2, and another set of SSDs owned by node two for Aggregate_3.

The difference with SSD storage pools is that the disks are partitioned, so rather than a physical disk being tied to an individual Flash Pool aggregate, we can allocate slices from the same physical disks to different aggregates.

When an SSD storage pool is created, the disks are partitioned into four allocation units, which can be used in any Flash Pool aggregate on the node that owns the disks or on its High Availability (HA) peer.

Note that they can’t be used on any node in the cluster, only on the two nodes of that HA pair. If you had an eight-node cluster and you wanted to use storage pools for Flash Pool aggregates on every node, you would need four storage pools, one per HA pair.
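The eight-node arithmetic follows directly from the HA pair rule: a storage pool only serves the two nodes of one HA pair, so the minimum is one pool per HA pair that needs Flash Pool cache. Here is a minimal Python sketch of that calculation. The function name is hypothetical, and it assumes the cluster is built entirely from two-node HA pairs.

```python
def storage_pools_needed(node_count: int) -> int:
    """Minimum SSD storage pools so every node can have Flash Pool cache.

    Assumption for this sketch: the cluster consists only of two-node HA pairs,
    and a storage pool can only serve the two nodes of a single HA pair.
    """
    if node_count <= 0 or node_count % 2:
        raise ValueError("this sketch assumes the cluster is built from whole HA pairs")
    return node_count // 2  # one pool per HA pair


for nodes in (2, 4, 8):
    print(f"{nodes}-node cluster -> {storage_pools_needed(nodes)} storage pool(s)")
```

You can of course create more than one pool per HA pair; the function only returns the lower bound.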

The allocation units can be assigned as separate SSD RAID groups within a single Flash Pool aggregate, or they can be split across different Flash Pool aggregates.
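To make the partitioning concrete, here is a minimal Python sketch of how a pool's capacity maps onto allocation units. It is a simplified model, not an ONTAP API: it assumes every SSD is sliced into four equal partitions and that allocation unit N is built from partition N of every SSD, so each unit holds a quarter of the pool's capacity. The SSD names and the 800 GB size are hypothetical, and the 2 to 28 SSD limit is the one quoted further down in this tutorial.

```python
from dataclasses import dataclass

ALLOCATION_UNITS = 4        # a storage pool is divided into four allocation units
MIN_SSDS, MAX_SSDS = 2, 28  # pool size limits quoted in this tutorial


@dataclass(frozen=True)
class Partition:
    ssd: str        # physical SSD the slice lives on (hypothetical name)
    unit: int       # allocation unit (1-4) the slice belongs to
    size_gb: float  # capacity of this slice


def build_storage_pool(ssds: dict[str, float]) -> dict[int, list[Partition]]:
    """Slice every SSD into four equal partitions and group them by allocation unit.

    `ssds` maps a hypothetical disk name to its usable capacity in GB.
    Simplifying assumption: allocation unit N takes partition N from every SSD.
    """
    if not MIN_SSDS <= len(ssds) <= MAX_SSDS:
        raise ValueError(f"a storage pool needs {MIN_SSDS}-{MAX_SSDS} SSDs, got {len(ssds)}")
    units: dict[int, list[Partition]] = {u: [] for u in range(1, ALLOCATION_UNITS + 1)}
    for name, capacity in ssds.items():
        for u in units:
            units[u].append(Partition(name, u, capacity / ALLOCATION_UNITS))
    return units


# Five hypothetical 800 GB SSDs, matching the five-disk example in this tutorial
pool = build_storage_pool({f"ssd{i}": 800.0 for i in range(1, 6)})
for unit, parts in pool.items():
    print(unit, len(parts), sum(p.size_gb for p in parts))
```

In this model each allocation unit spans all five SSDs, so handing a unit to an aggregate gives it a quarter of every disk rather than a set of whole disks.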

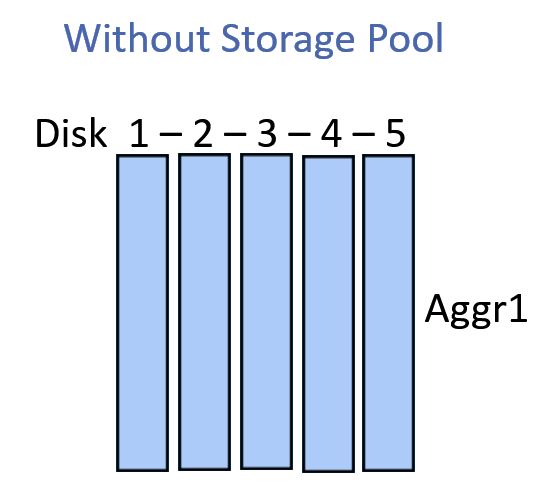

Flash Pool without Storage Pool

The image below shows the traditional way to configure Flash Pool without using a NetApp Storage Pool.

We've got five physical SSDs which we could use for the Flash Pool cache for Aggregate_1. If we wanted to have Flash Pool cache for Aggregate_2 and Aggregate_3 we would have to add separate physical SSDs.

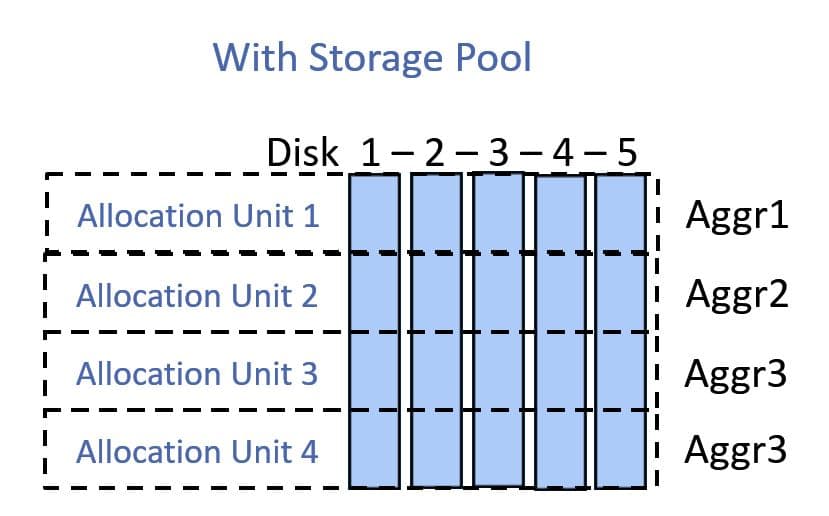

Flash Pool with NetApp Storage Pool

The diagram below shows the storage pool configuration.

We take those same five physical SSDs, but now we split them into four allocation units. We can use allocation unit one for Aggregate_1 and allocation unit two for Aggregate_2. Allocation units three and four can be configured as two separate RAID groups, both in Aggregate_3.
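The layout above can be checked with a short Python sketch. It is a hypothetical model rather than anything ONTAP exposes: the node names, the `rg_au` RAID group labels and the dictionaries are illustrative, each allocation unit is treated as its own RAID group (as in the Aggregate_3 example), and the sketch enforces that a unit can only go into an aggregate on the node that currently owns that unit.

```python
from collections import defaultdict

# Hypothetical layout: aggregate -> owning node
AGGREGATE_OWNER = {"Aggregate_1": "node1", "Aggregate_2": "node1", "Aggregate_3": "node2"}

# Default ownership in a two-node HA pair: units 1-2 on the first node, 3-4 on the second
UNIT_OWNER = {1: "node1", 2: "node1", 3: "node2", 4: "node2"}

# Allocation unit -> aggregate it is added to, as in the diagram
ASSIGNMENTS = {1: "Aggregate_1", 2: "Aggregate_2", 3: "Aggregate_3", 4: "Aggregate_3"}


def raid_groups_per_aggregate(
    assignments: dict[int, str],
    unit_owner: dict[int, str],
    aggregate_owner: dict[str, str],
) -> dict[str, list[str]]:
    """Group allocation units by aggregate, one RAID group per unit in this model.

    Raises ValueError if a unit is added to an aggregate on a node that does not own it.
    """
    layout: defaultdict[str, list[str]] = defaultdict(list)
    for unit, aggregate in sorted(assignments.items()):
        if unit_owner[unit] != aggregate_owner[aggregate]:
            raise ValueError(
                f"allocation unit {unit} is owned by {unit_owner[unit]}, "
                f"but {aggregate} lives on {aggregate_owner[aggregate]}"
            )
        layout[aggregate].append(f"rg_au{unit}")
    return dict(layout)


print(raid_groups_per_aggregate(ASSIGNMENTS, UNIT_OWNER, AGGREGATE_OWNER))
```

Note that this assignment works with the default ownership, because units one and two already belong to node one and units three and four to node two.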

A storage pool can contain from 2 to 28 SSDs. By default, two allocation units are assigned to each node in the HA pair: the first node gets allocation units one and two, and the second node gets allocation units three and four.

Allocation units can be reassigned between the two controllers of the same HA pair. If you wanted, you could have all four allocation units assigned to one node.
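Reassignment can be modelled the same way. This minimal Python sketch is illustrative only: `HA_PARTNER`, `reassign_units` and the node names are hypothetical, and the only rule it encodes is the one stated above, that a unit may move from its current owner to that owner's HA partner.

```python
# Hypothetical two-node HA pair
HA_PARTNER = {"node1": "node2", "node2": "node1"}


def reassign_units(unit_owner: dict[int, str], units: list[int], to_node: str) -> dict[int, str]:
    """Return a new ownership map with `units` moved to `to_node`.

    A unit may only stay where it is or move to its current owner's HA partner.
    The input map is left unchanged.
    """
    updated = dict(unit_owner)
    for unit in units:
        current = updated[unit]
        if to_node not in (current, HA_PARTNER[current]):
            raise ValueError(f"{to_node} is not in the same HA pair as {current}")
        updated[unit] = to_node
    return updated


default_owner = {1: "node1", 2: "node1", 3: "node2", 4: "node2"}
all_on_node1 = reassign_units(default_owner, [3, 4], "node1")
print(all_on_node1)
```

Moving units three and four to node one gives the "all four allocation units on one node" case described above.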

The main benefit we get from storage pools is more efficient use of our SSD capacity, which means we can buy fewer disks. However, it leads to a larger failure domain, so you need to weigh up that trade-off. With the traditional setup, a failed SSD only impacts one aggregate, but if it’s a member of a storage pool, it can affect several.
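A short Python sketch makes the trade-off visible by counting SSDs and the aggregates touched when one SSD fails. The numbers are hypothetical: the traditional layout assumes five dedicated SSDs per aggregate, while the pooled layout reuses the same five SSDs for all three aggregates, as in the diagrams.

```python
def aggregates_hit_by_ssd_failure(ssd_to_aggregates: dict[str, set[str]], failed_ssd: str) -> set[str]:
    """Aggregates with a Flash Pool RAID group that loses a member when `failed_ssd` fails."""
    return ssd_to_aggregates.get(failed_ssd, set())


AGGREGATES = ["Aggregate_1", "Aggregate_2", "Aggregate_3"]

# Traditional layout (assumed 5 dedicated SSDs per aggregate): each SSD serves one aggregate
traditional: dict[str, set[str]] = {}
for n, aggr in enumerate(AGGREGATES):
    for i in range(5):
        traditional[f"ssd{n * 5 + i + 1}"] = {aggr}

# Storage pool layout: the same five SSDs carry partitions for every aggregate
pooled = {f"ssd{i}": set(AGGREGATES) for i in range(1, 6)}

print(len(traditional), sorted(aggregates_hit_by_ssd_failure(traditional, "ssd1")))
print(len(pooled), sorted(aggregates_hit_by_ssd_failure(pooled, "ssd1")))
```

The pooled layout needs a third of the SSDs in this example, but a single SSD failure now degrades a RAID group in every aggregate that uses the pool.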

Additional Resources

The article 'How Flash Pool SSD partitioning increases cache allocation flexibility for Flash Pool aggregates' includes links to configuration examples.

Click Here to get my 'NetApp ONTAP 9 Storage Complete' training course.