This is part of my ‘Practical Introduction to Cloud Computing’ course. Click here to enrol in the complete course for free!

In this cloud training tutorial, we’ll continue with the essential characteristics of cloud as defined by NIST. Scroll down for the video and the text tutorial.

Cloud Resource Pooling Video Tutorial

Cloud Resource Pooling

This is the NIST definition of cloud resource pooling: ‘The provider’s computing resources are pooled to serve multiple consumers using a multi-tenant model, with different physical and virtual resources dynamically assigned and reassigned according to consumer demand. There is a sense of location independence in that the customer generally has no control or knowledge over the exact location of the provided resources but may be able to specify location at a higher level of abstraction (e.g., country, state, or datacenter). Examples of resources include storage, processing, memory, and network bandwidth.’

Processor and Memory Pooling

Let’s have a look at this in some more detail. The first thing to talk about is that we can pool the CPU and memory resources of the underlying physical servers that we’re running virtual machines on.

Let’s go back to our hypervisor lab demo for this. I’m back here in the management GUI of my VMware lab, and this is similar to the kind of software that cloud providers use to manage their hosts and virtual machines. Maybe they’re also using VMware or maybe they’re using some other vendor’s hypervisor like Citrix XenServer. I’m using VMware for the example here, and you can see I’ve got two hosts in my lab, 10.2.1.11 and 10.2.1.12.

The physical host 10.2.1.11 has got two processor sockets (two physical CPUs) with two cores per CPU, and 2 GB of RAM. A real-world cloud provider would be using much more powerful hosts than I have in my lab demonstration here.

The CPU and memory resources on the host can be divided up amongst the virtual machines running on it. I can see I’ve got three virtual machines on here, ‘OpenFiler1’, ‘Nostalgia2’, and ‘XP1’. Again these are just low-powered virtual machines for my lab demonstration.

If I click on OpenFiler1, I can see that it is running with four virtual CPUs and a little over 300 MB of memory. It has 100 GB of storage space. That’s provisioned on my data store, which is located on my external SAN storage.

Nostalgia2 is a fairly small virtual machine that can be used to run old DOS games. It’s got one virtual CPU and 32 MB of memory.

The virtual machines share access to the CPU and memory on the underlying physical host. It’s the job of the hypervisor to make sure that the virtual machines get their fair share of those resources.
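To make the idea of a ‘fair share’ a bit more concrete, here is a minimal Python sketch of a proportional-share split. It is only an illustration, not how VMware or any other hypervisor actually schedules: the function name `fair_cpu_share`, the share values and the 8000 MHz host capacity are all made up for the example, and a real scheduler only needs to enforce shares when the VMs are competing for the CPU.

```python
def fair_cpu_share(host_mhz, vm_shares):
    """Split the host's CPU capacity between VMs in proportion to their shares.

    This models the worst case where every VM wants as much CPU as it can get.
    """
    total_shares = sum(vm_shares.values())
    if total_shares == 0:
        return {name: 0.0 for name in vm_shares}
    return {
        name: host_mhz * shares / total_shares
        for name, shares in vm_shares.items()
    }


# Hypothetical share values for VMs named after the ones in my lab.
shares = {"OpenFiler1": 2000, "Nostalgia2": 1000, "XP1": 1000}

# Assumed host capacity of 8000 MHz, chosen only to keep the numbers simple.
allocation = fair_cpu_share(8000, shares)

for name, mhz in allocation.items():
    print(f"{name}: {mhz:.0f} MHz")
```

In this sketch a VM with twice the shares gets twice the CPU time under contention, and the allocations always add up to the capacity of the host.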

With cloud computing we’ve got the concept of tenants. A tenant is a different customer, so customer A would be one tenant, customer B would be a different tenant. When we have multiple customers using the same underlying infrastructure, it’s a multi-tenant system.

We’re very often going to have different virtual machines for different customers running on the same physical server. The cloud provider will make sure that they don’t put too many virtual machines on any single server so they can all get good levels of performance. The virtual machines are kept completely separate and secure from each other.
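The placement idea can be sketched in a few lines of Python. This is a simplified first-fit example under my own assumptions, not the algorithm any particular provider uses: the `Host` class, the `place_vm` function and the tenant names are hypothetical, memory is the only resource checked, and I’ve assumed both lab hosts have 2 GB of RAM.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    memory_mb: int
    vms: list = field(default_factory=list)  # (tenant, vm_name, memory_mb)

    def free_memory_mb(self):
        return self.memory_mb - sum(mem for _, _, mem in self.vms)


def place_vm(hosts, tenant, vm_name, memory_mb):
    """First-fit placement: use the first host with enough free memory.

    Returns the host name, or None if no host can take the VM.
    """
    for host in hosts:
        if host.free_memory_mb() >= memory_mb:
            host.vms.append((tenant, vm_name, memory_mb))
            return host.name
    return None


# Assumption: both hosts have 2 GB (2048 MB) of RAM.
hosts = [Host("10.2.1.11", 2048), Host("10.2.1.12", 2048)]

first = place_vm(hosts, "customer-a", "web-1", 1536)
second = place_vm(hosts, "customer-b", "web-1", 1024)
third = place_vm(hosts, "customer-b", "db-1", 512)
fourth = place_vm(hosts, "customer-a", "big-1", 4096)

# Tenants that ended up sharing the first physical host.
tenants_on_first_host = {tenant for tenant, _, _ in hosts[0].vms}
print(first, second, third, fourth, tenants_on_first_host)
```

Here two different customers end up on the same physical host, and the oversized request is refused rather than overloading a server.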

Storage Pooling

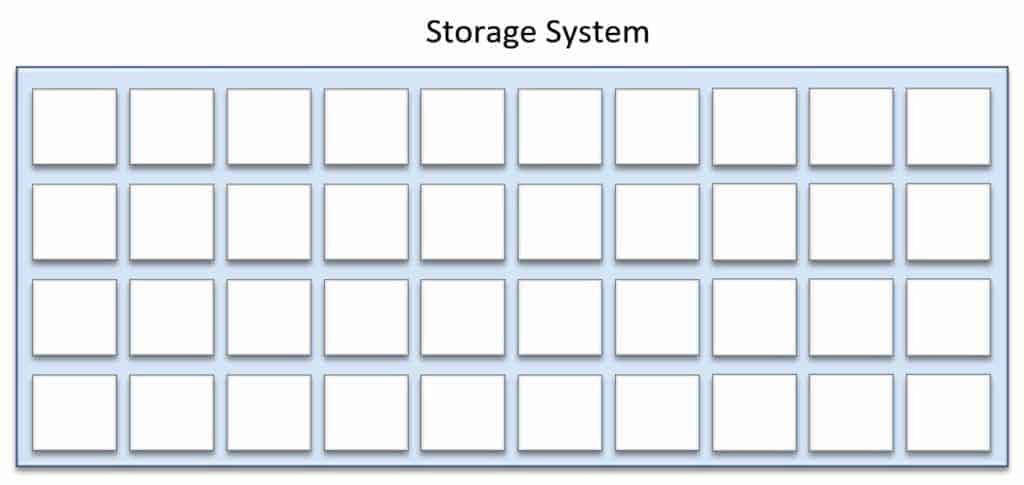

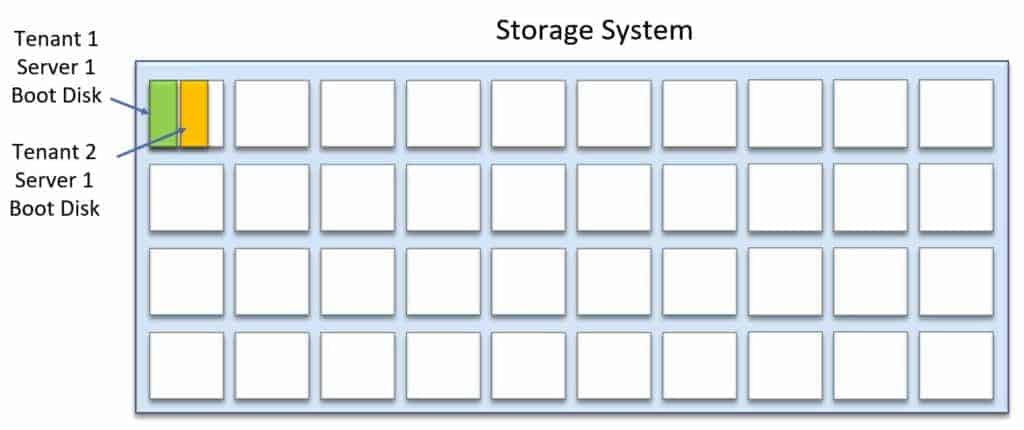

The next resource we can pool is storage. In the diagram below the big blue box represents a storage system with many hard drives. Each of the smaller white squares represents a hard drive.

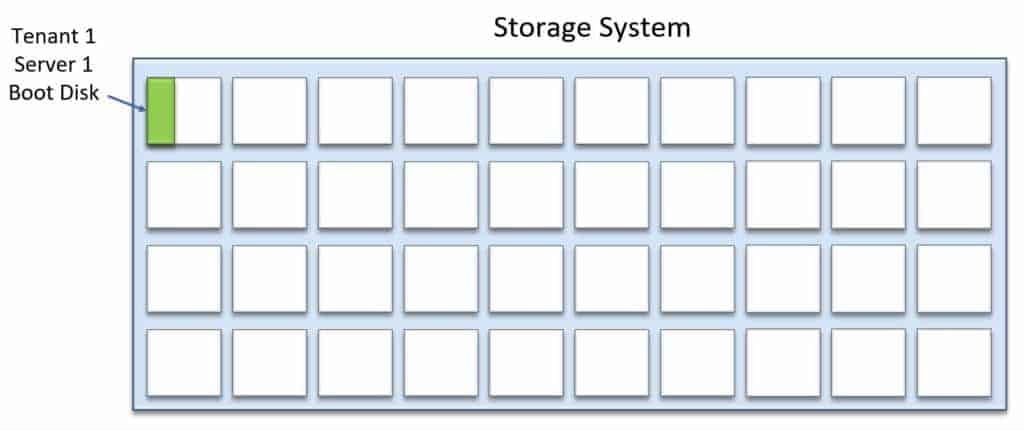

With my centralised storage I can slice up my storage however I want to, and give the virtual machines their own small part of that storage for however much space they require. In the example below, I take a slice of the first disk and allocate that as the boot disk for ‘Tenant 1, Server 1’.

I take another slice of my storage and provision that as the boot disk for ‘Tenant 2, Server 1’.

Shared centralised storage makes storage allocation really efficient – rather than having to give whole disks to different servers I can just give them exactly how much storage they require. Further savings can be made through storage efficiency techniques such as thin provisioning, deduplication and compression.
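Here is a small Python sketch of slicing a shared pool with thin provisioning, where physical space is only consumed as data is actually written. The `StoragePool` class, the 1000 GB pool size and the amounts written are assumptions for illustration; a real storage system works at the block level and is far more involved.

```python
class StoragePool:
    """A shared pool that hands out thin-provisioned volumes to tenants."""

    def __init__(self, capacity_gb):
        self.capacity_gb = capacity_gb
        self.volumes = {}  # (tenant, volume name) -> sizes in GB

    def create_volume(self, tenant, name, size_gb):
        # Thin provisioning: record the promised size, consume nothing yet.
        self.volumes[(tenant, name)] = {"provisioned_gb": size_gb, "used_gb": 0}

    def write(self, tenant, name, gb):
        volume = self.volumes[(tenant, name)]
        if volume["used_gb"] + gb > volume["provisioned_gb"]:
            raise ValueError("volume is full")
        if self.used_gb() + gb > self.capacity_gb:
            raise ValueError("pool is out of physical space")
        volume["used_gb"] += gb

    def used_gb(self):
        return sum(v["used_gb"] for v in self.volumes.values())

    def provisioned_gb(self):
        return sum(v["provisioned_gb"] for v in self.volumes.values())


pool = StoragePool(capacity_gb=1000)  # assumed pool size
pool.create_volume("Tenant 1", "Server 1 boot", 100)
pool.create_volume("Tenant 2", "Server 1 boot", 100)

pool.write("Tenant 1", "Server 1 boot", 20)
pool.write("Tenant 2", "Server 1 boot", 35)

print(pool.provisioned_gb(), pool.used_gb())
```

The two tenants have been promised 200 GB between them, but only the 55 GB they have actually written is taken out of the shared pool.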

Check out my Introduction to SAN and NAS Storage course to find out more about centralised storage.

Network Infrastructure Pooling

Moving on, the next resource that can be pooled is the network infrastructure.

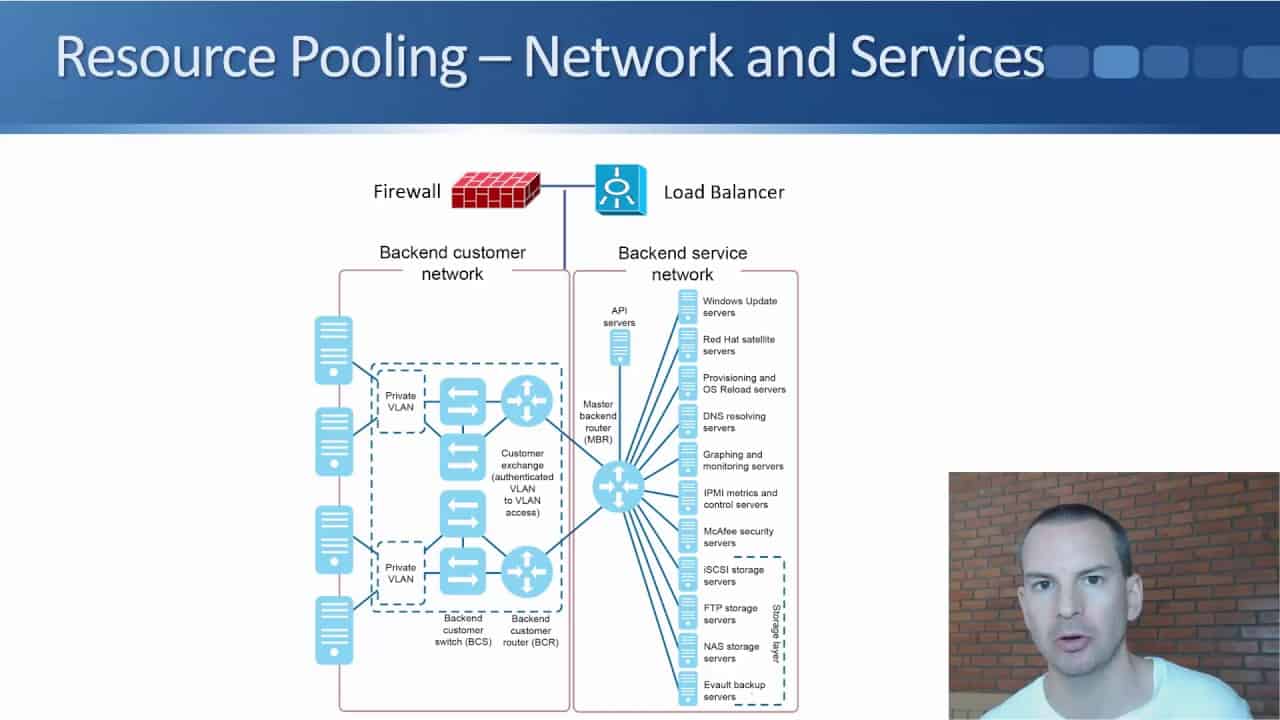

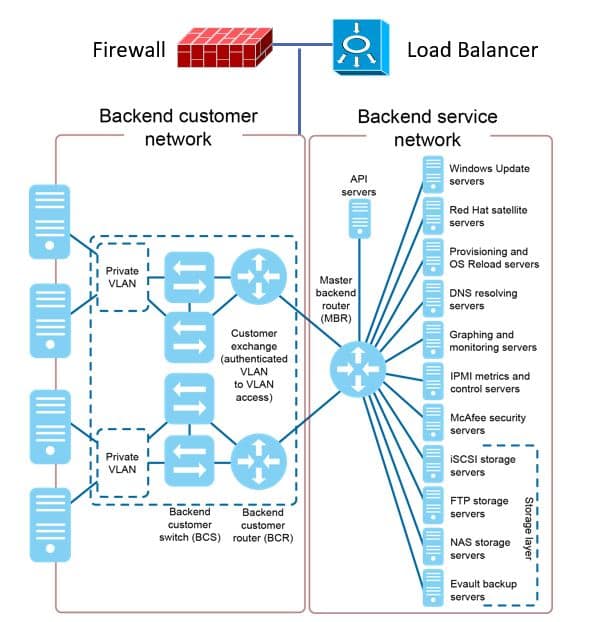

There is a physical firewall at the top of the diagram below.

All of the different tenants are going to have firewall rules controlling what traffic is allowed in to their virtual machines, such as RDP for management and HTTP traffic on port 80 if it’s a web server.

We don’t need to give every single customer their own physical firewall; we can share the same physical firewall between different customers. Load balancers for incoming connections can also be virtualized and shared between multiple customers.
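One way to picture a shared firewall is as a single device holding a separate rule set for each tenant. The Python sketch below is a toy model under that assumption: the `SharedFirewall` class and the tenant names are hypothetical, and it is not the configuration model of any real firewall product. RDP uses TCP port 3389 by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    protocol: str
    port: int


class SharedFirewall:
    """One firewall, with an independent allow-list per tenant."""

    def __init__(self):
        self.rules = {}  # tenant -> set of allowed Rule objects

    def allow(self, tenant, protocol, port):
        self.rules.setdefault(tenant, set()).add(Rule(protocol, port))

    def is_allowed(self, tenant, protocol, port):
        # Default deny: anything without a matching rule is blocked.
        return Rule(protocol, port) in self.rules.get(tenant, set())


firewall = SharedFirewall()

# Customer A runs a web server and manages it over RDP.
firewall.allow("customer-a", "tcp", 80)
firewall.allow("customer-a", "tcp", 3389)

# Customer B only allows RDP for management.
firewall.allow("customer-b", "tcp", 3389)

print(firewall.is_allowed("customer-a", "tcp", 80))  # True
print(firewall.is_allowed("customer-b", "tcp", 80))  # False
```

A rule added for one customer has no effect on traffic for another, even though both sets of rules live on the same device.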

In the main section on the left of the diagram you can see there are multiple switches and routers. Those switches and routers are shared, with traffic for different customers going through the same devices.

Service Pooling

The cloud provider is also providing various services to the customers, as shown on the right-hand side of the diagram. There are Windows Update and Red Hat update servers for operating system patching, DNS and so on. Having DNS as a centralised service saves the customers from having to provide their own DNS solution.

Location Independence

As stated by NIST, the customer generally has no knowledge or control over the exact location of the provided resources but they may be able to specify location at a higher level of abstraction, such as at the country, state, or data centre level.

Let’s use AWS for the example again. When I spun up a virtual machine I did it in the Singapore data centre because I’m based in the South East Asia region. By having it close to me I’m going to get the lowest network latency and the best performance.

With AWS I know the data centre that my virtual machine is in, but not the actual physical server it’s running on. It could be anywhere in that particular data centre. It could be using any of the individual storage systems in the data centre, and any of the individual firewalls. Those specifics don’t matter to the customer.
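The split between what the customer chooses and what the provider chooses can be sketched like this in Python. It is purely illustrative and is not the AWS API: the `DATA_CENTRES` table, the host names and the `launch_vm` function are invented for the example, and a real provider would pick the host based on capacity rather than at random.

```python
import random

# Hypothetical inventory: the customer only ever sees the keys.
DATA_CENTRES = {
    "singapore": ["host-sg-01", "host-sg-02", "host-sg-03"],
    "sydney": ["host-syd-01", "host-syd-02"],
}


def launch_vm(location, rng=random):
    """The customer picks the location; the provider picks the physical host."""
    if location not in DATA_CENTRES:
        raise ValueError(f"unknown location: {location}")
    host = rng.choice(DATA_CENTRES[location])  # provider's decision
    return {"location": location, "host": host}


vm = launch_vm("singapore")

# The customer is told the location, but does not need to know the host.
print(vm["location"])
```

The customer controls the location at the data centre level, while the exact server inside it stays the provider’s decision.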

Resource Pooling Summary

Using shared equipment rather than dedicating separate hardware to each customer means that the cloud provider needs less equipment in their data centres. They get economies of scale, better efficiency and cost savings which can be passed on to the customer. This makes cloud a more viable solution from the financial point of view.

Additional Resources

Resource Pooling from Techopedia.