In this NetApp tutorial, you’ll learn about Storage Capability Profiles (SCP) in the NetApp Virtual Storage Console (VSC). What Storage Capability Profiles allow you to do is specify the characteristics of the storage. Scroll down for the video and also the text tutorials.

For example, you can specify whether the storage uses thick or thin provisioning, whether deduplication and compression are enabled, and the QoS performance characteristics of that storage.

NetApp VSC Storage Capability Profiles Video Tutorial

Once you’ve got your profile created, you can then use it. When you provision a datastore using VSC, you can specify the profile that you want to use, and the storage will be configured with those characteristics. After you’ve done that, when you provision a virtual machine, you can specify a profile there as well, and the virtual machine will be deployed with that profile for its storage.

For example, maybe you’ve got one virtual machine that you want to have high performance and another virtual machine that only requires low performance. It makes it very easy to provision your virtual machines with the correct storage characteristics by using these profiles.

When you use them, you have everything set up ahead of time, and then when you provision your virtual machines, you just select a profile from a dropdown. That gives you consistency and standardization, so you’re much less likely to run into issues and have to troubleshoot later than if you were configuring this on a case-by-case, virtual-machine-by-virtual-machine basis.

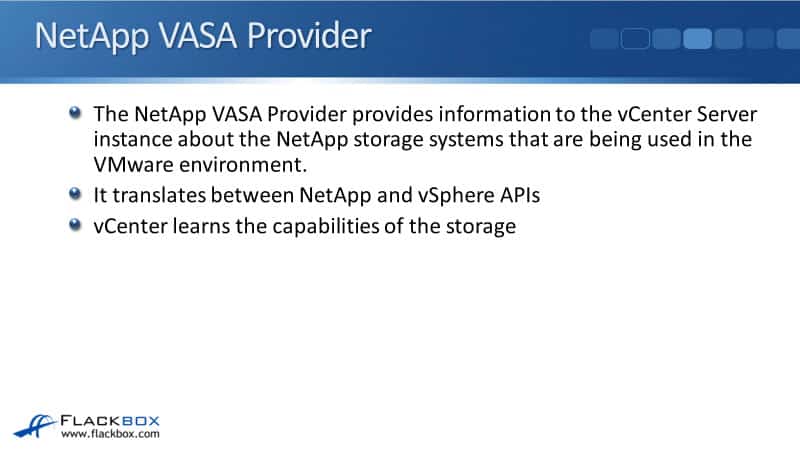

NetApp VASA Provider

The first thing is a reminder that the NetApp VASA provider provides information to the vCenter server instance about the NetApp storage systems that are being used in the VMware environment. The VASA provider translates between NetApp and vSphere APIs, and also, vCenter learns the capabilities of the storage via the VASA provider.

When you’re configuring your Storage Capability Profiles, VSC, which is on the vCenter server, needs to know the capabilities of the storage. It gets that information from the VASA provider. So for your SCPs to be available to you, you need to make sure that the VASA provider is enabled, which it is by default in the latest versions of VSC.

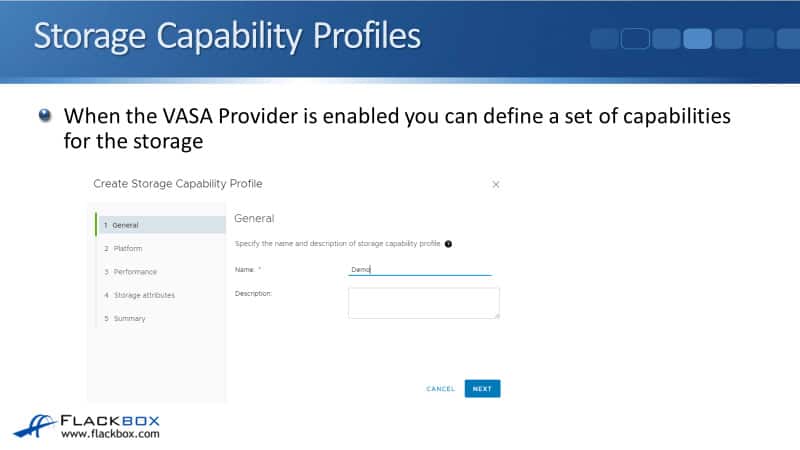

Storage Capability Profiles

When the VASA provider is enabled, you can define a set of capabilities for the storage. To get to this page, you go to the VSC plugin in your vSphere client, where there’s a page for Storage Capability Profiles. You can see in the screenshot that I’m creating a new Storage Capability Profile. I’ve just given it a name, and then I would click ‘Next’.

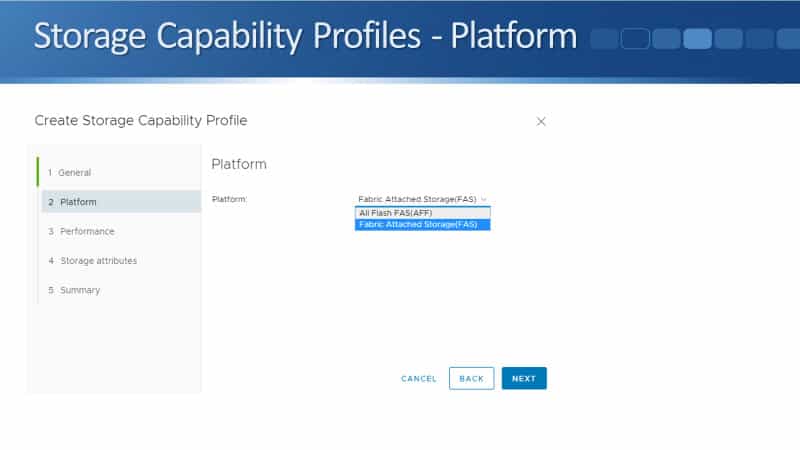

Storage Capability Profiles - Platform

On the next page, you would specify the platform. As we go through the different pages, you’re specifying the characteristics or the capabilities of the storage. On the platform page, specify whether this is AFF or a FAS system.

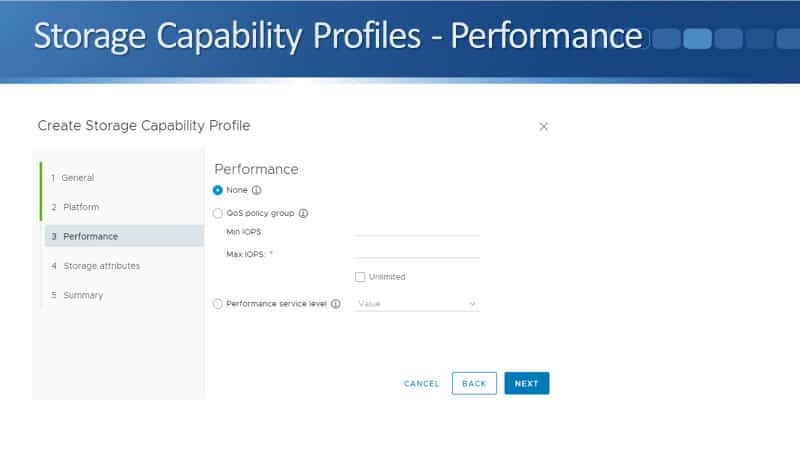

Storage Capability Profiles – Performance

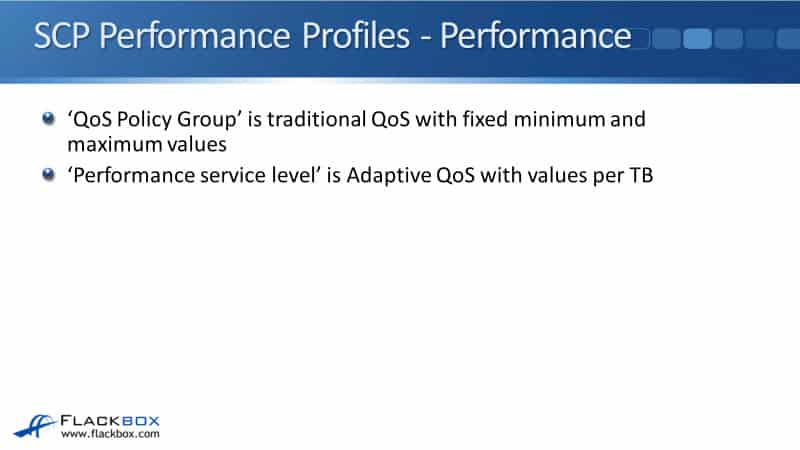

The next page is performance. The options we’ve got here are None, QoS policy group, and performance service level. The QoS policy group is traditional QoS with fixed minimum and maximum values. Performance service level is adaptive QoS with values per terabyte (TB).

Again, QoS policy group, that is the traditional QoS with fixed minimum and maximum value. Performance service level is adaptive QoS.

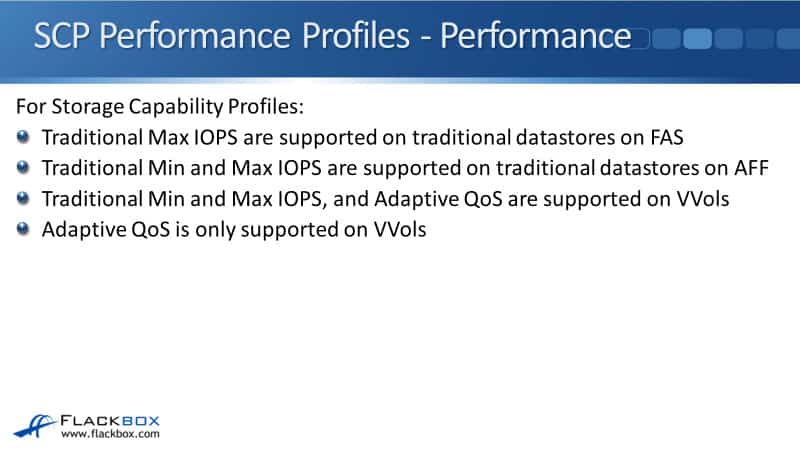

For your Storage Capability Profiles, only traditional Max IOPS is supported on traditional datastores on FAS. Min IOPS is not supported on FAS systems. Traditional Min and Max IOPS are both supported on traditional datastores on AFF.

Traditional Min and Max IOPS and adaptive QoS are supported on VVols. Adaptive QoS is only supported on VVols. It’s not supported on traditional datastores. Those are the caveats and the limitations there.
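The caveats above can be written down as a small lookup. This is only a sketch of the rules as stated in this tutorial, not anything VSC exposes: the function name `supported_qos` and the string constants are made up for illustration, and the VVol rule is applied to both platforms because the text does not qualify it by platform.

```python
from __future__ import annotations

# Illustrative labels (not VSC identifiers)
TRADITIONAL_MIN = "traditional Min IOPS"
TRADITIONAL_MAX = "traditional Max IOPS"
ADAPTIVE = "adaptive QoS"


def supported_qos(platform: str, datastore_type: str) -> set[str]:
    """Return the QoS options this tutorial lists for a platform and datastore type."""
    platform = platform.upper()
    datastore_type = datastore_type.lower()
    if platform not in {"AFF", "FAS"}:
        raise ValueError(f"unknown platform: {platform}")

    if datastore_type == "vvol":
        # The text lists Min/Max IOPS and adaptive QoS for VVols
        # without distinguishing AFF from FAS.
        return {TRADITIONAL_MIN, TRADITIONAL_MAX, ADAPTIVE}

    if datastore_type == "traditional":
        if platform == "AFF":
            return {TRADITIONAL_MIN, TRADITIONAL_MAX}
        return {TRADITIONAL_MAX}  # FAS: Max IOPS only

    raise ValueError(f"unknown datastore type: {datastore_type}")


print(sorted(supported_qos("FAS", "traditional")))
print(sorted(supported_qos("AFF", "vvol")))
```

Adaptive QoS never appears for a traditional datastore in this lookup, which matches the limitation described above.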

When applied on a traditional datastore, Min and Max IOPS applies at the volume level. It’s going to be applied to all the virtual machines that are in that particular volume. When applied for a VVol datastore, Min and Max IOPS applies to each data VVol, which is basically the VMDK files of a virtual disk for the virtual machine.

Basically, what VVols do is give you more granular management of the storage characteristics that are going to be applied to your virtual machines. Rather than everything being applied at the volume or the LUN level, if you’re using a SAN protocol, you can actually get granular and have different settings at the virtual machine and the virtual machine’s virtual disk level.

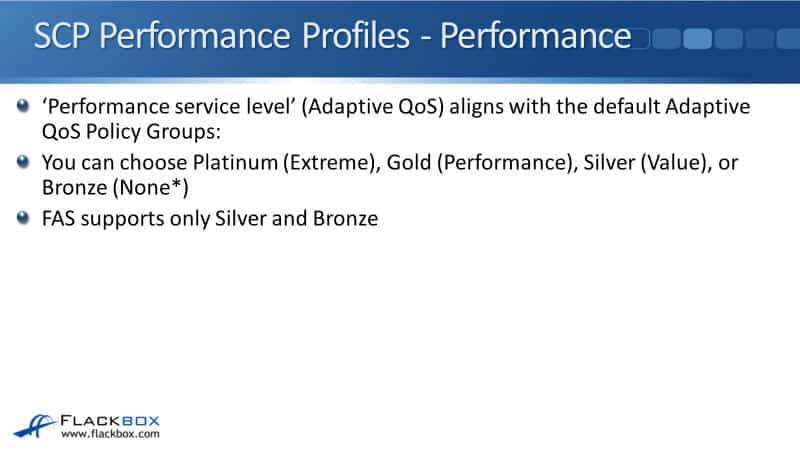

Suppose we choose performance service level, which configures adaptive QoS. The options align with the default adaptive QoS policy groups. You can choose the Platinum SCP, which uses the extreme adaptive QoS level, or the Gold SCP, which uses the performance level. Silver uses the value level, and you can also select an SCP of Bronze, where the performance setting is None.

FAS systems only support Silver and Bronze. AFF supports all four levels. To recap the QoS levels: Platinum gets extreme adaptive QoS, Gold gets performance, Silver gets value, and Bronze, with its performance setting of None, also ends up with value.

Default Adaptive QoS Policy Groups

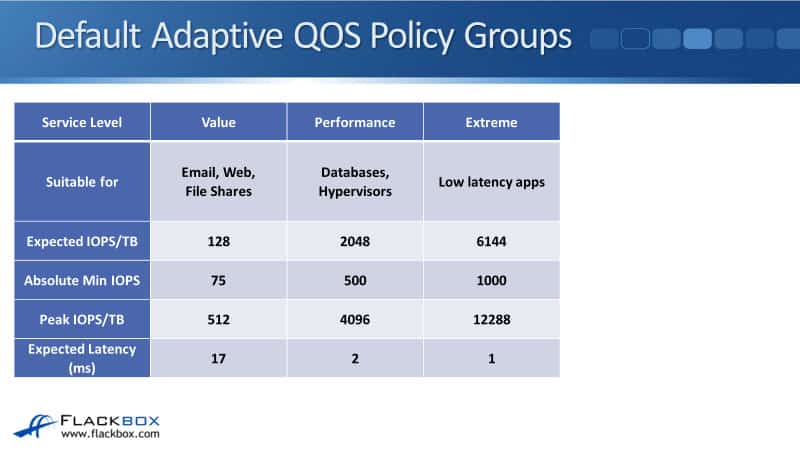

You can see the different characteristics of the different adaptive QoS policy groups here. With extreme, that gives expected IOPS per terabyte of 6144, performance is 2048, and value is 128. Extreme is suitable for low latency applications. Performance is suitable for databases and hypervisors. Value is suitable for email, web file shares, etc.

You can also see the expected latency, a ballpark figure for what to expect if you apply value, performance, or extreme. Traditional QoS sets absolute minimum and maximum values for the volume, and they apply no matter what the capacity of the volume is.

With adaptive QoS, it changes based on the size of the volume. These figures are per terabyte based on the capacity of the volume. If you’re using adaptive QoS with VVols, then all VVols should have an adaptive QoS policy applied. This is because balanced placement is used for provisioning.
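To make the difference concrete, here is a minimal sketch of the arithmetic. It uses the expected IOPS per terabyte figures quoted above (6144, 2048, and 128). The function names and the 5000 IOPS traditional limit are made up for the example.

```python
# Expected IOPS per TB for the default adaptive QoS policy groups,
# as quoted in this tutorial.
EXPECTED_IOPS_PER_TB = {"extreme": 6144, "performance": 2048, "value": 128}


def adaptive_expected_iops(level: str, size_tb: float) -> float:
    """Adaptive QoS: the expected IOPS scale with the size of the volume."""
    return EXPECTED_IOPS_PER_TB[level] * size_tb


def traditional_max_iops(max_iops: int, size_tb: float) -> int:
    """Traditional QoS: the limit is fixed, so the volume size is ignored."""
    return max_iops


for size_tb in (1, 2, 10):
    print(
        f"{size_tb} TB volume:",
        "adaptive (performance) =", adaptive_expected_iops("performance", size_tb),
        "| traditional =", traditional_max_iops(5000, size_tb),
    )
```

The adaptive figure grows from 2048 to 4096 to 20480 as the volume grows, while the traditional limit stays at whatever fixed value you set.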

When you’ve got a VVols datastore, it can be made up of multiple different volumes. When you provision a virtual machine into that VVols datastore, balanced placement is going to decide which volume in that datastore to place the VVols for this virtual machine. And balanced placement can make a better decision about which volume to use when it has full information about all existing VVols.

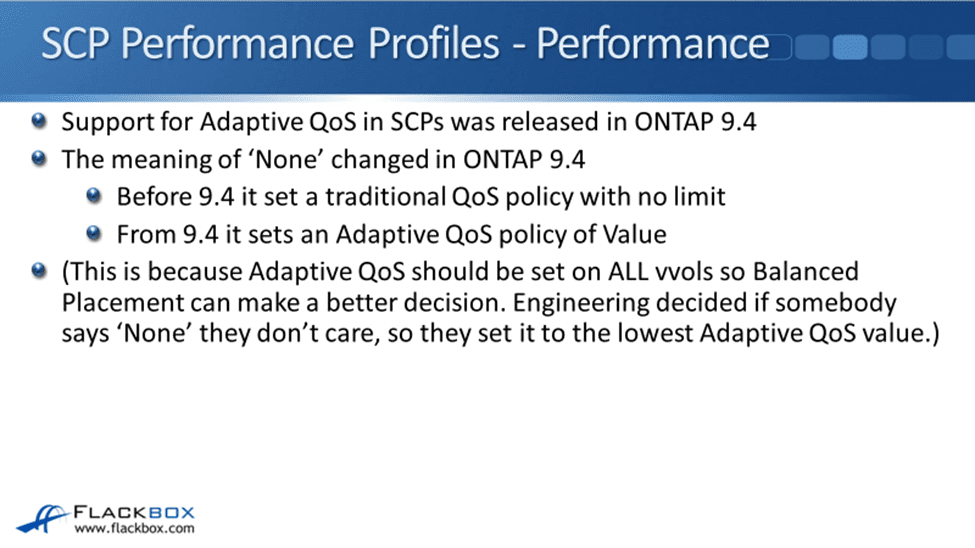

Support for adaptive QoS in the Storage Capability Profiles was released in ONTAP 9.4. Adaptive QoS has been around for longer than that, but for SCPs that came out in 9.4. Before 9.4, there was just traditional QoS available. The meaning of ‘None’ on the performance page changed in ONTAP 9.4. Before 9.4, it set a traditional QoS policy with no limit. From 9.4, it sets an adaptive QoS policy of value.

Back on the performance page, you see that you select one of the three values. You have to choose one of them, either None or QoS policy group where you can set the traditional Min and Max IOPS, or performance service level where from the dropdown, you select an adaptive QoS level.

So the meaning of ‘None’, that first option, changed in ONTAP 9.4. Before 9.4, when there was just traditional QoS available in your Storage Capability Profiles, it set a traditional QoS policy with no limit. That’s very intuitive. That’s what you would expect that it means, but from 9.4, what it actually does has changed.

From 9.4, it sets an adaptive QoS policy of value, which is not really intuitive. The reason for this is because adaptive QoS should be set on all VVols so that balanced placement can make a better decision. So on all VVols, we do want to have an adaptive QoS policy applied.
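The version-dependent meaning of ‘None’ can be summed up in a few lines. This is just a sketch of the behavior described here, assuming a plain "major.minor" version string. The function name and the returned labels are invented for illustration.

```python
def effective_policy_for_none(version: str) -> str:
    """What selecting 'None' on the performance page results in, per this tutorial.

    `version` is expected to look like "9.3" or "9.4.1" (major.minor at minimum).
    """
    major, minor = (int(part) for part in version.split(".")[:2])
    # Compare as numbers, not strings, so that "9.10" sorts after "9.4".
    if (major, minor) >= (9, 4):
        return "adaptive QoS: value"
    return "traditional QoS: no limit"


for v in ("9.3", "9.4", "9.7"):
    print(v, "->", effective_policy_for_none(v))
```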

NetApp engineering decided that if somebody says ‘None’ on the performance page, then they don’t really care what performance that virtual machine gets. So, the lowest adaptive QoS level, value, is what is applied. An adaptive QoS policy of value, however, can limit performance down to 75 IOPS for small datastores below 1 terabyte. Because it’s adaptive QoS, the amount of IOPS that the volume gets depends on the size of the volume.

If it’s a very small volume, it can end up with very few IOPS, and obviously, that can affect performance. If you want to make sure that does not happen, and you do want no limit, then set a traditional QoS policy with a high Max IOPS value.
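Here is a rough sketch of why small volumes are affected. It uses the 128 IOPS per TB figure for value and treats the 75 IOPS mentioned above as a floor. Treating it as a floor is my reading of "can limit performance down to 75 IOPS", so take that part as an assumption. The function name is made up.

```python
VALUE_IOPS_PER_TB = 128   # expected IOPS per TB for the value level
VALUE_FLOOR_IOPS = 75     # assumed floor for very small volumes


def value_policy_iops(size_tb: float) -> float:
    """Expected IOPS under the value level, never dropping below the floor."""
    return max(VALUE_FLOOR_IOPS, VALUE_IOPS_PER_TB * size_tb)


for size_tb in (0.25, 0.5, 1, 4):
    print(f"{size_tb} TB -> {value_policy_iops(size_tb)} IOPS")
```

A quarter-terabyte volume works out to 32 IOPS on paper, so it lands on the 75 IOPS floor, which is very little for a virtual machine. That is the situation the traditional QoS workaround above avoids.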

With your VVols, you can set the adaptive QoS value. You can also still set the traditional QoS value as well. It doesn’t have to be adaptive for VVols. It can also be traditional. For traditional datastores, it is traditional or nothing because adaptive is not supported on those.

Storage Capability Profiles – Attributes (FAS)

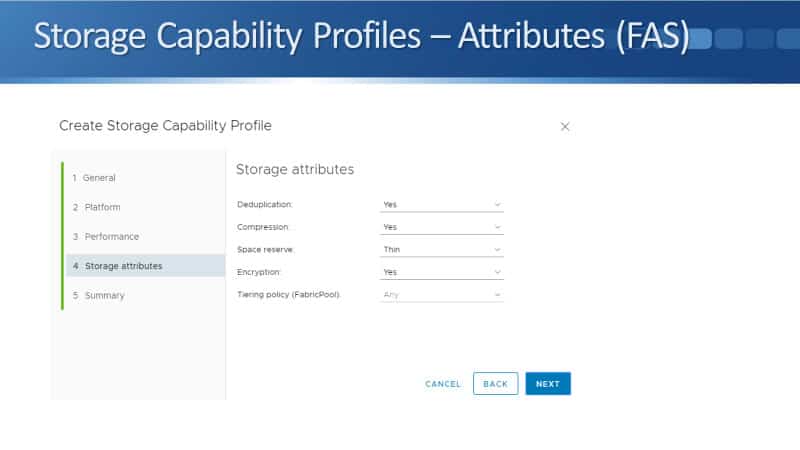

Moving on to the next page, which is the storage attributes. In here, you specify whether deduplication is enabled or not, whether compression is enabled or not, whether the space reserve is thin or thick provisioning, whether encryption is enabled or not, and also the fabric pool setting.

Those are your options for FAS systems. With FAS systems, the fabric pool is grayed out. It’s always going to be set to any, but you can specify the setting for the rest of them.

Storage Capability Profiles – Attributes (AFF)

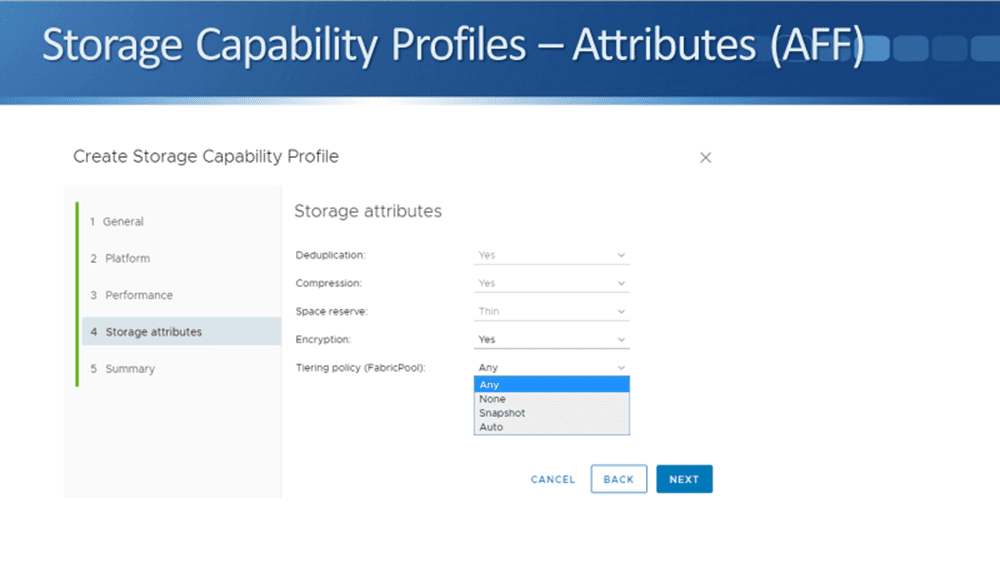

If you set the platform type as AFF, then you’re going to have different options here. The fields are the same, but what you can set has changed.

Deduplication and compression are enabled by default, and you can’t change that. The space reserve is going to be thin, and you cannot set it to thick. For encryption, you can specify whether you want that to be enabled or not, and you do have different options for fabric pool when you’re using AFF.
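The platform restrictions on the attributes page can be sketched as a simple validation function. Nothing here is a VSC API: the `Attributes` class, the `validate` function, and the field names are invented for illustration, and the fabric pool value "none" in the usage example is a placeholder for any setting other than "any".

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Attributes:
    deduplication: bool
    compression: bool
    space_reserve: str   # "thin" or "thick"
    encryption: bool
    fabric_pool: str     # tiering policy, e.g. "any"


def validate(platform: str, attrs: Attributes) -> list[str]:
    """Return the restrictions from this tutorial that the attributes violate."""
    problems: list[str] = []
    if platform == "AFF":
        if not attrs.deduplication:
            problems.append("deduplication is always enabled on AFF")
        if not attrs.compression:
            problems.append("compression is always enabled on AFF")
        if attrs.space_reserve != "thin":
            problems.append("space reserve must be thin on AFF")
    elif platform == "FAS":
        if attrs.fabric_pool != "any":
            problems.append("fabric pool is fixed to 'any' on FAS")
    else:
        raise ValueError(f"unknown platform: {platform}")
    return problems


thick_no_dedupe = Attributes(False, True, "thick", True, "any")
print(validate("FAS", thick_no_dedupe))  # allowed on FAS
print(validate("AFF", thick_no_dedupe))  # two violations on AFF
```

Encryption is left unchecked because you can choose it freely on both platforms.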

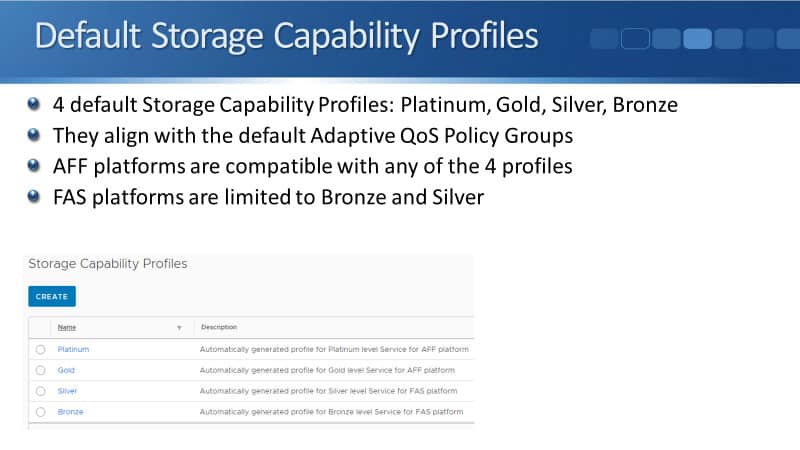

Default Storage Capability Profiles

There are four Storage Capability Profiles built into the system by default after you install the VSC plugin. Those are Platinum, Gold, Silver, and Bronze, and they align with the default adaptive QoS policy groups. When you select Platinum, that’s going to choose the extreme adaptive QoS policy group. When you select Gold, that’s going to set performance.

When you set Silver, that’s going to set value, and Bronze from version 9.4 does also set value as well. AFF platforms are compatible with any of the four profiles. FAS platforms are limited to Bronze and Silver because they don’t have enough performance to get up to the Gold and the Platinum level.
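As a quick reference, the mapping and the platform limits just described fit in two small tables. This is a sketch with made-up names (`DEFAULT_SCP_QOS`, `COMPATIBLE_SCPS`, `qos_level_for`), not something you would find in VSC itself.

```python
# Default SCP -> adaptive QoS level (Bronze ends up as value from 9.4)
DEFAULT_SCP_QOS = {
    "Platinum": "extreme",
    "Gold": "performance",
    "Silver": "value",
    "Bronze": "value",
}

# Which default SCPs each platform is compatible with
COMPATIBLE_SCPS = {
    "AFF": {"Platinum", "Gold", "Silver", "Bronze"},
    "FAS": {"Silver", "Bronze"},
}


def qos_level_for(platform: str, scp_name: str) -> str:
    """Return the adaptive QoS level for a default SCP, or fail if incompatible."""
    if scp_name not in COMPATIBLE_SCPS[platform]:
        raise ValueError(f"{scp_name} is not compatible with {platform}")
    return DEFAULT_SCP_QOS[scp_name]


print(qos_level_for("AFF", "Platinum"))
print(qos_level_for("FAS", "Bronze"))
```

Asking for Gold or Platinum on FAS raises an error in this sketch, mirroring the platform limit above.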

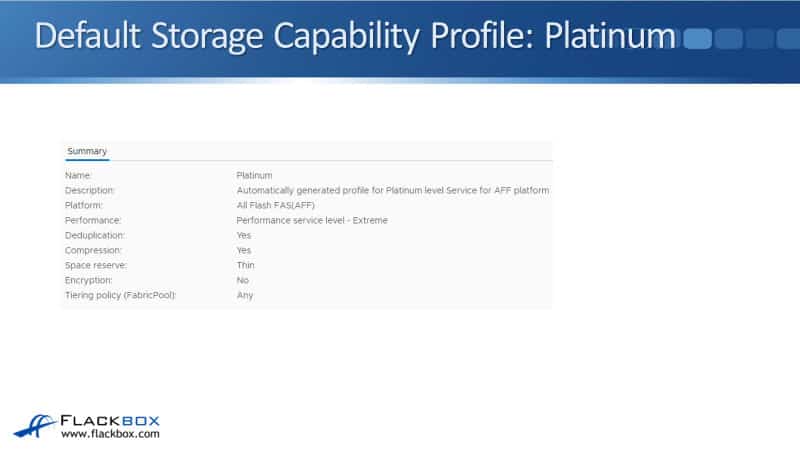

Default Storage Capability Profile: Platinum

Let’s have a look at the settings in those default profiles. For Platinum, the platform’s going to be AFF, and the performance is extreme. Deduplication and compression are enabled, space reserve is thin, encryption is turned off, and fabric pool is going to be set to any.

Default Storage Capability Profile: Gold

Then for Gold, it’s exactly the same settings apart from the performance level, which is performance rather than extreme. Those are the built-in profiles for the AFF platform. You can also create your own custom profiles. For example, if you wanted to turn encryption on, you could do that with a custom profile.

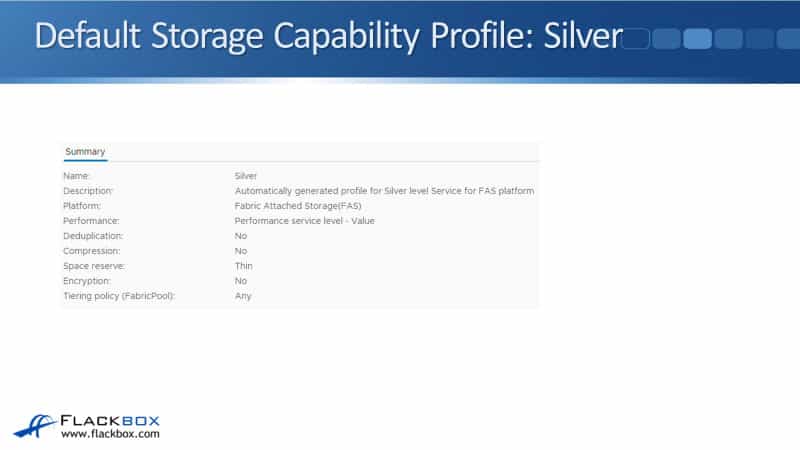

Default Storage Capability Profile: Silver

If you choose the default Storage Capability Profile of Silver, that is for the FAS platform, and deduplication and compression are turned off by default. Space reserve is thin, encryption is turned off, fabric pool is going to be any, and the performance service level is value.

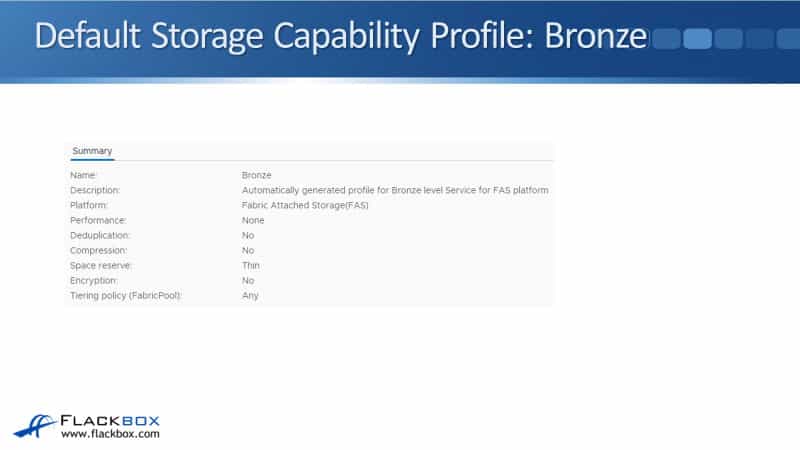

Default Storage Capability Profile: Bronze

If we have a look at Bronze, you’re going to see that performance is None, which actually ends up applying the value level as well. The other settings are the same as they were for Silver.

Deduplication and compression are no, space reserve is thin, encryption is no, and the tiering policy for fabric pool is any. Again, if you wanted to, you could create your own custom profile if you want to enable deduplication and compression, for example.
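If it helps to see the four defaults side by side, here they are as plain data, along with two custom profiles derived from them. The `Profile` class, its field names, and the custom profile names are hypothetical. Only the setting values come from the descriptions above.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Profile:
    name: str
    platform: str
    performance: Optional[str]   # None models the 'None' option on the performance page
    deduplication: bool
    compression: bool
    space_reserve: str
    encryption: bool
    fabric_pool: str


PLATINUM = Profile("Platinum", "AFF", "extreme", True, True, "thin", False, "any")
GOLD = replace(PLATINUM, name="Gold", performance="performance")
SILVER = Profile("Silver", "FAS", "value", False, False, "thin", False, "any")
BRONZE = replace(SILVER, name="Bronze", performance=None)

# Custom profiles: copy a default and change only what you need.
gold_encrypted = replace(GOLD, name="Gold-Encrypted", encryption=True)
silver_efficient = replace(SILVER, name="Silver-Efficient",
                           deduplication=True, compression=True)

for profile in (PLATINUM, GOLD, SILVER, BRONZE, gold_encrypted, silver_efficient):
    print(profile)
```

Deriving custom profiles from a default with `replace` is roughly what cloning and editing an SCP does in the UI: everything stays the same except the fields you change.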

SCPs and Datastore Provisioning

Now we’re getting to the point where we’re going to actually use the profile. What you can use the profile for is when you provision a datastore on your ONTAP storage for VMware through VSC. When you do that, there’s a dropdown to select the Storage Capability Profile for the datastore, and the datastore will be created with the capabilities specified in the SCP.

For example, if you provision a traditional NFS datastore and you choose a Storage Capability Profile where the space reserve is set to thin provisioning, then your ONTAP storage system is going to create a volume and enable NFS access to it. It’s going to be a thin provisioned volume, and it’s going to be mounted as a datastore on your ESXi hosts.

VVol datastores can span multiple volumes. Your traditional datastores are always going to be one volume. VVol datastores can be one volume, or they can be multiple volumes. Multiple Storage Capability Profiles can be selected when provisioning a VVol datastore, and you can specify which volumes to create as part of a VVol datastore and which SCP to apply to each volume.

For example, you could configure a VVol datastore from the VSC. You could say that you want it to be made up of four volumes, and you could have two of the volumes with deduplication turned on, and you could have two of the volumes with deduplication turned off.

When you provision that datastore, you specify how many volumes you want it to be made up of. You specify the size you want the volumes to be. You also specify the Storage Capability Profile on the volumes too, and the different volumes can have a different profile.
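The four-volume example can be modeled as a small data structure. This is purely illustrative: the class names, volume names, sizes, and SCP names ("Dedupe-On", "Dedupe-Off") are all invented, and it does not call any VSC API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class VolumeSpec:
    name: str
    size_gb: int
    scp: str  # name of the Storage Capability Profile applied to this volume


@dataclass(frozen=True)
class VVolDatastoreSpec:
    name: str
    volumes: tuple[VolumeSpec, ...]

    def total_size_gb(self) -> int:
        return sum(volume.size_gb for volume in self.volumes)

    def profiles(self) -> set[str]:
        return {volume.scp for volume in self.volumes}


spec = VVolDatastoreSpec(
    name="vvol_ds1",
    volumes=(
        VolumeSpec("vvol_ds1_vol1", 500, "Dedupe-On"),
        VolumeSpec("vvol_ds1_vol2", 500, "Dedupe-On"),
        VolumeSpec("vvol_ds1_vol3", 500, "Dedupe-Off"),
        VolumeSpec("vvol_ds1_vol4", 500, "Dedupe-Off"),
    ),
)

print(spec.total_size_gb(), sorted(spec.profiles()))
```

A traditional datastore would be the special case of exactly one volume and therefore exactly one profile.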

SCPs and Virtual Machine Deployment

The Storage Capability Profiles in VSC can be mapped to VM Storage Policies in vSphere for Storage Policy-Based Management. If you want to use the profile when you provision the Virtual Machine, you have another component, which is the VM Storage Policy.

This is configured on a different page in the vSphere client than the Storage Capability Profiles. The Storage Capability Profile is configured on the VSC plugin page. The VM Storage Policy is configured on the policies page in the vSphere client.

The VASA provider translates between ONTAP APIs and VMware APIs. A VM Storage Policy should be created in vSphere for each Storage Capability Profile, and it should reference the SCP by name.

So when you provision the datastore on your ONTAP storage, you can do that with the Storage Capability Profile from VSC. Later, when you provision your virtual machines onto those datastores, you can specify a profile there but that uses the VM Storage Policy, which is on a different page but is directly mapped to the Storage Capability Profile in VSC.

Let’s say that you’re using the Platinum and Gold Storage Capability Profiles. What you would do is you would go to the VM Storage Policies page in the vSphere client, and you would create two VM Storage Policies, also called Gold and Platinum. The Platinum one would map to the Platinum SCP, and the Gold one would map to the Gold SCP. Therefore, you have that one-to-one linkage there.

When you create a virtual machine, you can specify its vSphere VM Storage Policy, and a list of compatible traditional and VVol datastores to choose from will be shown. Whatever datastore you’ve got configured on the storage will be available as long as they are compatible. Once the virtual machine is provisioned, the VASA provider continues to check compliance and an alarm is generated if a VM goes out of compliance.

For example, let’s say that we have a couple of Storage Capability Profiles in VSC. One of them has high performance QoS, and it’s configured with thin provisioning. We’ll call that the thin SCP. Another one has low performance QoS, and it’s configured with thick provisioning. We’ll call that the thick SCP.

You would then go and create a couple of VM Storage Policies as well. You would call one Thick, which would map to the thick SCP. You would call the other one Thin, and that would map to the thin SCP.

Then when you provisioned a virtual machine, you would specify the VM Storage Policy that you wanted to use, either Thick or Thin. If you specified Thin, then it would go into the thin datastore. If you specified Thick, it would go into the thick datastore.
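The Thin and Thick example boils down to two lookups: VM Storage Policy to SCP, then SCP to the datastores provisioned with it. Here is a minimal sketch of that chain. The datastore names and the `compatible_datastores` function are hypothetical, and real compatibility checking is done by vSphere and the VASA provider rather than by a dictionary.

```python
# One-to-one mapping of vSphere VM Storage Policies to VSC profiles
VM_POLICY_TO_SCP = {"Thin": "thin SCP", "Thick": "thick SCP"}

# Which SCP each datastore was provisioned with (names are made up)
DATASTORE_SCP = {
    "ds_fast_thin": "thin SCP",
    "ds_slow_thick": "thick SCP",
}


def compatible_datastores(vm_policy: str) -> list[str]:
    """List the datastores whose SCP matches the chosen VM Storage Policy."""
    scp = VM_POLICY_TO_SCP[vm_policy]
    return sorted(name for name, ds_scp in DATASTORE_SCP.items() if ds_scp == scp)


print(compatible_datastores("Thin"))
print(compatible_datastores("Thick"))
```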

This makes provisioning your virtual machines easy: you just pick the VM Storage Policy from the dropdown, and that specifies the storage capabilities. It saves you from having to configure them one by one for each virtual machine.

Different VVols of the same virtual machine can be assigned different SCPs. With traditional datastores, you can still use your VM storage policies, and it’s going to go into the one volume for that particular datastore. If you choose a VVol datastore, you can have multiple volumes with different storage capabilities. You can give different capabilities to the different VVols of different virtual disks that make up that virtual machine if you want to.

For example, maybe you have a database where one of the VMDK files requires high performance and another one requires only low performance. You could put that virtual machine in a single VVol datastore, with one virtual disk getting high performance and another virtual disk getting low performance.

Storage Capability Profile Creation

Okay, so how do we create our Storage Capability Profiles? You can use one of the default SCPs, or you can create your own. You can create your own one from scratch. You can go to the Storage Capability Profile page, and you can say, add New, and then you will get that blank wizard where you can fill in all of the different capabilities yourself.

You can also clone and edit an existing default SCP or a custom one that you’d created earlier. You can also automatically generate a profile through VASA Provider analysis of an existing traditional datastore. Maybe you’ve already configured traditional datastores, and you didn’t have VSC installed in vSphere yet.

Then later, you installed VSC and wanted to start using Storage Capability Profiles. Once you’ve got the VASA Provider enabled as well, you can use it to scan the capabilities of your existing datastores, and it will automatically generate a Storage Capability Profile based on that.

Because you’ve got the VASA provider there, the vSphere client can query the ONTAP storage to find out the capabilities of the storage. For example, does it have deduplication enabled? Is it thin or thick provisioned? It can query the capabilities of that datastore and then automatically generate a Storage Capability Profile based on those discovered capabilities.
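Conceptually, auto-generation is "read the settings, write them down as a profile". The sketch below models only that idea. How the VASA Provider actually analyzes a datastore is not covered here, so the function name, the dictionary keys, and the generated profile name are all assumptions.

```python
from __future__ import annotations

# Capabilities this sketch copies from the datastore into the profile
CAPABILITY_KEYS = ("deduplication", "compression", "space_reserve", "encryption")


def generate_profile(datastore_name: str, discovered: dict) -> dict:
    """Build a profile dictionary from capabilities discovered on a datastore."""
    missing = [key for key in CAPABILITY_KEYS if key not in discovered]
    if missing:
        raise KeyError(f"capabilities not discovered: {missing}")
    profile = {key: discovered[key] for key in CAPABILITY_KEYS}
    profile["name"] = f"auto_{datastore_name}"  # hypothetical naming scheme
    return profile


discovered = {
    "deduplication": True,
    "compression": False,
    "space_reserve": "thin",
    "encryption": False,
}
print(generate_profile("nfs_ds1", discovered))
```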

Storage Mapping

If you do have existing datastores, you’ll typically use the VASA Provider to automatically generate the Storage Capability Profile. You can also manually map a Storage Capability Profile to an existing traditional datastore. You could use one of the default profiles, or you could create your own custom profile, and then you could map that to an existing traditional datastore.

Now, if you did not configure the Storage Capability Profile with matching settings for that datastore, then you will get an alarm about that because the VASA Provider monitors datastores for compatibility with your SCP and generates an alarm if any are out of compliance.

If you did do a manual mapping and got it wrong, you would get an alarm for that. Also, if you created a datastore in VSC using a particular Storage Capability Profile, and then an ONTAP administrator went and manually changed one of the settings, you would get an alarm about that as well because it would no longer be compliant. A datastore can match multiple profiles, but it can only be associated with one profile.
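The compliance idea can be sketched as a comparison between what the profile asks for and what the datastore actually has. This is a toy model, not how the VASA Provider is implemented: the function names and profile names are invented, and letting a profile list only some settings is an assumption made here to show how one datastore can match more than one profile.

```python
from __future__ import annotations


def non_compliant_settings(profile: dict, actual: dict) -> dict:
    """Return {setting: (expected, actual)} for every setting that differs."""
    return {
        key: (expected, actual.get(key))
        for key, expected in profile.items()
        if actual.get(key) != expected
    }


def matching_profiles(profiles: dict[str, dict], actual: dict) -> list[str]:
    """Names of all profiles the datastore currently satisfies."""
    return sorted(
        name for name, profile in profiles.items()
        if not non_compliant_settings(profile, actual)
    )


profiles = {
    "thin-only": {"space_reserve": "thin"},
    "thin-dedupe": {"space_reserve": "thin", "deduplication": True},
}
datastore = {"space_reserve": "thin", "deduplication": True, "compression": False}

print(matching_profiles(profiles, datastore))   # matches both profiles

# An ONTAP administrator changes the volume to thick provisioning by hand:
datastore["space_reserve"] = "thick"
print(non_compliant_settings(profiles["thin-dedupe"], datastore))
```

A non-empty result from `non_compliant_settings` is the condition that would correspond to an alarm. Matching two profiles is fine, but you would still associate the datastore with just one of them.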

Policy-Based Management Summary

Finally, let’s summarize Policy-Based Management. There are two elements to Policy-Based Management. There is vSphere’s VM Storage Policy, which is under Policies and Profiles, and there are VSC’s Storage Capability Profiles. The normal order of operations when you’re using VSC is you will create the Storage Capability Profile where you specify the characteristics that you want the storage to have.

You will then use VSC to provision the datastore, and when you provision the datastore, you specify the Storage Capability Profile you want to use, so it gets those characteristics. Then you map the VSC Storage Capability Profile to a VM Storage Policy. You create a new VM Storage Policy, and when you do that, you specify the VSC Storage Capability Profile that it is mapped to. You’ve got that one-to-one relationship there.

Then when you provision your virtual machines, it’s the VM Storage Policy that you select, and the virtual machine will be put into the correct datastore based on that. vSphere’s VM Storage Policy references VSC’s Storage Capability Profile.

Additional Resources

Configure Storage Capability Profiles: https://docs.netapp.com/us-en/vsc-vasa-provider-sra-97/manage/task-create-storage-capability-profiles.html

Creating and Editing Storage Capability Profiles: https://library.netapp.com/ecmdocs/ECMLP2843698/html/GUID-2D5B42CC-5453-426C-8D27-AA48151AD36A.html

Mapping Datastores to Storage Capability Profiles: https://docs.netapp.com/us-en/ontap-tools-vmware-vsphere-910/configure/task_map_storage_to_storage_capability_profiles.html

Storage Capability Profiles and VASA Provider: https://library.netapp.com/ecmdocs/ECMP12405937/html/GUID-8A74359A-0AB9-4378-ABEB-08FF410B8B51.html

Text by Libby Teofilo, Technical Writer at www.flackbox.com