In this NetApp tutorial, you'll learn about snapshot scheduling strategies: when you should take snapshots, how long you should retain them, and how you should set the snapshot copy reserve. Scroll down for the video and the text tutorial.

NetApp Snapshot Scheduling Strategies Video Tutorial


Snapshot Scheduling Strategies

There's a trade-off between retaining enough snapshot copies and retaining storage capacity because snapshots do take up space. You want to have enough snapshots going back far enough in time that it's convenient to do restores from them, but you don't want to have so many that they're using up too much disk space.

If users rarely lose files or typically notice lost files straight away, you can use the default snapshot copy schedule, which keeps 10 snapshots and is suitable for most environments. However, not all environments are typical, so you do need to tweak this sometimes.

Let's talk about why and where you would need to do that. On many systems, only 5% to 10% of the data changes each week, so the default schedule consumes 10% to 20% of disk space. This is because it keeps two weekly snapshots, so a 10% change per week comes to 20% when you're keeping two of them.
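The arithmetic above can be sketched in a few lines of Python. This is only a rough estimate: it assumes each weekly snapshot holds a full, non-overlapping week of changed blocks and it ignores the more frequent copies. The function name and parameters are illustrative and are not part of any NetApp tool.

```python
def estimate_snapshot_overhead(weekly_change_pct: float, weekly_copies_kept: int) -> float:
    """Rough estimate of the volume space (in percent) held by weekly snapshot copies.

    Assumption: every retained weekly copy pins one full week of changed blocks.
    """
    return weekly_change_pct * weekly_copies_kept


# The two ends of the range mentioned in the text, with two weekly copies kept.
for change in (5.0, 10.0):
    overhead = estimate_snapshot_overhead(change, weekly_copies_kept=2)
    print(f"{change:.0f}% weekly change -> about {overhead:.0f}% of the volume")
```

If you retain more weekly copies, or the rate of change is higher, the same multiplication shows how quickly the overhead grows.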

If users commonly lose files or do not typically notice lost files right away, you should delete the snapshot copies less often than you would if you used the default schedule. In that case, if you don't have a snapshot, you can still do a restore from your long-term offsite backup like SnapVault, or from tape, but it's more convenient to do it from snapshots.

So, if you are having to do restores quite often, or going back a bit further in time, it is more convenient if you've got snapshots to do that with.

You can create different snapshot copy schedules for different volumes. You might have volumes that have different rates of change, that hold different amounts of data, or that are used for different purposes and need restores more or less often. You can use a policy that is relevant for each specific volume.

On a very active volume, one that's got a lot of changes in data, you should schedule snapshot copies every hour and keep them for just a few hours, or turn off snapshot copies. Snapshots take up space as the active file system changes.

If there are a lot of changes to the active file system, your snapshots are going to take up more space more quickly, so you want to have fewer of them and keep them for a shorter period of time.

After the volume has been in use for some time, you should check how much disk space the snapshot copies consume and how often users need to recover lost files, and then adjust the schedule as necessary.

Obviously, you need to wait for a complete rotation of the snapshots. For example, if you're keeping 10 snapshots in total, it's going to take some time until you've got those first 10 snapshots. There's no point in checking anything until then.

But once you've got those 10 snapshots, because you're always going to keep 10 and they always go back to the same period in time, the amount of space that is being used by your snapshots should stay relatively the same.

Now, if it's a brand new volume, it's going to take some time for things to settle down and become constant. So wait some time, see what's happening, and then adjust your policy as necessary. This is also something that you should monitor every once in a while because the usage patterns of a particular volume can change over time.

Snapshot Copy Reserve

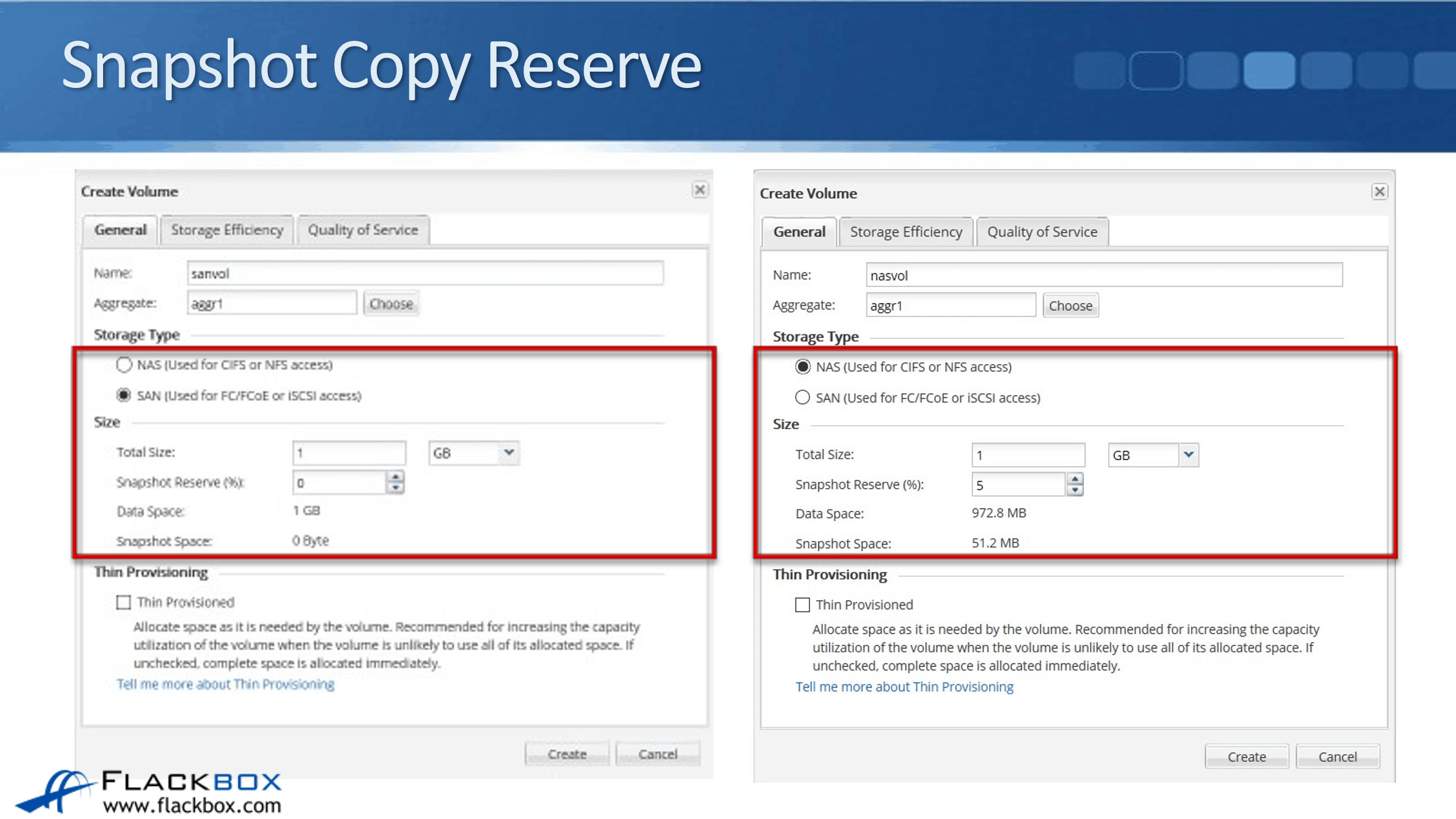

The next thing to talk about is the snapshot copy reserve. First, I want to show you what the defaults are. In the example below, you can see that on the left, I have created a SAN volume.

Remember that automated scheduled snapshots are turned off for SAN volumes, and also, because of that, you can see that the snapshot reserve is set to 0%. With your SAN volumes, it's recommended to have these managed by SnapCenter.

NAS volumes, however, can be managed by the ONTAP operating system itself. So with NAS volumes, automated scheduled snapshots are turned on by default, using that default schedule. The default snapshot reserve is 5%.

Snapshots are saved in that snapshot reserve space. New snapshots can, however, spill over and use space in the active file system if the copy reserve space is full with existing snapshots. The active file system cannot consume the snapshot copy reserve space.

If you've created a new NAS volume and you've gone with the defaults, and if you've got a default reserve space of 5%, as you create snapshots, they are going to go into that 5% space. The remaining 95% of the space is going to be used by the active file system.

If, however, you're creating more snapshots and you're already using up that 5% space, and you need to create new snapshots, then those extra snapshots will go above the 5%. They will start using the space that was there for the active file system.

This is a one-way thing though: the active file system cannot use the space of the snapshot copy reserve. The snapshot reserve is reserved purely for snapshot copies. So with the default, the active file system can use a maximum of 95% of the space of the volume.

If we've created a volume that is 1 gigabyte in size, the snapshot reserve space is 5%. That leaves 972 megabytes available for the active file system, and 51 megabytes being used by the snapshot reserve.
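The figures above can be reproduced with a short calculation. This is a minimal sketch that assumes 1 gigabyte means 1024 megabytes and that each figure is rounded down to a whole megabyte; the function name is illustrative.

```python
def split_volume(size_mb: int, reserve_pct: int) -> tuple:
    """Return (active_file_system_mb, snapshot_reserve_mb), each rounded down."""
    reserve_mb = size_mb * reserve_pct // 100
    active_mb = size_mb * (100 - reserve_pct) // 100
    return active_mb, reserve_mb


active_mb, reserve_mb = split_volume(size_mb=1024, reserve_pct=5)
print(f"Active file system: {active_mb} MB, snapshot reserve: {reserve_mb} MB")
```

Because both values are rounded down, they add up to 1023 MB rather than 1024 MB; the exact values are 972.8 MB and 51.2 MB.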

When enough disk space is available for snapshot copies in reserve, deleting files in the active file system frees disk space for new files, while the snapshot copies that reference those files consume only the space in the reserve.

If not enough space is reserved for snapshots, it's possible that deleting files in the active file system will not free space, as their blocks are locked by snapshots.

What this means is, say that you do have that reserve space of 5%, but your snapshots have gone above that. Let's say that snapshots are actually taking up 10% of the space in the volume. Well, if the volume is getting full, you might have a user who sees that it is getting full, so they start deleting some files and folders in the active file system.

But what can happen is, if the blocks that were being used by those files and folders are also locked by snapshots, the user is deleting files and folders, and then they go to check and they see that it hasn't freed up space in the active file system.

They'll be thinking, "Well, I saw the volume was getting full, I've gone and deleted a bunch of files and folders, but it's still full. What's going on?" That would, obviously, really confuse them and you don't want that to happen.
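The scenario above can be illustrated with a small model. This is a simplified sketch, not how ONTAP accounts for blocks internally: it assumes that blocks shared with a snapshot count against the active file system until the file is deleted, and against the snapshots afterwards. The class, its fields, and the 1000 MB volume size are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    size_mb: int
    reserve_pct: int
    active_used_mb: int = 0    # blocks referenced by the active file system
    snapshot_used_mb: int = 0  # blocks held only by snapshot copies

    @property
    def reserve_mb(self) -> int:
        return self.size_mb * self.reserve_pct // 100

    @property
    def active_free_mb(self) -> int:
        # Snapshots that outgrow the reserve spill into active file system space.
        spill = max(0, self.snapshot_used_mb - self.reserve_mb)
        return self.size_mb - self.reserve_mb - spill - self.active_used_mb

    def delete_locked_files(self, mb: int) -> None:
        """Delete files whose blocks are still locked by a snapshot copy."""
        self.active_used_mb -= mb
        self.snapshot_used_mb += mb


# Reserve is 5% (50 MB) but snapshots already use 10% (100 MB).
small = Volume(size_mb=1000, reserve_pct=5, active_used_mb=850, snapshot_used_mb=100)
before = small.active_free_mb
small.delete_locked_files(100)
print("5% reserve: free space before and after delete:", before, small.active_free_mb)

# Same snapshot usage, but a 20% reserve (200 MB) leaves room inside the reserve.
large = Volume(size_mb=1000, reserve_pct=20, active_used_mb=750, snapshot_used_mb=100)
before = large.active_free_mb
large.delete_locked_files(100)
print("20% reserve: free space before and after delete:", before, large.active_free_mb)
```

In this model, the delete on the first volume leaves the free space unchanged because the locked blocks simply spill further past the reserve, while on the second volume the same delete frees the full 100 MB.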

You want to make sure that the snapshot copy reserve is a little bit more than the actual amount of space that is being used by snapshots. This makes sure that the snapshots always stay within that snapshot copy reserve space and don't spill over into the active file system.

If a user does make changes in the active file system and deletes files and folders, they will see that as freed up disk space when they do it. It is important to reserve enough disk space for snapshot copies so that the active file system always has space available to create new files or modify existing ones.

On the other hand, don't reserve too much disk space for snapshot copies because extra space in the snapshot reserve is wasted. It cannot be used by the active file system.

For example, if the overhead for snapshot copies is only 10% and you set a snapshot copy reserve of 20%, then that extra 10%, the difference between 10% and 20%, is not going to be used for anything.

It's not being used by snapshots because it turns out they're not going above 10%. Also, it can't be used by the active file system. That's 10% of your disk space there that you've just blocked off and made unusable.

If the pattern of change holds, then in this example a reserve of 12% to 15% provides a safe margin to ensure that deleting files frees disk space when the active file system is full.

What you should do is monitor how much space your snapshots are taking up in each volume, and set the copy reserve to be a few percentage points above that. For example, if the snapshots are taking up 12% of the disk space, then set the reserve to about 15% or 16%, somewhere around there.
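That rule of thumb can be written as a small helper. This is a sketch only: the default margin of 3 percentage points is an assumption taken from the 12% to 15% example, and the function name is illustrative.

```python
import math


def suggest_reserve_pct(observed_snapshot_pct: float, margin_points: float = 3.0) -> int:
    """Suggest a snapshot reserve a few percentage points above observed usage."""
    return math.ceil(observed_snapshot_pct + margin_points)


print(suggest_reserve_pct(12))                     # margin of 3 points
print(suggest_reserve_pct(12, margin_points=4.0))  # margin of 4 points
```

Rerun the calculation whenever you review the volume, because the observed snapshot usage can drift as usage patterns change.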

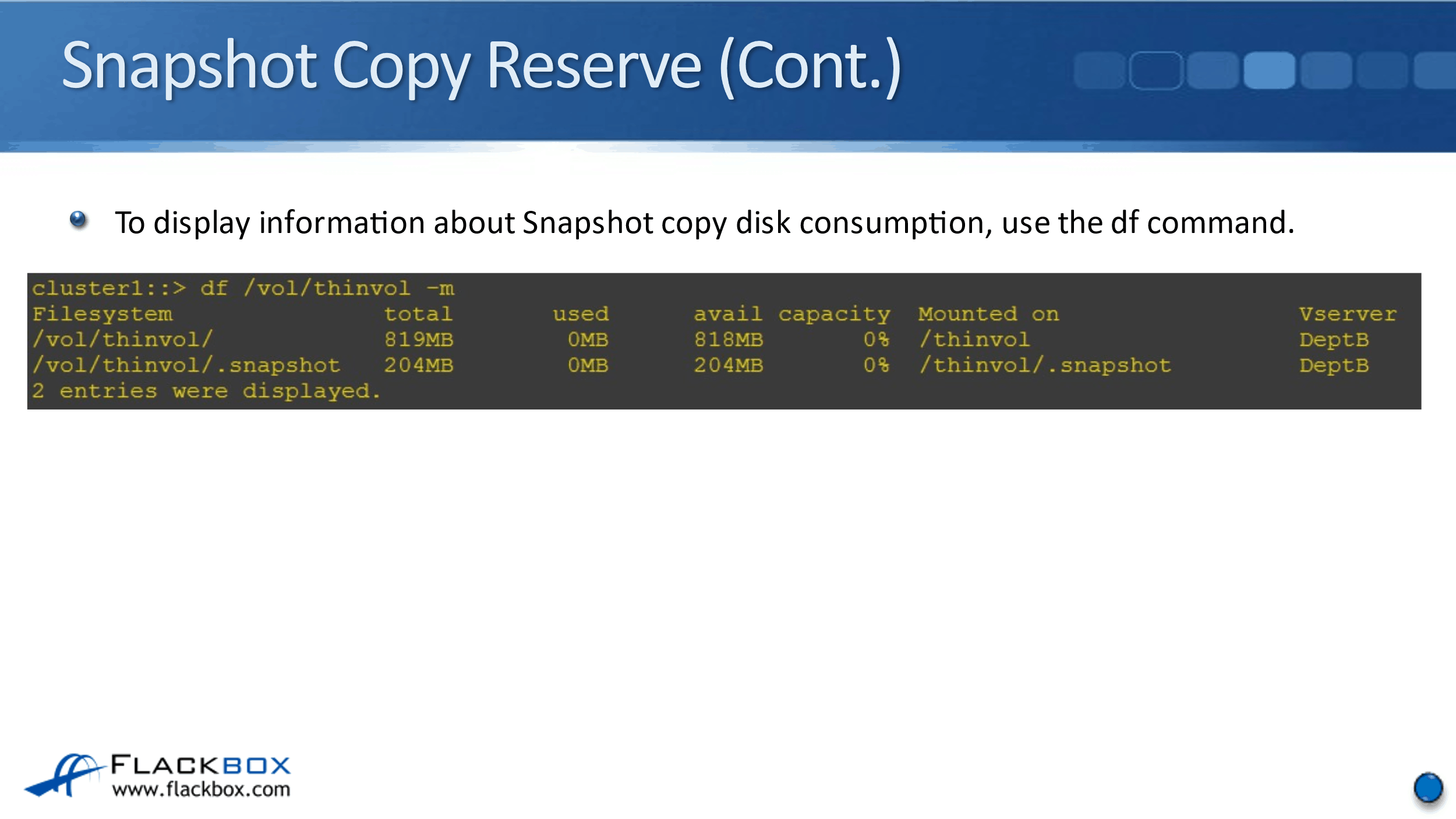

Finally, to display information about snapshot copy disk consumption, you can use the df command. You can see here, I've said:

df /vol/thinvol -m

In the output I get there, /vol/thinvol/ is the active file system space and /vol/thinvol/.snapshot is the snapshot copy reserve space. From this output, I can see that I created a one gigabyte volume called thinvol, and the snapshot reserve is currently at 20%.

Right now, I can see that the available size and the total size are the same, that's because I don't have any data in here yet. As I start putting data into that volume, the total will always remain the same, and then the available is going to change.

That's how I can monitor how much space is being used by my snapshots. The output shows how much space is left available, both in the snapshot copy reserve and in the active file system.

Additional Resources

Creation of Snapshot Copy Schedules: https://library.netapp.com/ecmdocs/ECMP1635994/html/GUID-5875FA9D-25F4-4468-9FB8-93707097EBEA.html

Working with Snapshot Copies: https://library.netapp.com/ecmdocs/ECMP1635994/html/GUID-8D7D39E4-AC1D-4C7A-8DE4-6DF8B88618DB.html

Understanding Snapshot Copy Reserve: https://library.netapp.com/ecmdocs/ECMP1196991/html/GUID-901983DC-6BF0-4C84-8B55-257403340C8B.html

Libby Teofilo

Text by Libby Teofilo, Technical Writer at www.flackbox.com
