In this NetApp tutorial, you’ll learn about the initial peering setup for SnapMirror and SnapVault when replicating between different clusters.

NetApp SnapMirror and SnapVault Initial Peering Setup Video Tutorial

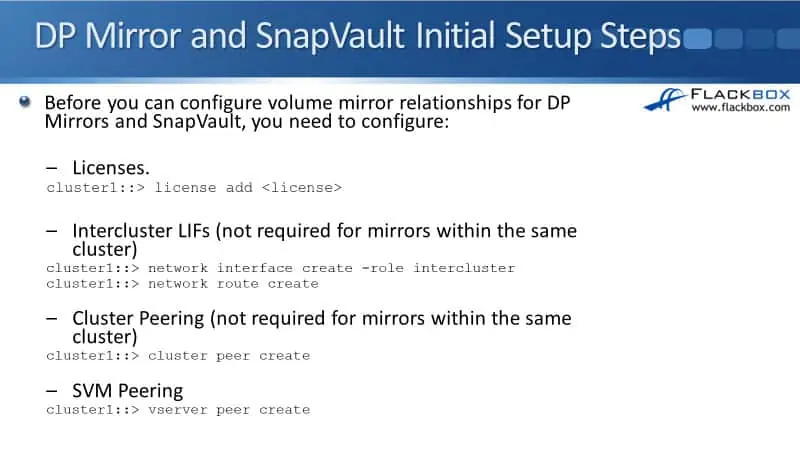

DP Mirror and SnapVault Initial Setup Steps

The first step is to add your licenses. In later versions of ONTAP, you only need to add the SnapMirror license on your source and destination clusters. The same license is used for both SnapMirror and SnapVault.

If you’re using SnapMirror Synchronous, you must also add the SnapMirror Synchronous license. The command to add a license is ‘license add’. The next step is to add your intercluster logical interfaces (LIFs).

Replication traffic going between the clusters cannot use the normal LIFs that serve your client data access. You need to use dedicated intercluster LIFs for this.

The command to create these logical interfaces is the same one you use for normal client data LIFs. The difference is that you need to specify ‘-role intercluster’.

If you are replicating within the same cluster, you don’t need Intercluster LIFs. Far more commonly, though, SnapMirror and SnapVault replicate between different clusters. In that case, you use the ‘network interface create’ command to create the LIFs.

You can use a subnet to specify the IP addresses on your LIFs. If you’re using a subnet, the default gateway will be configured there for the routing. If you’re not using a subnet, you’ll need to configure the default gateway separately with the ‘network route create’ command.
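As a rough illustration of the two cases above (a subnet versus an explicit address plus a separate default route), the following Python sketch only assembles the command strings and does not connect to a cluster. The function name, the cluster, node, port, LIF and subnet names, and the IP addresses are hypothetical placeholders. The options follow the ‘-role intercluster’ syntax described in this tutorial; newer ONTAP releases may expect a service policy in place of ‘-role’, so check the syntax against your version.

```python
def intercluster_lif_commands(cluster, node, port, lif_name,
                              address=None, netmask=None,
                              subnet=None, gateway=None):
    """Return ONTAP CLI lines (as strings) for one intercluster LIF.

    Nothing is executed; the strings are meant to be reviewed and pasted.
    All names and addresses passed in are placeholders chosen by the caller.
    """
    base = (
        f"network interface create -vserver {cluster} -lif {lif_name} "
        f"-role intercluster -home-node {node} -home-port {port}"
    )
    if subnet is not None:
        # With a subnet, the address and default gateway come from the subnet.
        return [f"{base} -subnet-name {subnet}"]

    if address is None or netmask is None:
        raise ValueError("address and netmask are required when no subnet is given")

    commands = [f"{base} -address {address} -netmask {netmask}"]
    if gateway is not None:
        # Without a subnet, the default route is configured separately.
        commands.append(
            f"network route create -vserver {cluster} "
            f"-destination 0.0.0.0/0 -gateway {gateway}"
        )
    return commands


# Hypothetical example: one LIF on the first node, no subnet.
for line in intercluster_lif_commands(
    "cluster1", "cluster1-01", "e0c", "intercluster1",
    address="10.0.1.11", netmask="255.255.255.0", gateway="10.0.1.1",
):
    print(line)
```

You would repeat this for every node in the cluster, since each node needs at least one intercluster LIF.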

Next, you need to configure the cluster and SVM peering. Again, the peering is for when the replication is going between two different clusters. You peer the clusters first with ‘cluster peer create’, and then peer the SVMs with ‘vserver peer create’.

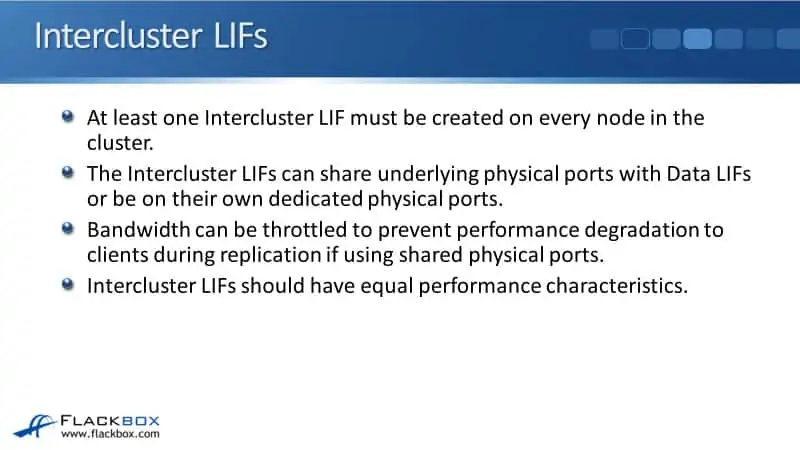

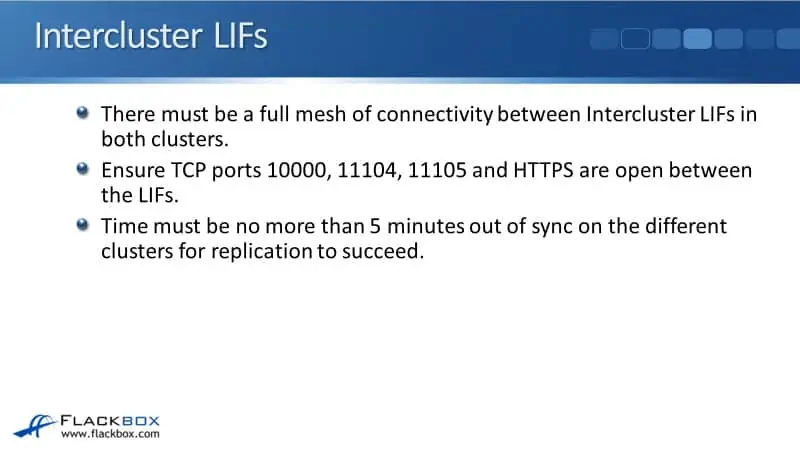

Intercluster LIFs

Starting with the Intercluster LIFs: at least one Intercluster LIF must be created on every node in the cluster. The Intercluster LIFs can share underlying physical ports with Data LIFs or use their own dedicated physical ports.

If you are using shared physical ports, the bandwidth can be throttled to prevent performance degradation for clients during replication. If client data access runs on the same physical ports and you don’t want replication to take too much bandwidth away from it, you can throttle your intercluster replication traffic.

The other option is to put the Intercluster LIFs on separate, dedicated physical interfaces, so that replication does not affect your client data traffic. Your Intercluster LIFs should run on the same type of underlying physical ports, with equal performance characteristics.

There must be a full mesh of connectivity between Intercluster LIFs in both clusters. So in both clusters, you need to configure an Intercluster LIF on every node and ensure that all of those LIFs have connectivity to each other.
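To make the full mesh requirement concrete, here is a minimal Python sketch that lists every local/remote LIF pair that needs connectivity and reports which pairs you have not yet verified. The function names and IP addresses are hypothetical and used only for illustration.

```python
from itertools import product


def full_mesh_pairs(local_lifs, remote_lifs):
    """Every (local, remote) intercluster LIF pair that must be able to communicate."""
    return list(product(local_lifs, remote_lifs))


def missing_pairs(local_lifs, remote_lifs, verified):
    """Pairs from the full mesh that have not been verified as reachable yet."""
    verified = set(verified)
    return [pair for pair in full_mesh_pairs(local_lifs, remote_lifs)
            if pair not in verified]


# Hypothetical addresses: two nodes per cluster, one intercluster LIF per node.
cluster1_lifs = ["10.0.1.11", "10.0.1.12"]
cluster2_lifs = ["10.0.2.11", "10.0.2.12"]

for local, remote in full_mesh_pairs(cluster1_lifs, cluster2_lifs):
    print(f"{local} <-> {remote}")
```

With two nodes on each side this gives four pairs. The number of pairs is the product of the LIF counts in the two clusters, so it grows quickly as clusters get larger.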

Ensure TCP ports 10000, 11104, 11105, and HTTPS are open between the LIFs. If you’ve got firewalls between the two clusters, make sure those ports are open for the intercluster replication traffic.
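As a hedged sketch, a quick TCP probe from a host on the intercluster network can give a first indication of whether a firewall is blocking these ports. This is only an approximation: the probe comes from your host rather than from a LIF, and a failed connection can also mean that nothing is listening on that port. The port list is taken from this tutorial (443 is assumed for HTTPS), and the function names and the address in the usage note are hypothetical.

```python
import socket

# Ports listed in this tutorial; 443 is the standard HTTPS port.
INTERCLUSTER_TCP_PORTS = (10000, 11104, 11105, 443)


def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_intercluster_ports(host, ports=INTERCLUSTER_TCP_PORTS, timeout=3.0):
    """Probe each port on one remote intercluster LIF and return {port: reachable}."""
    return {port: tcp_port_open(host, port, timeout) for port in ports}
```

For example, `check_intercluster_ports("10.0.2.11")` would return a dictionary showing which of the four ports accepted a connection from the machine running the script.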

For replication to succeed, the clocks on the two clusters must be no more than five minutes out of sync. You should use an NTP server in your environment to ensure that both clusters keep the same time and stay within that five-minute tolerance.
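The five-minute rule is easy to express as a check. The sketch below assumes you have already read the current time from each cluster by some means and simply compares the two values; the function name and timestamps are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Maximum clock difference stated in this tutorial.
MAX_SKEW = timedelta(minutes=5)


def clocks_within_tolerance(time_a, time_b, max_skew=MAX_SKEW):
    """Return True if the two cluster clocks differ by no more than max_skew."""
    return abs(time_a - time_b) <= max_skew


# Hypothetical readings taken from the source and destination clusters.
source_time = datetime(2024, 1, 15, 12, 0, 0, tzinfo=timezone.utc)
destination_time = datetime(2024, 1, 15, 12, 3, 30, tzinfo=timezone.utc)

print(clocks_within_tolerance(source_time, destination_time))
```

Using timezone-aware timestamps avoids false alarms when the two clusters are configured with different time zones.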

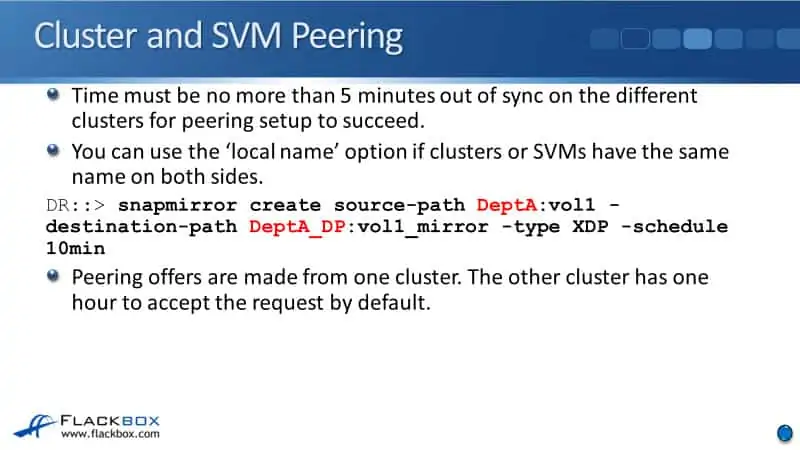

Cluster and SVM Peering

Also, for the cluster and SVM peering, there must be no more than five minutes time difference between the two clusters. Now, you can use a local name option if clusters or SVMs have the same name on both sides. This capability came out in later versions of ONTAP.

In previous versions of ONTAP, the SVMs on both sides had to have unique names. You couldn’t have SVMs with the same name on both clusters.

The reason for that is in the SnapMirror create command for the paths, you have to specify the SVM name and the volume name. That’s both for the source path and for the destination path. You don’t specify the cluster name.

For example, say we’re configuring this on the destination side. If the destination cluster had an SVM named DeptA, and you were trying to replicate from a source SVM also called DeptA, the system wouldn’t know which one you were referring to.

Therefore, in previous versions, the SVMs on all clusters that you were replicating between always needed unique names to avoid that confusion.

In later versions of ONTAP, the capability was added to use the ‘local name’ option. Using the same example again, if the destination cluster has an SVM which is also called DeptA, I can say that the local name for DeptA on the remote cluster is DeptA_Source, for example. That allows you to configure a different name, which is known locally.

Obviously, this can make things confusing, so you would only do this if you had to. If the clusters were created before you realized that you were going to be replicating between them, you ended up with SVMs with the same name, and it’s really not possible to change the SVM name, then there is a workaround using the ‘local name’ option.

But if you have the opportunity to do proper planning beforehand, when planning out your clusters and the SVM naming, make sure that the SVMs have unique names across all the different clusters.
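A planning check for this can be sketched in a few lines of Python. The example below compares the SVM names on two clusters, reports any collisions, and proposes a locally known alias in the style of the DeptA_Source example above. The function names and the SVM lists are hypothetical, and the sketch does not talk to ONTAP.

```python
def find_name_collisions(local_svms, remote_svms):
    """Return SVM names that exist on both clusters, sorted for stable output."""
    return sorted(set(local_svms) & set(remote_svms))


def propose_local_names(collisions, suffix="_Source"):
    """Map each colliding remote SVM name to an alias that is unique locally."""
    return {name: f"{name}{suffix}" for name in collisions}


# Hypothetical SVM inventories for the destination and source clusters.
destination_svms = ["DeptA", "DeptB"]
source_svms = ["DeptA", "DeptC"]

collisions = find_name_collisions(destination_svms, source_svms)
print(collisions)
print(propose_local_names(collisions))
```

Running a check like this before peering shows whether renaming an SVM or falling back on the ‘local name’ workaround will be necessary.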

When configuring the peering, the offer is made from one cluster, and the other cluster has one hour to accept the request by default. So you put the peering offer in on one side, and then on the other side you have to accept that offer. You have one hour to do so by default, but you can specify a longer time period if you want to.
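The offer-and-accept flow can be outlined with another small Python sketch that only builds command strings. It reflects my understanding of the ‘cluster peer create’, ‘vserver peer create’ and ‘vserver peer accept’ syntax, so verify the options against your ONTAP version. The function names, SVM and cluster names, and addresses are hypothetical.

```python
def local_peering_commands(peer_lif_addresses, local_svm, peer_svm, peer_cluster):
    """Commands run on the cluster that makes the offers (strings only)."""
    return [
        # Cluster peering points at the intercluster LIF addresses of the peer.
        "cluster peer create -peer-addrs " + ",".join(peer_lif_addresses),
        # SVM peering is offered once the clusters are peered.
        f"vserver peer create -vserver {local_svm} -peer-vserver {peer_svm} "
        f"-peer-cluster {peer_cluster} -applications snapmirror",
    ]


def remote_accept_command(local_svm, peer_svm):
    """Command run on the other cluster to accept the SVM peering offer."""
    return f"vserver peer accept -vserver {peer_svm} -peer-vserver {local_svm}"


# Hypothetical example: cluster1 offers, cluster2 accepts.
for line in local_peering_commands(
    ["10.0.2.11", "10.0.2.12"], "DeptA_SVM", "DeptA_DR_SVM", "cluster2"
):
    print(line)
print(remote_accept_command("DeptA_SVM", "DeptA_DR_SVM"))
```

The other cluster also needs its own ‘cluster peer create’ pointing back at the first cluster’s intercluster LIF addresses, which you can generate by calling the first function with the roles swapped.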

NetApp SnapMirror and SnapVault Initial Peering Setup Configuration Example

This configuration example is an excerpt from my ‘NetApp ONTAP 9 Complete’ course. Full configuration examples using both the CLI and System Manager GUI are available in the course.

Additional Resources

Cluster and SVM peering overview: https://docs.netapp.com/us-en/ontap-sm-classic/peering/

Create the SnapMirror relationship (Beginning with ONTAP 9.3): https://docs.netapp.com/us-en/ontap-sm-classic/volume-disaster-prep/task_creating_snapmirror_relationships_93_later.html

Create a SnapVault relationship (Beginning with ONTAP 9.3): https://docs.netapp.com/us-en/ontap-sm-classic/volume-backup-snapvault/task_creating_snapvault_relationship_93_later.html

NetApp SnapVault Tutorial: https://www.flackbox.com/netapp-snapvault-tutorial

Text by Libby Teofilo, Technical Writer at www.flackbox.com

Libby’s passion for technology drives her to constantly learn and share her insights. When she’s not immersed in the tech world, she’s either lost in a good book with a cup of coffee or out exploring on her next adventure. Always curious, always inspired.