In this NetApp training tutorial, you’ll learn about thin provisioning for SAN protocols. You can thin or thick provision the LUN in the volume, just as you can thin or thick provision the volume in the aggregate. You’ll also learn about fractional reserve: why we need it and how it works.

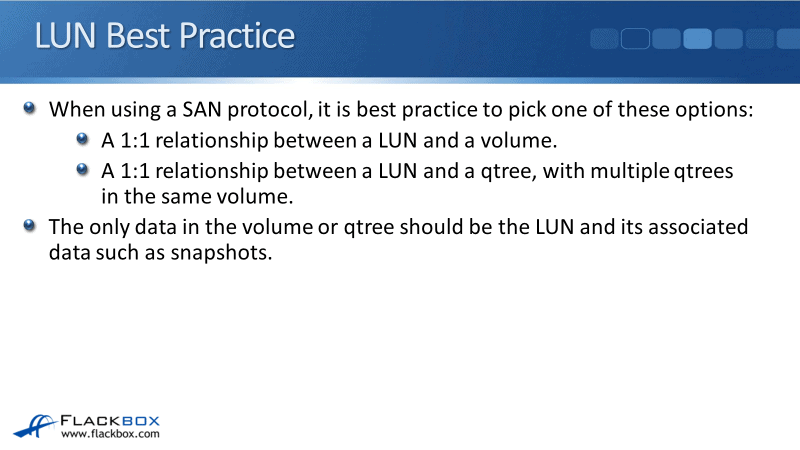

LUN Best Practice

When using a SAN protocol, it's best practice to pick one of these options:

- One-to-one relationship between a LUN and a volume. This means that when you create a LUN, you have a volume specifically dedicated to that LUN.

- One-to-one relationship between a LUN and a qtree, with multiple qtrees in the same volume. When you create a LUN, you have a qtree dedicated just to that LUN with nothing else in it, but you can have multiple LUNs in qtrees in the same volume.

The reason you would have multiple LUNs in qtrees in the same volume, and the reason this used to be done fairly regularly, was deduplication.

If you had a lot of LUNs containing similar data that could be deduplicated, it was a good idea to put them all in the same volume, because deduplication worked at the volume level. When you did that, you would put each LUN in its own dedicated qtree.

But in later versions of ONTAP, we can do deduplication across different volumes in the same aggregate. So now you can go with a one-to-one relationship between the LUN and the volume.

If you want deduplication, you can put those LUNs in the same aggregate and you will still get it. It's just easier for administration if you have that one-to-one relationship between the LUN and the volume.

Again, the only data in the volume or the qtree should be the LUN and its associated data, such as snapshots. Don't mix any other data into the volume or qtree that holds the LUN.

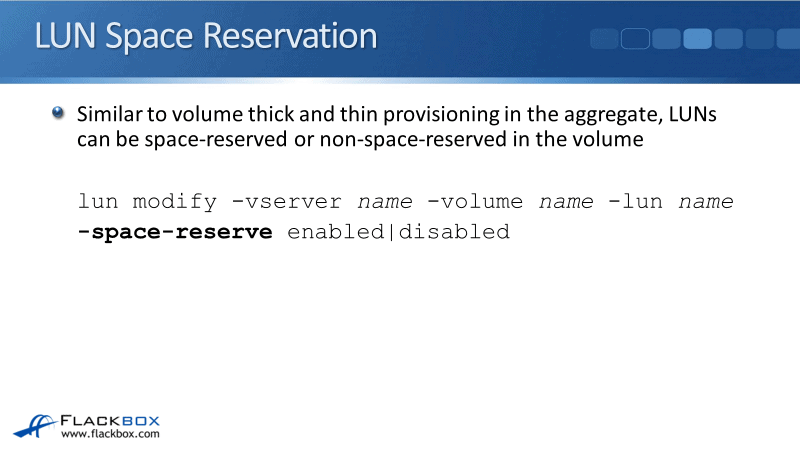

LUN Space Reservation

Similar to thick and thin provisioning of volumes in the aggregate, LUNs can be either:

- Space-reserved, which means the LUN is thick provisioned.

- Non-space-reserved, which means the LUN is thin provisioned.

The command for doing this is:

lun modify -vserver name -volume name -lun name -space-reserve enabled|disabled

The field is -space-reserve. You can either enable it to thick provision the LUN or disable it to thin provision the LUN.

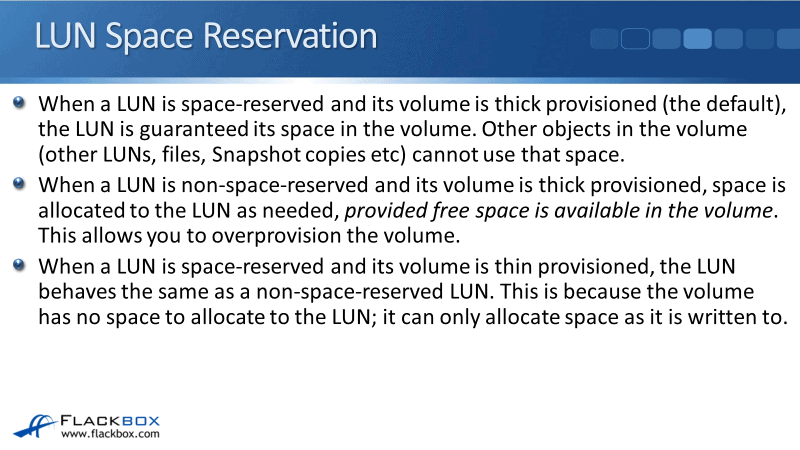

When a LUN is space-reserved and its volume is thick provisioned, which is the default for both, the LUN is guaranteed its space in the volume and the volume is guaranteed its space in the aggregate, so that space is reserved for the LUN.

When a LUN is non-space-reserved (thin provisioned) and its volume is thick provisioned, space is allocated to the LUN as needed, provided free space is available in the volume. This allows you to over-provision the volume.

Say you have a thick provisioned volume with thin provisioned LUNs in it. If the volume is sized at 1TB, you could have four 500GB LUNs in there. You can provision more space to your LUNs than is available in the volume.
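
The over-provisioning arithmetic in this example can be sketched in a few lines of Python. This is only an illustration: the function name `overcommit_ratio` is hypothetical, and 1TB is treated as 1000GB to keep the numbers round.

```python
def overcommit_ratio(volume_gb, lun_sizes_gb):
    """Return total provisioned LUN capacity divided by the volume size."""
    return sum(lun_sizes_gb) / volume_gb

# A 1TB volume (assumed to be 1000GB) holding four 500GB thin provisioned LUNs.
ratio = overcommit_ratio(1000, [500, 500, 500, 500])
print(f"LUNs are provisioned at {ratio:.1f}x the volume size")
```

A ratio above 1.0 means the LUNs could not all be filled at once, which is why free space in the volume needs to be monitored when thin provisioning.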

When a LUN is space-reserved and its volume is thin provisioned, the LUN behaves the same as a non-space-reserved LUN. That's because the volume has no guaranteed space to allocate to the LUN. The volume is thin provisioned, so space can only be allocated as data is written.
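
The combinations described above can be summarized as a small lookup. This is a simplified sketch, not ONTAP code: the function name `lun_space_behavior` and the wording of the results are made up for illustration, and the case of a non-space-reserved LUN in a thin provisioned volume is inferred rather than stated in the text.

```python
def lun_space_behavior(lun_space_reserved: bool, volume_thick: bool) -> str:
    """Describe how space is handed to a LUN (simplified model of the text above)."""
    if not volume_thick:
        # A thin provisioned volume has no guaranteed space to pass on,
        # so the LUN's own reservation setting makes no practical difference.
        return "allocated as written; no guarantee from the aggregate"
    if lun_space_reserved:
        return "guaranteed in the volume, and the volume is guaranteed in the aggregate"
    return "allocated as needed from the volume's free space; volume can be over-provisioned"

# Print all four combinations of the two settings.
for reserved in (True, False):
    for thick in (True, False):
        print(f"space_reserved={reserved!s:5} thick_volume={thick!s:5} -> "
              f"{lun_space_behavior(reserved, thick)}")
```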

Now you might be thinking, “If I've got that one-to-one relationship between the volume and the LUN, why do I need to thick provision the LUN? The LUN is the only thing in the volume, so it's not competing with anything else for that space.”

That would be true if there were no other objects in the volume. In that case there wouldn't be any point in configuring thick provisioning for the LUN. The volume provisioning setting alone would control whether the LUN was guaranteed its physical space in the aggregate or not.

But there might be multiple LUNs in the volume. If you've got each LUN assigned to a qtree and there are multiple qtrees in the same volume, then all those LUNs are contending for the same space in the volume. So the LUN provisioning setting is going to have an effect.

Even more likely, there are going to be snapshots in the volume, and the snapshots take up space in that volume. If you thick provision the LUN, the LUN is guaranteed its space and the snapshots cannot eat into it.

Space-Reserved LUNs and Snapshots

When a LUN is space-reserved and its volume is thick provisioned, which is the default, then the LUN is guaranteed its space. When data is first written to the LUN, there's guaranteed to be space for it. However, we can still run into an issue here.

If snapshots are being taken, which they probably will be, then when blocks are overwritten or deleted they will be locked in the snapshot and take up additional space in the volume.

That's just the way that snapshots work. Whenever we delete or overwrite a file whose blocks are captured in a snapshot, the snapshot keeps those blocks locked and starts to take up space.

Overwrites to a LUN can therefore fail because they would cause the volume to become full. Even with a space-reserved LUN in a thick provisioned volume, you can still end up with writes failing.

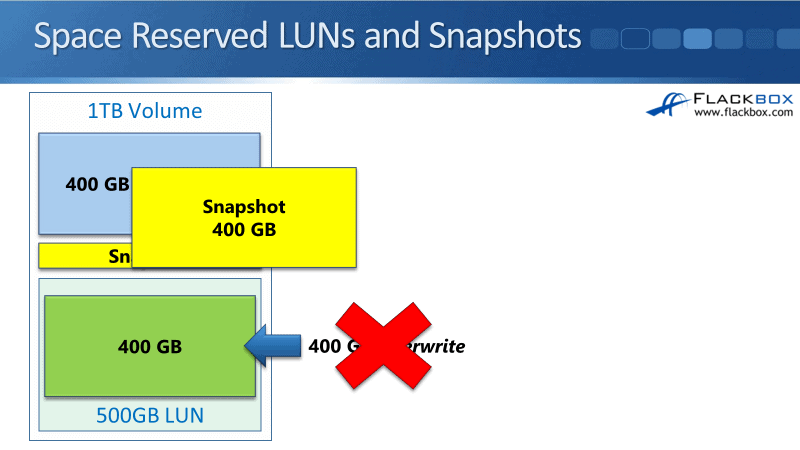

Let's say that we have a 1TB volume. In that volume, we create a 500GB LUN, and both are thick provisioned. Then we write 400GB of data to that LUN and take a snapshot of the volume.

When we take the snapshot, its size is zero because no blocks have changed in the volume yet, so it isn't locking anything.

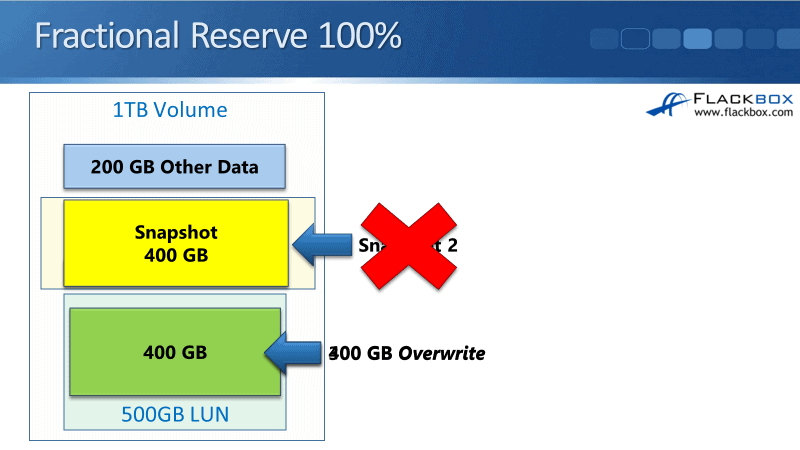

Now let's say that we write 400GB of other data to the volume. It could be another LUN or it could be files, although we should not be writing files to that same volume. We then overwrite 400GB worth of data in the LUN. We're writing new data by editing the files that are in the LUN, so we've got a 400GB overwrite.

When that happens, all 400GB is going to take up space in the snapshot because it's locking those blocks. If you look now, we've got 400GB worth of data in the LUN.

We've also got 400GB of other data in the volume, and the snapshot would take up 400GB of space. That's 1.2TB and we've only got a 1TB volume, so it doesn't fit and the overwrite will fail.
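
The arithmetic of this example can be checked with a short Python sketch. It follows the simplified accounting used in the walkthrough (LUN data plus other data plus snapshot growth), treats 1TB as 1000GB, and uses a hypothetical function name, `overwrite_fits`. It is not a model of how ONTAP accounts for space internally.

```python
def overwrite_fits(volume_gb, lun_data_gb, other_data_gb, overwrite_gb):
    """Return (fits, total_needed_gb) after overwriting LUN data held by a snapshot."""
    # Overwritten blocks stay locked in the snapshot, so the snapshot grows by
    # the amount overwritten, up to the amount of data it originally captured.
    snapshot_gb = min(overwrite_gb, lun_data_gb)
    total_needed_gb = lun_data_gb + other_data_gb + snapshot_gb
    return total_needed_gb <= volume_gb, total_needed_gb

fits, needed = overwrite_fits(volume_gb=1000, lun_data_gb=400,
                              other_data_gb=400, overwrite_gb=400)
print(f"needed={needed}GB fits={fits}")  # needed=1200GB fits=False
```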

So even with a thick provisioned volume and a thick provisioned LUN, overwrites can fail. The first writes to the LUN are always going to succeed, but it's still possible for overwrites to fail. Fortunately, we have a fix for this: the fractional reserve setting.

Fractional Reserve

Fractional reserve, also known as LUN overwrite reserve, enables you to reserve space for snapshot copy overwrites for LUNs when all other space in the volume is used.

The fractional reserve must be set to either 0% or 100%, not a value in between. It's either on or off, enabled or disabled. You might be wondering, "Why do I set it to 0% or 100%? Why don't I just enable or disable it?"

The reason is that in previous versions of ONTAP, you could set it to a value between 0% and 100%. The command still takes the setting as a percentage, but in the latest versions of ONTAP it has to be 0 or 100.

The fractional reserve is set at the volume level, not at the LUN level. If it's set to 100%, then whenever a snapshot is taken, its maximum potential size is reserved in the volume. If it's set to 0%, it's not.

Fractional Reserve 100%

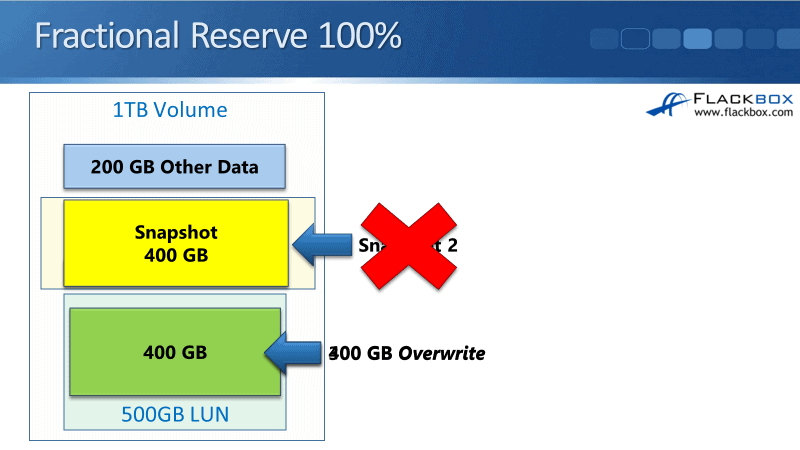

Using the same example again, we've got a 1TB volume. We create a 500GB LUN in the volume, and both are thick provisioned. We write our 400GB worth of data into the LUN and then take a snapshot. When we first take the snapshot, its size is zero because we don't have any blocks locked there yet.

Now when we take that snapshot, even though the snapshot hasn't grown in size yet, the maximum potential size of that particular snapshot would be 400GB. That is because that's how much data was in the LUN in the volume when we took that snapshot.

When the fractional reserve is enabled (set to 100%), whenever you create a snapshot, the maximum size that the snapshot could grow to is reserved in the volume and can't be used by anything else.

Now, we could write other data to that same volume, but we'd be limited to 200GB. We can't write any more than that because of the fractional reserve. Then we do our 400GB overwrite again. Because we have that space reserved for the snapshot, the snapshot can grow to a size of 400GB.

The snapshot will grow to 400GB because the blocks that were in place when the snapshot was taken have now changed, so they are held in the snapshot. Our overwrite works: enabling the fractional reserve makes sure that overwrites are always going to work.

We could then go and write another 300GB worth of data to the LUN, again overwriting existing data, and that will work just fine as well.

The snapshot is a point-in-time copy of what was in the file system when we took it. It's read-only, so nothing in it changes. We can still do another overwrite, but the snapshot stays the same.

If we then tried to take another snapshot, we'd have 300GB worth of new data. Taking snapshot two would need to reserve 300GB of space in the volume. That 300GB is not available, so the snapshot will not be taken.

When you enable the fractional reserve, that makes sure that you can always write to the active file system of the LUN. However, it doesn't make sure that you can take another snapshot. Being able to actually write to the LUN is more important than being able to take snapshots.
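
The walkthrough with fractional reserve at 100% can be modelled as a toy Python class. Everything here is an assumption made for illustration: the `Volume` class and its method names are hypothetical, 1TB is treated as 1000GB, and the accounting follows the simplified numbers in the example rather than ONTAP's real space accounting. Overwrites are not modelled as consuming free space because the reserve already covers them.

```python
from dataclasses import dataclass

@dataclass
class Volume:
    size_gb: int
    lun_data_gb: int = 0
    other_data_gb: int = 0
    overwrite_reserve_gb: int = 0  # held back for snapshots (fractional reserve 100%)

    def free_gb(self) -> int:
        """Space not used by LUN data, other data, or the overwrite reserve."""
        return (self.size_gb - self.lun_data_gb
                - self.other_data_gb - self.overwrite_reserve_gb)

    def write_other(self, gb: int) -> bool:
        """Write non-LUN data; refused if it would eat into the reserve."""
        if gb > self.free_gb():
            return False
        self.other_data_gb += gb
        return True

    def take_snapshot(self, new_lun_data_gb: int) -> bool:
        """Reserve the snapshot's maximum potential size, or refuse the snapshot."""
        if new_lun_data_gb > self.free_gb():
            return False
        self.overwrite_reserve_gb += new_lun_data_gb
        return True

vol = Volume(size_gb=1000, lun_data_gb=400)
print(vol.take_snapshot(400))  # True: first snapshot reserves 400GB
print(vol.free_gb())           # 200: space left for other data
print(vol.write_other(300))    # False: more than the 200GB that is free
print(vol.write_other(200))    # True
print(vol.take_snapshot(300))  # False: no room to reserve 300GB for snapshot two
```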

Sizing

A 100% fractional reserve is not generally recommended because it requires significant additional space. If we took just two snapshots after writing 700GB of data to the volume, we would need to reserve 700GB of space just for the snapshots.

You can see that this takes up a lot of additional space in the volume, so it's not generally recommended. Snapshot Autodelete, optionally combined with Volume Autogrow, is the preferred method to prevent overwrites failing due to the volume becoming full.

Consider the earlier example without fractional reserve: we had that snapshot and wanted to do the overwrite. With Volume Autogrow enabled, the overwrite causes the volume to grow. If we had neither Autogrow nor Snapshot Autodelete enabled, the overwrite would fail.

If we do have Snapshot Autodelete enabled, then when we send overwrites to the LUN, it will delete the oldest snapshot as the volume fills up. We'll still be able to do the overwrites.
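
The oldest-first idea behind Snapshot Autodelete can be sketched as follows. This is a deliberately simplified illustration, not the ONTAP algorithm: the function name `autodelete_oldest_first` and the snapshot sizes are invented, and it assumes that deleting a snapshot frees exactly that snapshot's reported size.

```python
def autodelete_oldest_first(snapshots_gb, free_gb, needed_gb):
    """Delete snapshots oldest first until needed_gb is free.

    snapshots_gb is ordered oldest first. Returns (deleted, remaining, ok).
    """
    remaining = list(snapshots_gb)
    deleted = []
    while free_gb < needed_gb and remaining:
        oldest = remaining.pop(0)
        deleted.append(oldest)
        free_gb += oldest  # assumption: the whole snapshot size is released
    return deleted, remaining, free_gb >= needed_gb

# Three snapshots (oldest first), 20GB free, and an overwrite needing 200GB.
print(autodelete_oldest_first([150, 100, 50], free_gb=20, needed_gb=200))
# ([150, 100], [50], True)
```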

That does not require us to reserve all that extra space for the snapshots in the volume. That's generally the preferred way to do it rather than enabling the fractional reserve.

No matter how you configure thick or thin provisioning, space reservations, and fractional reserve on your volume and your LUN, you still need to size the volumes correctly to contain all data including a full snapshot rotation.

So if you're taking daily snapshots and keeping them for a week, figure out how much space they are going to take up. Also figure out how much space is required for the LUN. Add those together, and that is the size your volume needs to be.
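
As a rough sketch of that sizing sum, assuming you can estimate how much data changes per day (the function name `required_volume_gb` and the 500GB and 20GB figures below are made up for illustration):

```python
def required_volume_gb(lun_size_gb, daily_change_gb, snapshots_retained):
    """Estimate volume size as LUN size plus space for a full snapshot rotation."""
    # Assumption: each retained daily snapshot holds about one day's worth of changes.
    snapshot_space_gb = daily_change_gb * snapshots_retained
    return lun_size_gb + snapshot_space_gb

# A 500GB LUN with roughly 20GB changing per day and 7 daily snapshots kept.
print(required_volume_gb(lun_size_gb=500, daily_change_gb=20, snapshots_retained=7))  # 640
```

The change rate is the uncertain input here, so it is worth measuring it on the real workload before relying on the result.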

What these different settings do is let you optimize space efficiency while minimizing the risk of volumes or aggregates becoming full, but you should still size everything correctly in the first place.

Additional Resources

Enabling or Disabling Space Reservations for LUNs: https://library.netapp.com/ecmdocs/ECMP1196995/html/GUID-8A45E0EA-4221-4185-B201-37FF57BF3633.html

Fractional space reservation: https://library.netapp.com/ecmdocs/ECMP1649826/html/GUID-71F6449B-3FD7-4EEB-B927-C403CC58F469.html

About Fractional Space Reservation: https://library.netapp.com/ecmdocs/ECMP1217281/html/GUID-7DC46AD7-21C0-4738-AA82-BEE8A00AA33D.html

Libby Teofilo

Text by Libby Teofilo, Technical Writer at www.flackbox.com

Libby’s passion for technology drives her to constantly learn and share her insights. When she’s not immersed in the tech world, she’s either lost in a good book with a cup of coffee or out exploring on her next adventure. Always curious, always inspired.