In this Cisco CCNA tutorial, you’ll learn about Layer 4 of the OSI model, the Transport Layer.

Layer 4 - The Transport Layer

The Transport Layer provides transparent transfer of data between hosts and is responsible for end-to-end error recovery and flow control. Flow control is the process of adjusting the flow of data from the sender to ensure that the receiving host can handle all of it.

Suppose the sender is transmitting faster than the receiving host can accept, maybe because it has a faster network connection on its side. If flow control is enabled, the receiving host has a mechanism to signal back to the sender, telling it to slow down.
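To make the idea concrete, here is a minimal sketch of flow control in Python. It is a toy model, not a real protocol implementation: the function name, the buffer size, and the "drain per round" figure are all assumptions made for illustration. In each round the receiver advertises how much free buffer space it has, and the sender never sends more than that.

```python
def simulate_flow_control(total_bytes, buffer_size, drain_per_round):
    """Return how many bytes the sender transmits in each round (toy model)."""
    if buffer_size <= 0 or drain_per_round <= 0:
        raise ValueError("buffer_size and drain_per_round must be positive")

    buffered = 0              # bytes waiting in the receiver's buffer
    remaining = total_bytes   # bytes the sender still has to send
    sent_per_round = []

    while remaining > 0:
        window = buffer_size - buffered      # free space the receiver advertises
        to_send = min(window, remaining)     # sender respects the advertised window
        buffered += to_send
        remaining -= to_send
        sent_per_round.append(to_send)
        buffered -= min(buffered, drain_per_round)  # receiving application reads data

    return sent_per_round


print(simulate_flow_control(total_bytes=10, buffer_size=4, drain_per_round=2))
```

After the first round the sender is throttled to whatever the receiver can drain, which is the "slow down" signal described above.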

Session Multiplexing

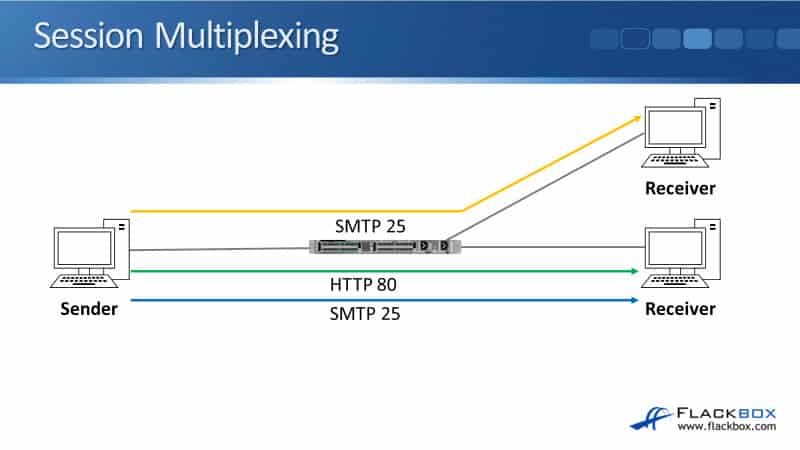

Another thing that is supported in Layer 4 is session multiplexing. This is a process by which a host is able to support multiple sessions simultaneously and manage the individual traffic streams over a single link.

In the example here, I've got a sender on the left and a couple of receivers on the right. The sender sends some SMTP email traffic to the top receiver on port 25, and it sends some HTTP web traffic on port 80 to the bottom receiver. It's also sending email traffic on port 25 to the bottom receiver.

You can see that the sender on the left has three sessions. The top receiver on the right has one session, and the bottom receiver has two. It's Layer 4, the Transport Layer, that is responsible for tracking and keeping control of the different sessions on our host.
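The session counts in this example can be sketched in a few lines of Python. The host names below are made up for illustration, and a real host would track far more detail per session than this.

```python
from collections import Counter

# (source host, destination host, destination port); host names are hypothetical.
SESSIONS = [
    ("sender", "receiver-top", 25),      # SMTP email to the top receiver
    ("sender", "receiver-bottom", 80),   # HTTP web traffic to the bottom receiver
    ("sender", "receiver-bottom", 25),   # SMTP email to the bottom receiver
]


def sessions_per_host(sessions):
    """Count how many sessions each host takes part in."""
    counts = Counter()
    for src, dst, _port in sessions:
        counts[src] += 1
        counts[dst] += 1
    return counts


for host, count in sessions_per_host(SESSIONS).items():
    print(host, count)
```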

Layer 4 Port Numbers

We have port numbers. In our example, we've got two sessions going from the sender on the left to the bottom receiver on the right. One of them is web traffic. The other session is email traffic. So when the traffic comes into the receiver, how does it know which application this traffic is for? Is it for its web server application, or is it for its email server application?

The way it knows is with the Layer 4 port numbers. For example, HTTP web traffic uses port 80. SMTP email uses port 25. The sender adds a source port number to the Layer 4 header as well. The combination of source and destination port numbers can be used to track sessions.
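As a rough sketch of that lookup, the receiver can be pictured as holding a table from destination port to application. The table and function names here are assumptions for illustration; a real operating system does this inside its network stack.

```python
# Hypothetical table of applications listening on the receiver.
LISTENERS = {
    80: "web server",
    25: "email server",
}


def deliver(dst_port):
    """Pick the application that should receive traffic for this destination port."""
    return LISTENERS.get(dst_port, "no application listening")


print(deliver(80))   # web traffic
print(deliver(25))   # email traffic
```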

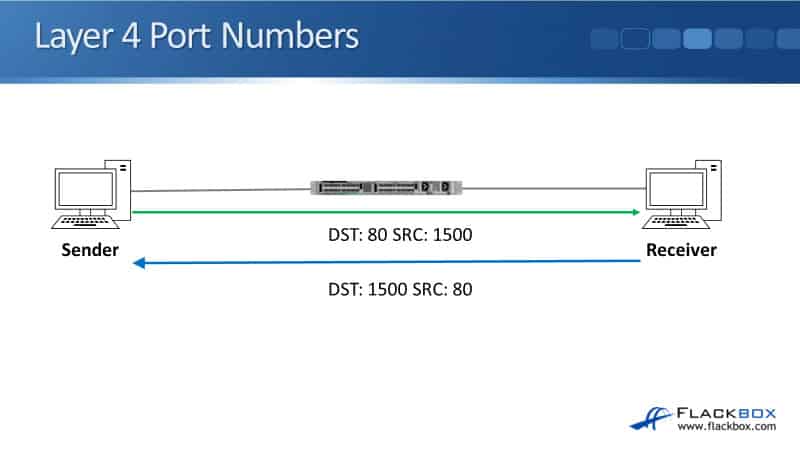

Here, we've just got one sender on the left, a receiver on the right, and we're sending web traffic. The sender sends it with a destination port of 80, the standard port for web traffic, and it will use a random source port number above 1024.

In our example, it's using source port 1500. When the receiver sends traffic back, it will flip the source and destination port numbers around. So, it will use port 80 as its source now, and the destination will be port number 1500.
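Here is a small Python sketch of that port flip, using the port numbers from the example. The `Segment` type and `reply_to` function are hypothetical names used only for illustration.

```python
from typing import NamedTuple


class Segment(NamedTuple):
    src_port: int
    dst_port: int


def reply_to(segment):
    """Build the return segment by swapping source and destination ports."""
    return Segment(src_port=segment.dst_port, dst_port=segment.src_port)


request = Segment(src_port=1500, dst_port=80)
print(reply_to(request))
```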

This is how stateful firewalls are able to keep track of connections as well. Imagine that rather than a switch in the middle there, we have a firewall with a rule that says traffic is allowed from the sender on the left out to the network on the right, but traffic is not allowed from the right to the left unless it was initiated by the sender.

In that case, on the firewall, we're allowing traffic from the sender to the receiver. That traffic is allowed outbound. When the return traffic comes back, the firewall can see, based on the source and destination port numbers, "Oh, this is return traffic going back to that sender again, so I'll allow this traffic to come through." If the traffic had been initiated by the host on the right, it would not allow that traffic.
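The firewall behaviour described here can be sketched as a connection table. This is a simplified model, not how any particular firewall product is implemented: the class name, method names, and IP addresses are assumptions, and real firewalls also track protocol, TCP state, and timeouts.

```python
class StatefulFirewall:
    """Toy stateful firewall: outbound is allowed, inbound only as return traffic."""

    def __init__(self):
        self._connections = set()

    def outbound(self, src_ip, src_port, dst_ip, dst_port):
        # Remember the session so return traffic can be matched later.
        self._connections.add((src_ip, src_port, dst_ip, dst_port))
        return True

    def inbound(self, src_ip, src_port, dst_ip, dst_port):
        # Return traffic has the addresses and ports flipped around.
        return (dst_ip, dst_port, src_ip, src_port) in self._connections


fw = StatefulFirewall()
fw.outbound("10.0.0.1", 1500, "203.0.113.10", 80)
print(fw.inbound("203.0.113.10", 80, "10.0.0.1", 1500))   # return traffic
print(fw.inbound("203.0.113.10", 80, "10.0.0.1", 1600))   # not initiated by the sender
```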

TCP

Our two most common protocols at Layer 4 are TCP, which is the Transmission Control Protocol, and UDP, which is the User Datagram Protocol. TCP is connection-oriented. Once a connection is established, data can be sent bidirectionally between the two hosts over that connection.

TCP carries out sequencing. It includes sequence numbers in its segments to ensure that they are processed in the correct order and none are missing. When traffic comes into the receiver, it can look at the sequence numbers and use them to reassemble the traffic in the correct order. It can also tell from the sequence numbers whether a segment was lost in transit.
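A simplified Python sketch of sequencing follows. It numbers whole segments 0, 1, 2 and so on, which is an assumption made to keep the example short; real TCP sequence numbers count bytes. The function name is hypothetical.

```python
def reassemble(received, expected_count):
    """Put segments back in order and report which sequence numbers are missing.

    received: list of (sequence_number, payload) pairs, in arrival order.
    Returns (in-order data up to the first gap, list of missing sequence numbers).
    """
    by_seq = dict(received)
    missing = [seq for seq in range(expected_count) if seq not in by_seq]

    in_order = []
    for seq in range(expected_count):
        if seq not in by_seq:
            break                 # stop at the first gap
        in_order.append(by_seq[seq])

    return b"".join(in_order), missing


# Segments arrive out of order, but the sequence numbers let us fix that.
print(reassemble([(2, b"C"), (0, b"A"), (1, b"B")], expected_count=3))
# Segment 1 was lost in transit.
print(reassemble([(0, b"A"), (2, b"C")], expected_count=3))
```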

TCP is reliable: the receiving host sends acknowledgments back to the sender. Based on the sequence numbers, the receiver can see if all the traffic has come in. If any traffic is lost in transit, the sender will find out.

The way it finds out is that the receiver does not send an acknowledgment for the missing traffic. When the sender realizes that traffic has been lost, it resends it. TCP can also perform flow control. So if a sender is sending at a rate that is too high for the receiver to handle, the receiver can signal back to the sender, telling it to slow down.
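The resend behaviour can be sketched as a simple loop in Python. This is a toy stop-and-wait model with made-up names, not the real TCP algorithm, which uses timers and can have many segments in flight at once. The `dropped` set is an assumption that lets us decide in advance which transmissions get lost.

```python
def send_reliably(segments, dropped, max_attempts=5):
    """Send each segment, resending until it is acknowledged.

    dropped: set of (sequence_number, attempt) transmissions lost in transit.
    Returns a log of every (sequence_number, attempt) that was transmitted.
    """
    log = []
    for seq in range(len(segments)):
        for attempt in range(1, max_attempts + 1):
            log.append((seq, attempt))
            if (seq, attempt) not in dropped:
                break             # acknowledgment came back, move on
            # No acknowledgment: the sender assumes loss and resends.
        else:
            raise TimeoutError(f"segment {seq} was never acknowledged")
    return log


# The first attempt at segment 1 is lost, so it is sent twice.
print(send_reliably([b"a", b"b"], dropped={(1, 1)}))
```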

The TCP Three-Way Handshake

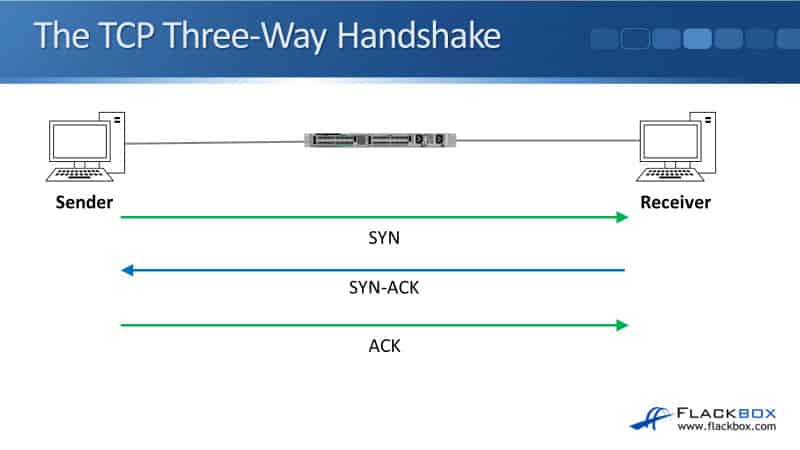

The connection between the two hosts is set up using the TCP three-way handshake. So here we've got the sender on the left, who's going to initiate the connection. It sends a SYN, a synchronize message, over to the receiver on the right.

When the receiver receives it, it will send a SYN-ACK back, so a synchronize-acknowledgment. And then finally, to complete the connection, the sender will send an acknowledgment, ACK. We now have the connection set up between the two hosts and we can send traffic over it.
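The three steps can be written out as a tiny state walk-through in Python. The state names (SYN-SENT, SYN-RECEIVED, ESTABLISHED) are the ones used in the TCP specification; the function itself is a hypothetical illustration and leaves out the sequence numbers that are exchanged during a real handshake.

```python
def three_way_handshake():
    """Walk through the handshake and return (messages, sender state, receiver state)."""
    sender_state, receiver_state = "CLOSED", "LISTEN"
    messages = []

    # Step 1: the sender initiates the connection.
    messages.append(("sender", "SYN"))
    sender_state = "SYN-SENT"

    # Step 2: the receiver acknowledges and synchronizes in the other direction.
    messages.append(("receiver", "SYN-ACK"))
    receiver_state = "SYN-RECEIVED"

    # Step 3: the sender acknowledges, and the connection is up on both sides.
    messages.append(("sender", "ACK"))
    sender_state = receiver_state = "ESTABLISHED"

    return messages, sender_state, receiver_state


for who, message in three_way_handshake()[0]:
    print(who, "sends", message)
```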

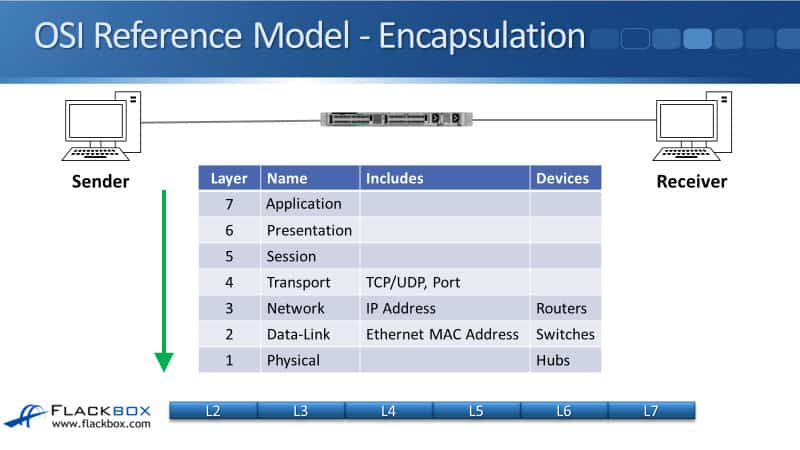

OSI Reference Model - Encapsulation

Here, we've got the sender on the left, the receiver on the right, and we're going to send some traffic over. First off, as the sender is composing the packet, it will put in the Layer 7 information. It will then encapsulate that with the Layer 6 header.

It then gets encapsulated with the Layer 5 header, then Layer 4 header, then Layer 3 header, Layer 2 header, and then we send it onto the physical wire at Layer 1.
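As a rough picture of that wrapping order, here is a Python sketch that prepends one placeholder header per layer. The bracketed header strings are stand-ins, not real header formats.

```python
def encapsulate(layer7_data):
    """Wrap Layer 7 data in placeholder headers for Layers 6 down to 2."""
    unit = layer7_data
    for layer in (6, 5, 4, 3, 2):
        unit = f"[L{layer} hdr]" + unit   # each lower layer adds its header in front
    return unit


print(encapsulate("L7 data"))
```

The Layer 2 header ends up outermost, which is why it is the first thing the receiver reads when the data comes off the wire.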

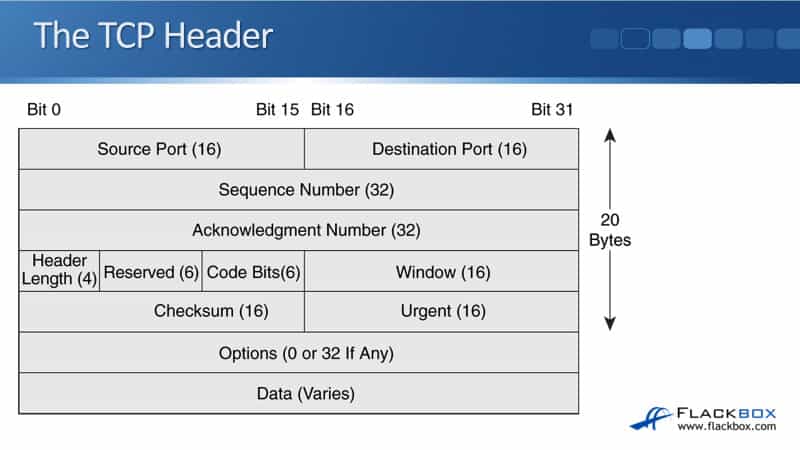

The TCP Header

We've got the source port and the destination port numbers as we spoke about earlier. We then have a sequence number and the acknowledgment number. We have a header length, a reserved field that is set aside for future use, code bits (the flags, such as SYN and ACK), and a window field, which is used for flow control.

We've also got a checksum, which can be used to check whether the traffic got corrupted in transit. There's an urgent pointer field as well, which only matters when the urgent flag is set. We can also add other options. Finally, we've got the data.
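To see how those fields sit in the header, here is a Python sketch that packs and parses the fixed 20-byte part of a TCP header with the standard `struct` module. It ignores options, the dictionary keys are names chosen for this example, and the sample values (ports 1500 and 80, a zero checksum) are illustrative rather than a valid captured segment.

```python
import struct

# Source port, destination port, sequence number, acknowledgment number,
# header length + reserved bits, code bits (flags), window, checksum, urgent pointer.
TCP_HEADER = struct.Struct("!HHIIBBHHH")

FLAG_SYN = 0x02
FLAG_ACK = 0x10


def parse_tcp_header(raw):
    """Decode the fixed part of a TCP header into a dictionary."""
    (src, dst, seq, ack, offset_byte, flags,
     window, checksum, urgent) = TCP_HEADER.unpack(raw[:TCP_HEADER.size])
    return {
        "src_port": src,
        "dst_port": dst,
        "seq": seq,
        "ack": ack,
        "header_len": (offset_byte >> 4) * 4,   # stored as a count of 32-bit words
        "syn": bool(flags & FLAG_SYN),
        "ack_flag": bool(flags & FLAG_ACK),
        "window": window,
        "checksum": checksum,
        "urgent_pointer": urgent,
    }


# A made-up SYN from source port 1500 to destination port 80.
sample = TCP_HEADER.pack(1500, 80, 1000, 0, 5 << 4, FLAG_SYN, 65535, 0, 0)
print(parse_tcp_header(sample))
```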

UDP

UDP is the User Datagram Protocol and it sends traffic best-effort, meaning we don't have a connection and we don't have reliability. The sender just makes up the packet, sends it over to the receiver, and hopes that it's going to get there. UDP is not connection-oriented. There's no handshake to set up a connection between the hosts.

It doesn't carry out sequencing to ensure segments are processed in the correct order or to detect missing ones. It's not reliable. The receiving host does not send acknowledgments back to the sender and it does not perform flow control.

I just said that the sender will send the traffic and hope it gets there. We can still have error detection and recovery for this traffic, but if it is required, it's going to be up to the upper layers, at the application level, to provide it. It's not going to be provided by UDP.

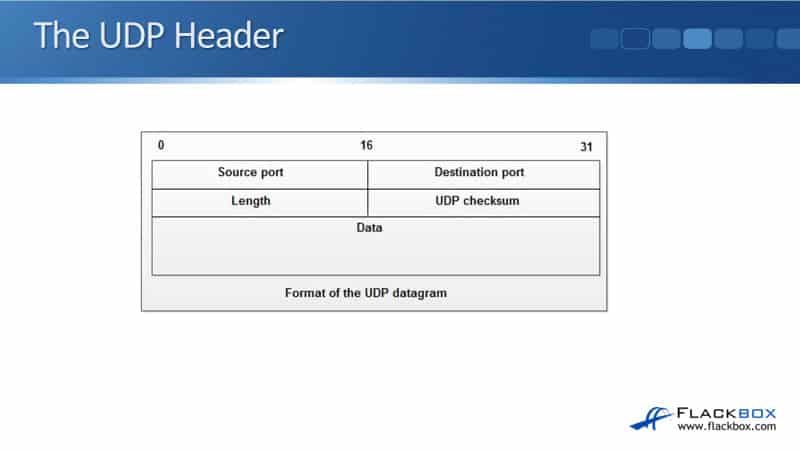

The UDP Header

Looking at the UDP header, you'll see there are far fewer fields in here. All we have is the source and destination port, the length, a UDP checksum, and the data. Comparing the UDP header and the TCP header, there's much less overhead with UDP, which leads us to where TCP or UDP would be used.
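The four UDP header fields fit in 8 bytes, compared with at least 20 bytes for a TCP header. Here is a Python sketch that builds a datagram with the standard `struct` module. The function name and port numbers are chosen for illustration, and the checksum is left at zero for simplicity (in IPv4, zero means no checksum was computed).

```python
import struct

# Source port, destination port, length, checksum: four 16-bit fields.
UDP_HEADER = struct.Struct("!HHHH")


def build_udp_datagram(src_port, dst_port, payload):
    """Prepend a UDP header to the payload (checksum left at 0 in this sketch)."""
    length = UDP_HEADER.size + len(payload)   # length covers header plus data
    return UDP_HEADER.pack(src_port, dst_port, length, 0) + payload


datagram = build_udp_datagram(1500, 69, b"hello")
print(UDP_HEADER.size, len(datagram))
print(UDP_HEADER.unpack(datagram[:UDP_HEADER.size]))
```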

TCP vs UDP

Whenever a designer designs an application, they can choose whether it's going to use TCP or UDP for its transport. They will typically choose to use TCP for traffic which requires reliability, but real-time applications such as voice and video can't afford that extra overhead of TCP, so they would use UDP.
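In code, that choice often comes down to a single argument when the application opens its socket. The sketch below uses Python's standard `socket` module, where `SOCK_STREAM` selects TCP and `SOCK_DGRAM` selects UDP for an IPv4 socket. The helper function and its `reliable` parameter are hypothetical names for this example.

```python
import socket


def open_transport(reliable):
    """Open a TCP socket if reliability is needed, otherwise a UDP socket."""
    kind = socket.SOCK_STREAM if reliable else socket.SOCK_DGRAM
    return socket.socket(socket.AF_INET, kind)


file_transfer = open_transport(reliable=True)    # TCP
voice_stream = open_transport(reliable=False)    # UDP
print(file_transfer.type, voice_stream.type)
file_transfer.close()
voice_stream.close()
```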

Voice and video are very sensitive to delay. You've probably seen a news report on TV where the newscaster was reporting over a satellite phone, and you could see it was very laggy because satellites are famously high-latency connections.

We don't want to use TCP for real-time traffic like that because the extra overhead is going to slow it down and it's going to affect the quality. So for real-time traffic that's sensitive to delay, we'll usually use UDP. For other applications, we'll use TCP.

There are many more applications other than voice and video, so TCP is the most commonly used Layer 4 transport. There are also some applications that can use both TCP and UDP.

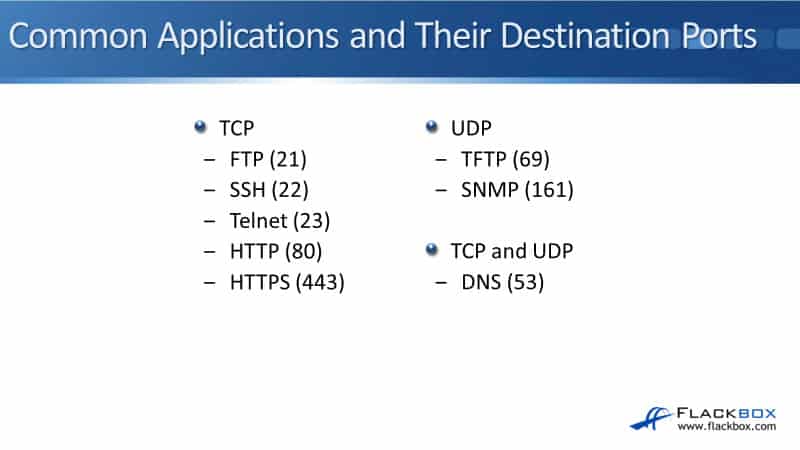

Common Applications and Their Destination Ports

For applications that use TCP, we've got FTP, the File Transfer Protocol, which uses port 21 for its control connection. Secure Shell is on port 22, Telnet is on port 23, HTTP web traffic is on port 80, and HTTPS encrypted web traffic is on port 443.

For UDP protocols, we've got TFTP, the Trivial File Transfer Protocol, which uses port 69. SNMP, the Simple Network Management Protocol, uses port 161. The best-known application that can use both TCP and UDP is DNS on port 53. Some voice and video signaling protocols can also use both TCP and UDP.
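For quick revision, the ports listed above can be collected into a small Python lookup table. The table and function names are made up for this example, and it only covers the applications mentioned in this section.

```python
# Application name -> (destination port, transports it uses).
WELL_KNOWN_PORTS = {
    "FTP": (21, {"tcp"}),
    "SSH": (22, {"tcp"}),
    "Telnet": (23, {"tcp"}),
    "HTTP": (80, {"tcp"}),
    "HTTPS": (443, {"tcp"}),
    "TFTP": (69, {"udp"}),
    "SNMP": (161, {"udp"}),
    "DNS": (53, {"tcp", "udp"}),
}


def apps_using(transport):
    """List the applications in the table that can run over the given transport."""
    return sorted(
        name for name, (_port, transports) in WELL_KNOWN_PORTS.items()
        if transport in transports
    )


print(apps_using("udp"))
print(WELL_KNOWN_PORTS["DNS"])
```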

Text by Libby Teofilo, Technical Writer at www.flackbox.com