In this NetApp training tutorial, you’ll learn about NVMe over Fabrics (NVMe-oF) in ONTAP. Compared to the other storage protocols, NVMe is very new. You can check out the tutorial on it in my Intro to SAN and NAS course. Scroll down for the video and the text tutorial.

NetApp NVMe-oF Video Tutorial

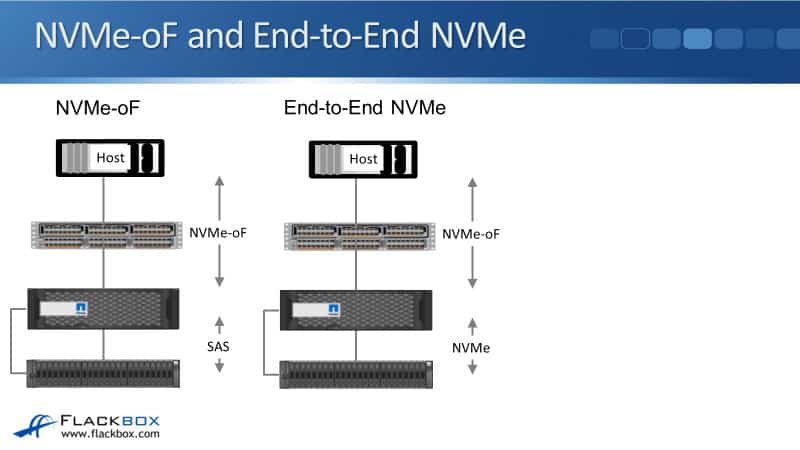

NVMe-oF and End-to-End NVMe

First, let's talk about the difference between NVMe-oF and end-to-end NVMe. In the example diagram below, we've got the host and then a network infrastructure connecting that to the storage. We're using NVMe over Fabrics as the protocol between the host and the storage system over the network.

On the left, once the traffic reaches the storage system, the connection from the controller to the disks uses traditional SAS. For this, you need an AFF system running ONTAP 9.4 or later.

The other thing we can do in ONTAP is end-to-end NVMe. With end-to-end NVMe, we're still using NVMe-oF over the network from the host to the storage system, but the disk subsystem is also using NVMe. That gives you the best performance.

End-to-end NVMe is supported in ONTAP as well, but you need a controller platform that supports NVMe disks. Right now, as I'm recording this, that's just the AFF A800, but I expect that NetApp will roll it out across the rest of the platforms in the future.
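The distinction above can be sketched as a small classifier. This is only an illustrative model, not an ONTAP API: the `StoragePath` class, its field names, and the returned labels are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoragePath:
    """Hypothetical model of one I/O path, for illustration only."""
    host_protocol: str   # protocol between the host and the storage system
    disk_interface: str  # interface between the controller and its disks


def classify(path: StoragePath) -> str:
    """Label a path using the two cases described in this tutorial."""
    uses_nvmeof = path.host_protocol.upper().startswith("NVME")
    uses_nvme_disks = path.disk_interface.upper() == "NVME"
    if uses_nvmeof and uses_nvme_disks:
        return "end-to-end NVMe"
    if uses_nvmeof:
        return "NVMe-oF front end only"
    return "no NVMe-oF"


print(classify(StoragePath("NVMe/FC", "SAS")))   # NVMe-oF front end only
print(classify(StoragePath("NVMe/FC", "NVMe")))  # end-to-end NVMe
```

The point of the sketch is that NVMe-oF describes only the host-to-storage leg. Whether the setup is end-to-end NVMe depends on the disk side as well.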

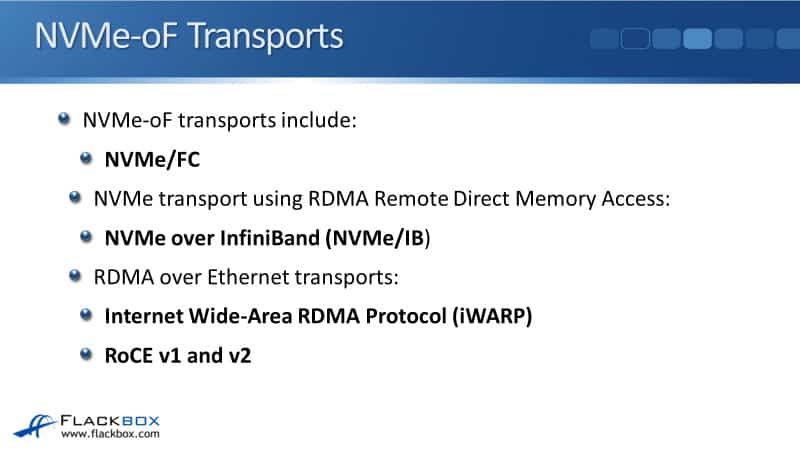

NVMe-oF Transports

NVMe-oF is the transport between the host and the storage system. There are different options that you can use for the network infrastructure for this such as:

- NVMe over Fiber Channel (NVMe/FC)

- NVMe transport over Remote Direct Memory Access (RDMA)

  - NVMe over InfiniBand

  - RDMA over Ethernet transports

    - iWARP

    - RoCE v1 and v2

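The main difference between RoCE v1 and v2 is routability. RoCE v1 is a link-layer protocol and is not routable, so the host and the storage system need to be in the same Layer 2 network (in practice, the same IP subnet). RoCE v2 runs over UDP/IP, so it is routable and the host and storage system can be in different IP subnets.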
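As a rough illustration of that rule, the sketch below checks whether two interfaces share an IP subnet and lists which RoCE versions could connect them. It uses Python's standard `ipaddress` module. The function names and the example addresses are hypothetical, and treating "same IP subnet" as the test for RoCE v1 is a simplification that follows the wording of this tutorial.

```python
import ipaddress


def same_subnet(host_if: str, target_if: str) -> bool:
    """True if both interface addresses (in CIDR notation) are in one IP subnet."""
    host = ipaddress.ip_interface(host_if)
    target = ipaddress.ip_interface(target_if)
    return host.network == target.network


def usable_roce_versions(host_if: str, target_if: str) -> list:
    """RoCE v1 is not routable, so it only works within a single subnet."""
    if same_subnet(host_if, target_if):
        return ["RoCE v1", "RoCE v2"]
    return ["RoCE v2"]


# Example addresses are made up for illustration.
print(usable_roce_versions("10.0.1.10/24", "10.0.1.20/24"))  # ['RoCE v1', 'RoCE v2']
print(usable_roce_versions("10.0.1.10/24", "10.0.2.20/24"))  # ['RoCE v2']
```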

Those are all of the different transports that are available, in theory, across the industry as a whole, but they're not all supported in ONTAP.

NVMe/FC

Now, let's look at what is supported in ONTAP, and that is NVMe over Fiber Channel (NVMe/FC). The big benefit of NVMe over Fiber Channel, and the reason that it is the first transport ONTAP supports, is that it can be used over a standard Fiber Channel infrastructure.

If you're an ONTAP customer looking to move towards NVMe, there's a good chance that you already have a Fiber Channel infrastructure in place.

When you use NVMe/FC, you can use the existing HBAs, switches, zones, targets and cabling. If you've already got an existing Fiber Channel infrastructure, you can use that for NVMe/FC without having to buy any additional hardware.

For the other transport options, that is not the case; you do need specialist hardware for those. Because NVMe/FC can use the existing Fiber Channel infrastructure, it is the transport most likely to give companies the easiest and least expensive transition. That's why NetApp went with it.

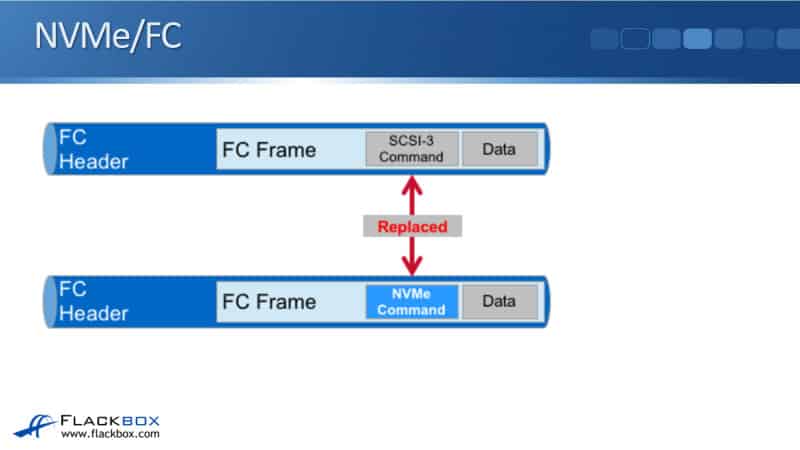

You can see here why you can use your existing Fiber Channel infrastructure. The actual Fiber Channel frame is very similar between the traditional Fiber Channel and NVMe/FC.

You can see that with both of them, we've got the FC header, the frame, and the data. The difference is that traditional Fiber Channel is using the old SCSI-3 command set. With NVMe over FC, it's using the NVMe commands.
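To make the comparison concrete, here is a toy model of the two frames. It is a sketch under stated assumptions, not the real Fiber Channel frame layout: the `FCFrame` class and its three fields are hypothetical simplifications of the header, command set, and data described above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class FCFrame:
    """Simplified, hypothetical view of a Fiber Channel frame."""
    header: str       # FC header, handled the same way by the fabric in both cases
    command_set: str  # what the frame carries: "SCSI" or "NVMe"
    data: bytes       # the data being read or written


def differing_fields(a: FCFrame, b: FCFrame) -> list:
    """Return the names of the fields whose values differ between two frames."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


scsi_frame = FCFrame(header="FC header", command_set="SCSI", data=b"payload")
nvme_frame = FCFrame(header="FC header", command_set="NVMe", data=b"payload")

print(differing_fields(scsi_frame, nvme_frame))  # ['command_set']
```

In this model only the command set changes, which is the reason the same switches and cabling can carry both kinds of traffic.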

You might look at this and think, "Well, what's the big deal? Is that going to make much difference?" Well, yes, it will, because the SCSI command set has been around for decades. It's very old, and it was designed for the technologies that were available at the time.

NVMe has been designed to work with modern high-speed technologies and SSDs. It's much more efficient and delivers higher performance, so you will see performance improvements by using NVMe/FC over traditional Fiber Channel or the other storage protocols.

NVMe/FC ONTAP Version Support

As I said, NVMe/FC is the first transport supported in ONTAP. It has been available since ONTAP 9.4 on AFF systems. There was a pretty big drawback in ONTAP 9.4, though: high availability was not supported, so you had no redundancy.

That limitation was overcome in ONTAP 9.5, which makes NVMe/FC a much more feasible option. However, there's still not a huge uptake because there's currently limited support in the client operating systems.

Obviously, if your client's operating system doesn't support it, then you're not going to be able to use it. But just as I'm expecting NVMe to have wider support across the ONTAP platforms in the future, you should also see much more widespread support in the different client operating systems as time goes on.
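The version rules above can be summarised in a few lines of code. This is a minimal sketch based only on what this tutorial states (NVMe/FC from ONTAP 9.4 on AFF, high availability from 9.5). The function names are hypothetical, it assumes plain numeric version strings such as "9.5", and it assumes later releases keep the same support.

```python
def parse_version(version: str) -> tuple:
    """Turn '9.5' into (9, 5) so that 9.10 compares as newer than 9.4."""
    return tuple(int(part) for part in version.split("."))


def nvme_fc_support(ontap_version: str, is_aff: bool) -> dict:
    """Report NVMe/FC and HA support according to the rules in this tutorial."""
    version = parse_version(ontap_version)
    supported = is_aff and version >= (9, 4)
    return {"nvme_fc": supported, "ha": supported and version >= (9, 5)}


print(nvme_fc_support("9.4", is_aff=True))  # {'nvme_fc': True, 'ha': False}
print(nvme_fc_support("9.5", is_aff=True))  # {'nvme_fc': True, 'ha': True}
```

Comparing versions as tuples of integers rather than as strings avoids the classic mistake where "9.10" sorts before "9.4".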

NVMe/FC LIFs

Here are some details about how NVMe/FC works in ONTAP. NVMe/FC and traditional Fiber Channel use separate LIFs, but the LIFs can be homed on the same physical port. This is another thing that makes the transition a lot easier.

If you are using Fiber Channel currently and you want to migrate to NVMe/FC, you can use your existing Fiber Channel infrastructure, and you can also use the same ports for both protocols concurrently.

You can keep using Fiber Channel on the same physical ports and then enable them for NVMe/FC, which makes the transition very easy. Only one NVMe LIF per SVM is supported in ONTAP 9.4. Again, ONTAP 9.4 did not support redundancy.

In ONTAP 9.5, you must configure at least one NVMe LIF for each node in an HA pair that uses NVMe, and you can create up to two NVMe LIFs per node if you need the extra bandwidth. So, redundancy is supported in ONTAP 9.5.
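These LIF rules lend themselves to a quick planning check. The sketch below is not an ONTAP tool: `check_nvme_lifs` and the node names are hypothetical, the input is the planned number of NVMe LIFs per node for one SVM, and the limits are only the ones stated in this tutorial for ONTAP 9.4 and 9.5.

```python
def check_nvme_lifs(ontap_version: str, lifs_per_node: dict) -> list:
    """Return a list of problems; an empty list means the plan fits the rules."""
    version = tuple(int(part) for part in ontap_version.split("."))[:2]
    if version < (9, 4):
        return ["NVMe/FC requires ONTAP 9.4 or later"]

    problems = []
    if version == (9, 4):
        # ONTAP 9.4: a single NVMe LIF per SVM, so no redundancy.
        if sum(lifs_per_node.values()) != 1:
            problems.append("ONTAP 9.4 supports only one NVMe LIF per SVM")
        return problems

    # ONTAP 9.5 rules as described above: 1 to 2 NVMe LIFs on each node.
    for node, count in lifs_per_node.items():
        if count < 1:
            problems.append(f"{node}: needs at least one NVMe LIF")
        elif count > 2:
            problems.append(f"{node}: at most two NVMe LIFs per node")
    return problems


print(check_nvme_lifs("9.5", {"node1": 1, "node2": 1}))  # []
print(check_nvme_lifs("9.5", {"node1": 0, "node2": 3}))
```

The second call reports one problem for each node, because node1 has no NVMe LIF and node2 has more than two.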

Additional Resources

NetApp AFF A800 NVMeOF Review: https://www.storagereview.com/review/netapp-aff-a800-nvmeof-review

When You’re Implementing NVMe Over Fabrics, the Fabric Really Matters: https://blog.netapp.com/nvme-over-fabric/

Libby Teofilo

Text by Libby Teofilo, Technical Writer at www.flackbox.com
