In this Cisco CCNA training tutorial, I’m going to give you an overview of Quality of Service (QoS). When people talk about QoS in general, they’re usually referring to queuing.

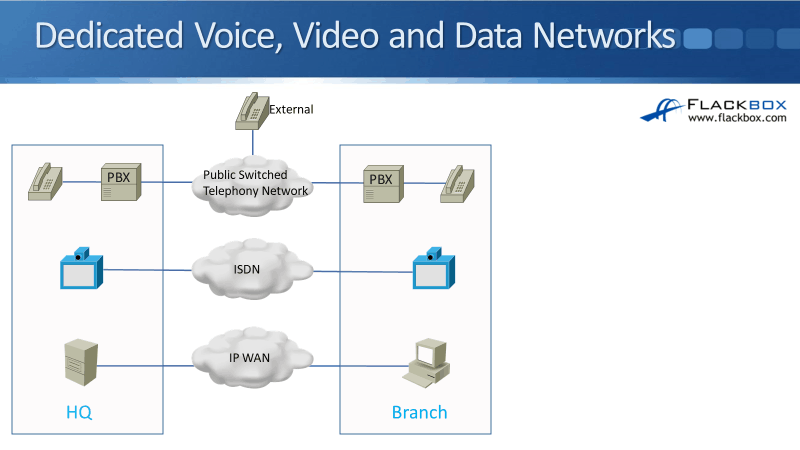

Dedicated Voice, Video, and Data Networks

The original driver for QoS was Voice over IP (VoIP). Quality of service became necessary because back in the day (and in some networks today as well), there were dedicated voice, video, and data networks.

Some companies might not have video, but for sure they would have data and voice.

- For their data network, they would have a standard IP WAN.

- For their phone network, they would be connected to the local telephone provider. They would have phones on their desks, in their offices, and there would usually be a PBX in the office, connected to the telephone company, which is used to control the phones.

- For video calls, if they had video endpoints, those would typically be connected over an Integrated Services Digital Network (ISDN) provided by the phone company.

In the diagram above, the three networks for voice, video, and data are completely and physically separate from each other:

- The data network is dedicated for the data

- The ISDN network is dedicated for video

- The phone network, the public switched telephone network (PSTN) with the telco, is dedicated for voice calls

If there's a problem with one of the networks, it's not going to affect the other two.

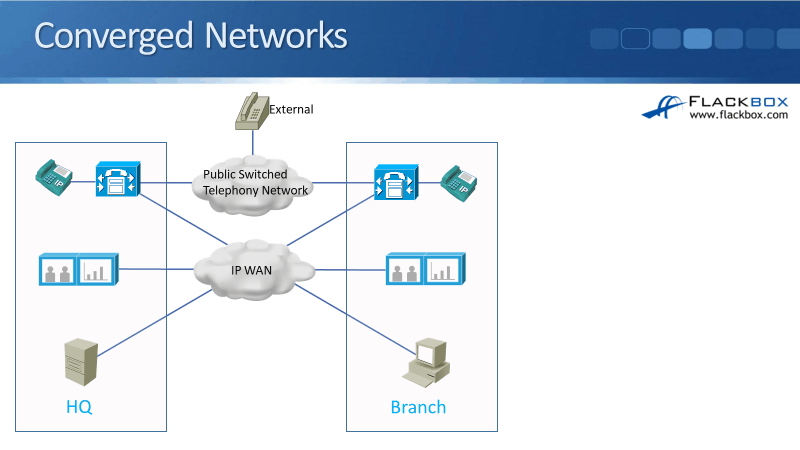

Converged Networks

Now, what you'll find most often in modern networks are converged networks. The company is running voice, video, and data all over the same underlying physical network infrastructure.

In the diagram below, we've got IP phones on the desks and they're going to be connected to an IP PBX, like the Cisco Unified Communications Manager.

We've got IP video endpoints as well, and we've got our standard IP servers and workstations. Everything is connected to the same underlying network which is running over an IP infrastructure.

In the dedicated networks, we were able to call external people like customers and suppliers through the phone company. In converged networks, everything is on the company's corporate IP WAN, so they can send data, voice, and video, and make phone calls between offices.

But to be able to call customers or suppliers, we're going to still need to be connected to the local phone company as well.

For that connection, it could be using:

- Traditional phone connection - voice E1 or T1 connection

- Modern phone providers - over IP, using a Session Initiation Protocol (SIP) connection

What’s important here is that the voice, the video, and the data are all running over that same shared network infrastructure between the different offices.

Traditional vs Converged Networks

On old traditional networks, data, voice, and video had their own physically separate network infrastructure, and they did not impact each other. If there's a problem with the data network, it is not going to affect the voice network. People can still make phone calls normally.

On modern networks, data, voice, and video run over the same shared physical infrastructure. The reason that companies do that now is that it enables cost savings. Rather than having three separate networks, you can run everything over the same shared network. It enables advanced features for voice and video as well.

For example, modern video endpoints can integrate with other collaboration software. They can be used in shared presentations over WebEx, and they can also integrate with call centers, etc.

This gives lower costs and more features, but a potential problem is that data, voice, and video are all fighting for the same shared bandwidth on the same shared physical network.

Quality Requirements for Video and Voice

Voice and video have quality requirements. For voice and traditional standard definition video packets, the recommended requirements for an acceptable quality call are:

- Latency or delay - up to 150 milliseconds

- Jitter or variation in delay - no more than 30 milliseconds

- Packet loss - no more than 1%

Those are one-way requirements: a packet sent from a phone in the HQ has 150 milliseconds to reach the phone in the branch, and vice versa.
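As a minimal sketch, the three targets above can be written as a simple check. The `PathMeasurement` class, the `failed_requirements` function, and the sample numbers are hypothetical and only for illustration; they are not taken from any Cisco tool.

```python
from dataclasses import dataclass

# One-way targets quoted above for voice and standard definition video.
MAX_LATENCY_MS = 150.0
MAX_JITTER_MS = 30.0
MAX_LOSS_PERCENT = 1.0


@dataclass
class PathMeasurement:
    """Hypothetical one-way measurements for a single call direction."""
    latency_ms: float
    jitter_ms: float
    loss_percent: float


def failed_requirements(m: PathMeasurement) -> list[str]:
    """Return the names of the requirements this measurement does not meet."""
    failures = []
    if m.latency_ms > MAX_LATENCY_MS:
        failures.append("latency")
    if m.jitter_ms > MAX_JITTER_MS:
        failures.append("jitter")
    if m.loss_percent > MAX_LOSS_PERCENT:
        failures.append("loss")
    return failures


# Made-up sample: latency and loss are fine, jitter is too high.
hq_to_branch = PathMeasurement(latency_ms=120.0, jitter_ms=45.0, loss_percent=0.4)
print(failed_requirements(hq_to_branch))  # ['jitter']
```

Because the requirements are one-way, you would run the same check separately for the branch to HQ direction.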

In the converged network diagram, we've got our phones in the HQ. If I make a phone call from the phone in the HQ to the phone in the branch, my spoken voice is carried inside the packets coming from the HQ phone.

The packets have 150 milliseconds to make it to the phone in the branch. Also, the jitter should be no more than 30 milliseconds. Jitter is variation in delay. For example, we've got multiple packets going from the HQ phone to the branch phone. If the delay of the first, second, and third packets is not the same, that variation is your jitter.

Your IP phones have got a built-in jitter buffer. They don't immediately play the packets out to your ear because there's always going to be some jitter there. It would sound jittery if they did that, so the IP phones will smooth out the rate of the packets being received to make it sound natural.

If the jitter goes above 30 milliseconds, it's going to overrun that built-in jitter buffer, and it's going to make it a bad quality call.
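To make "variation in delay" concrete, here is a simplified sketch that compares the one-way delay of each packet with the packet before it. The delay values are made up, and the function name is hypothetical. Real RTP endpoints use a smoothed jitter estimate (defined in RFC 3550) rather than this raw difference, so treat this only as an illustration of the idea.

```python
JITTER_LIMIT_MS = 30  # the jitter target quoted earlier


def delay_variation_ms(delays_ms):
    """Absolute change in one-way delay between each consecutive pair of packets."""
    return [abs(b - a) for a, b in zip(delays_ms, delays_ms[1:])]


# Made-up one-way delays (ms) for five voice packets from HQ to branch.
# The fourth packet was stuck in a queue for longer than the others.
delays = [40, 42, 41, 80, 43]

variation = delay_variation_ms(delays)
print(variation)                         # [2, 1, 39, 37]
print(max(variation) > JITTER_LIMIT_MS)  # True
```

Every packet here arrives well within 150 milliseconds, yet the call can still sound bad because the delay changes too much from one packet to the next.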

You've all seen bad quality calls. If you watch a news report coming from a war zone or somewhere like that, they'll usually be using a satellite phone. Satellite is famously a high-latency connection, which is why news reports often start with an apology about the quality of the call.

If you don't meet those requirements for your voice and your video, it will be:

- Choppy

- Bad Quality

So, that was for standard IP telephony voice and standard definition video. If you're using high-definition video, it has stricter requirements and can tolerate less loss. High-definition video uses very high compression, so if you lose any packets at all, it will be noticeable in the video.

FIFO First In First Out

The default queuing mechanism on routers and switches is first-in, first-out (FIFO). Whenever congestion is experienced on a router or a switch, packets are by default sent out in the same order they arrived.

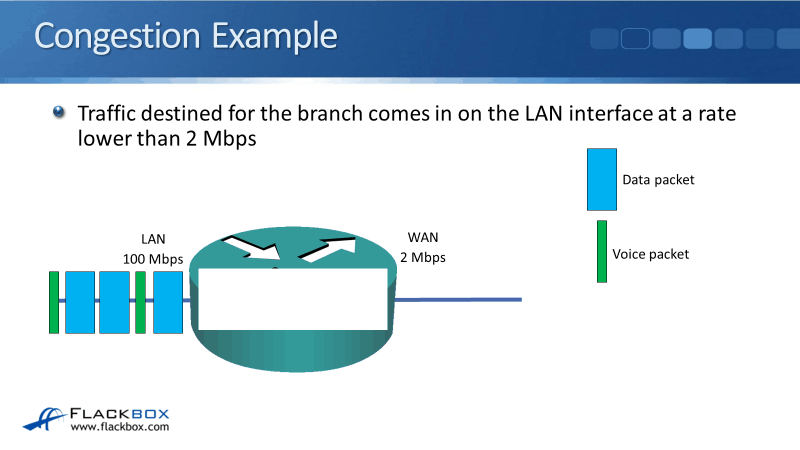

Congestion Example

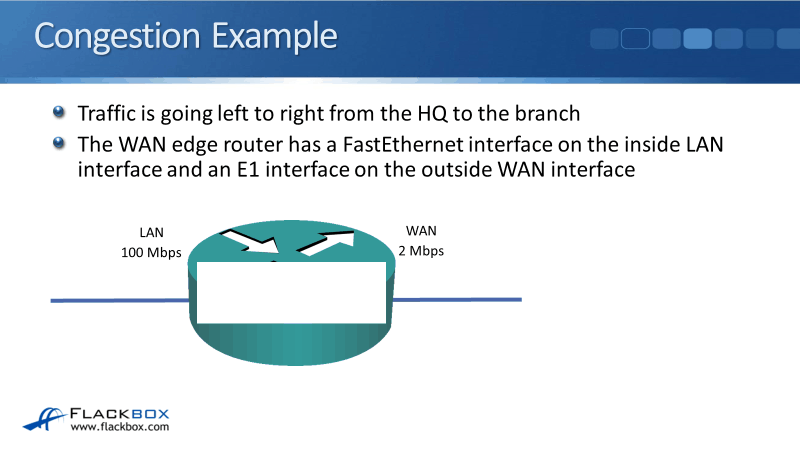

Congestion can be experienced whenever packets come in quicker than they can be sent out. An example would be your WAN edge router, where you've got a fast interface on the inside and a slower interface on the outside.

In our example above, the router has a FastEthernet interface on the inside running at 100 megabits per second, and the outside interface is an E1 with a speed of 2 megabits per second.

Traffic can be coming in at a rate of up to 100 Mbps, but the router can only physically send traffic out at a rate of up to 2 Mbps. So if traffic is coming in at a rate higher than 2 Mbps, then the router can't send it out as quickly as it comes in. Therefore, it's going to queue those packets up.
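The arithmetic behind this is straightforward. As a rough sketch, assuming a steady arrival rate (real traffic is bursty) and a hypothetical helper named `backlog_bytes`, the queue grows by the difference between the incoming and outgoing rates:

```python
def backlog_bytes(arrival_mbps, egress_mbps, seconds):
    """Bytes that pile up in the queue when traffic arrives faster than it can leave."""
    excess_bits_per_second = max(0.0, arrival_mbps - egress_mbps) * 1_000_000
    return excess_bits_per_second * seconds / 8


# Traffic arrives at 3 Mbps for half a second on the 2 Mbps E1 link.
print(backlog_bytes(arrival_mbps=3, egress_mbps=2, seconds=0.5))    # 62500.0

# Below 2 Mbps nothing builds up.
print(backlog_bytes(arrival_mbps=1.5, egress_mbps=2, seconds=0.5))  # 0.0
```

The 3 Mbps and 1.5 Mbps figures are illustrative; only the 2 Mbps link speed comes from the example.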

You would typically see congestion on:

- WAN Edge Routers: They have faster speed interfaces on the inside than on the outside

- Campus Switches: There are more workstations connected in the access layer than there are uplinks going up to the higher layers. The campus LAN will usually have less congestion due to the availability of high-speed interfaces.

We’re going to use a WAN edge router as an example because that's where we're going to see the main effect of congestion and where QoS can help the most as well.

We have the HQ on the left and the branch office on the right. The HQ router will be used to send traffic from left to right, to the branch.

Let's look at what happens when we don't have congestion. We've got traffic coming in on the inside interface on that router, which is going over to our external offices. Because we're running a converged network, voice, video, and data packets are all going to be coming in.

- Data Packets - Blue and Big

- Voice Packets - Green and Small

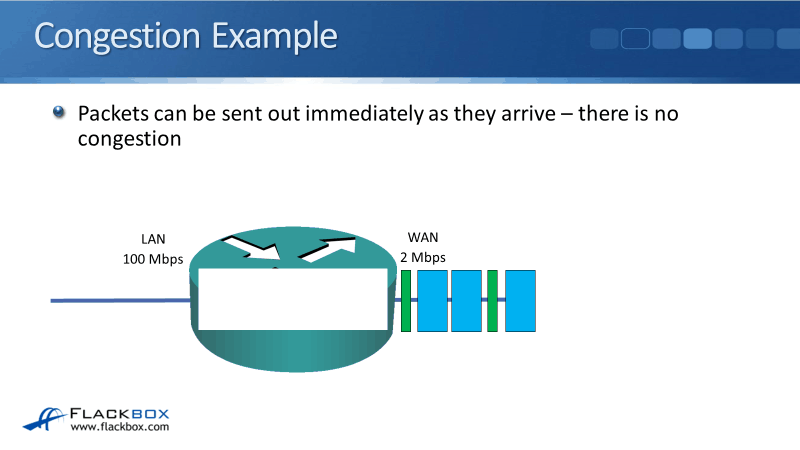

In our example, the traffic is coming in at a rate of less than 2 Mbps. The office isn't very busy, so the router can send traffic out as soon as it is received. In that case, there is no congestion at all.

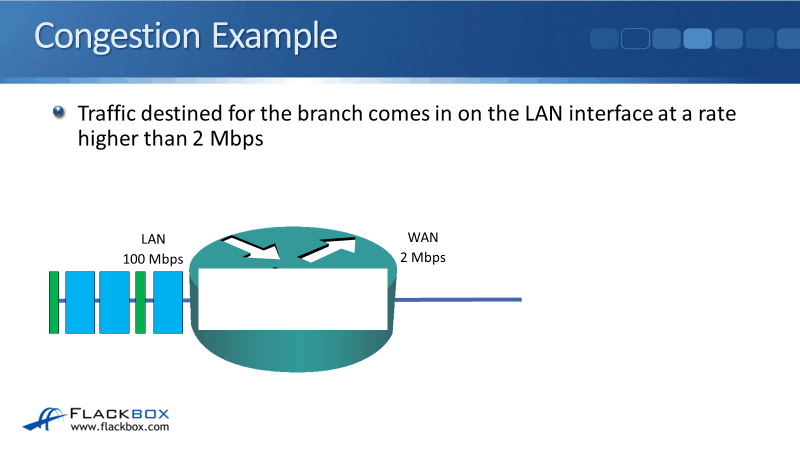

Traffic is passing very quickly through the router, so we're not going to have any problems there. We get a problem when traffic comes in at a rate higher than 2 Mbps.

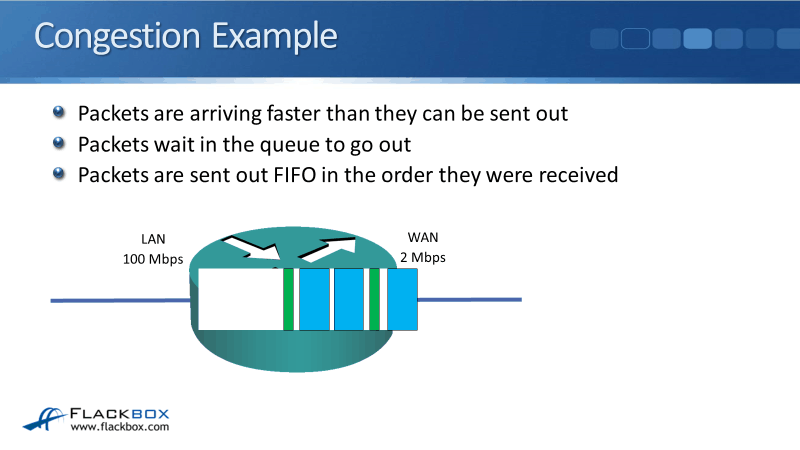

In the example below, we've got that larger data packet at the front, the blue one. There's a little green voice packet behind it, then a couple of data packets, and then another green voice packet coming in.

What order are our packets going to come in? It depends on what people are doing.

- If somebody's making a phone call - voice packets are going to come in

- If somebody’s sending data - data packets are going to come in

Packets are going to come in whatever order the users are taking those actions. Since the traffic is coming in at a rate faster than 2 Mbps, the router can't keep up and can’t send packets out quickly enough.

When that happens, the router will buffer traffic, and the packets will wait in the queue to go out. The default queuing mechanism is that traffic gets sent out in the same order that it comes in. It's first-in, first-out.
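Here is a minimal sketch of first-in, first-out behavior for the five packets described above. The packet sizes (1500 bytes for data, 200 bytes for voice) are illustrative assumptions, and the sketch assumes all five packets are already waiting in the queue at time zero. It is a toy model, not how a router is actually implemented.

```python
from collections import deque

LINK_BITS_PER_US = 2  # the 2 Mbps E1 from the example = 2 bits per microsecond

# Arrival order from the example: data, voice, data, data, voice.
# Sizes in bytes are assumptions for illustration.
arrivals = [("data", 1500), ("voice", 200), ("data", 1500), ("data", 1500), ("voice", 200)]


def fifo_departures(packets):
    """Return (label, departure time in microseconds) in transmit order."""
    queue = deque(packets)
    clock_us = 0
    sent = []
    while queue:
        label, size_bytes = queue.popleft()  # the oldest packet always leaves first
        clock_us += size_bytes * 8 // LINK_BITS_PER_US  # time to put it on the wire
        sent.append((label, clock_us))
    return sent


for label, departed_us in fifo_departures(arrivals):
    print(label, departed_us)
# data 6000
# voice 6800
# data 12800
# data 18800
# voice 19600
```

Notice that the second voice packet does not leave until 19.6 milliseconds have passed, because it has to wait behind three large data packets.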

Effects of Congestion

Whenever you've got packets being queued up, that is congestion. It causes:

- Delay to the packets that are waiting in the queue.

- Jitter due to the variation in queue size:

  - Large queue: longer wait

  - Short queue: shorter wait

- Loss: when the queue is full, the router is going to drop the packets that try to get in at the back.
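All three effects can be seen in a small sketch of a fixed-size queue. The `TailDropQueue` class, the 20-packet limit, and the 1500-byte packet size are hypothetical values chosen for illustration; real queue limits depend on the platform and configuration.

```python
from collections import deque

LINK_BITS_PER_US = 2  # the 2 Mbps E1 from the example = 2 bits per microsecond


class TailDropQueue:
    """Hypothetical fixed-size FIFO queue that drops arrivals when it is full."""

    def __init__(self, max_packets):
        self.max_packets = max_packets
        self.sizes_bytes = deque()
        self.dropped = 0

    def enqueue(self, size_bytes):
        if len(self.sizes_bytes) >= self.max_packets:
            self.dropped += 1  # loss: no room at the back of the queue
            return False
        self.sizes_bytes.append(size_bytes)
        return True

    def wait_us(self):
        """Time a new arrival would wait behind everything already queued."""
        return sum(self.sizes_bytes) * 8 // LINK_BITS_PER_US


short_queue = TailDropQueue(max_packets=20)
for _ in range(2):
    short_queue.enqueue(1500)

long_queue = TailDropQueue(max_packets=20)
for _ in range(25):
    long_queue.enqueue(1500)

print(short_queue.wait_us())  # 12000 microseconds (12 ms)
print(long_queue.wait_us())   # 120000 microseconds (120 ms)
print(long_queue.dropped)     # 5
```

The difference between the 12 ms and 120 ms waits is the jitter a voice stream would see as the queue grows and shrinks, and the 5 dropped packets are the loss.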

Our applications and voice and video calls will be of unacceptable quality if they do not meet their delay, jitter, and loss requirements.

Having queues in the router can stop our voice and video packets from meeting those requirements, which causes bad quality calls.

When you're working in IT, this is going to give you a big issue. Back in the day when voice and video ran on their own dedicated networks, users got used to picking up the phone and making calls that were always good quality. They expect the same quality on modern converged networks, so you have to provide it.

How to Mitigate Congestion

So how can we mitigate congestion? The easiest way is we can add more bandwidth. If we had a 100 Mbps interface on the outside as well as on the inside, then whenever traffic comes in, we can send it out immediately.

The problem is that it costs money. The outside interface is connected through your service provider, and the more bandwidth you want, the more money they're going to charge you for it.

Another way that we can help mitigate congestion is by using quality of service techniques. Quality of service gives better service to the traffic that needs it.

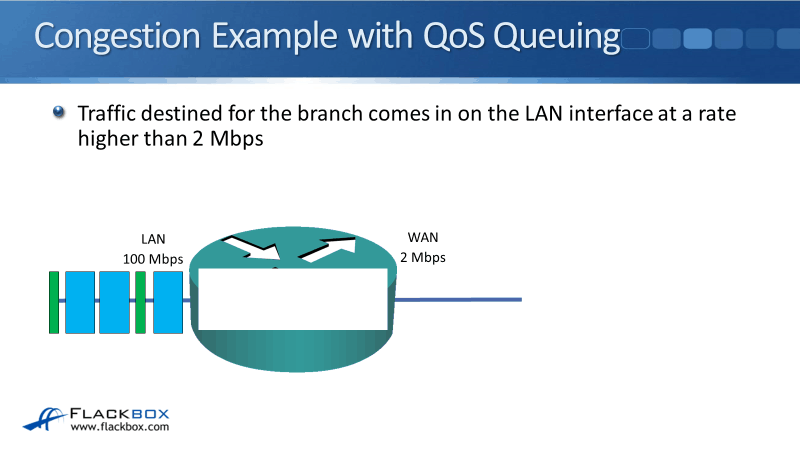

Congestion Example with QoS Queueing

So what we're going to do now is we're going to configure queuing on our router, and we're going to give better service to our voice packets.

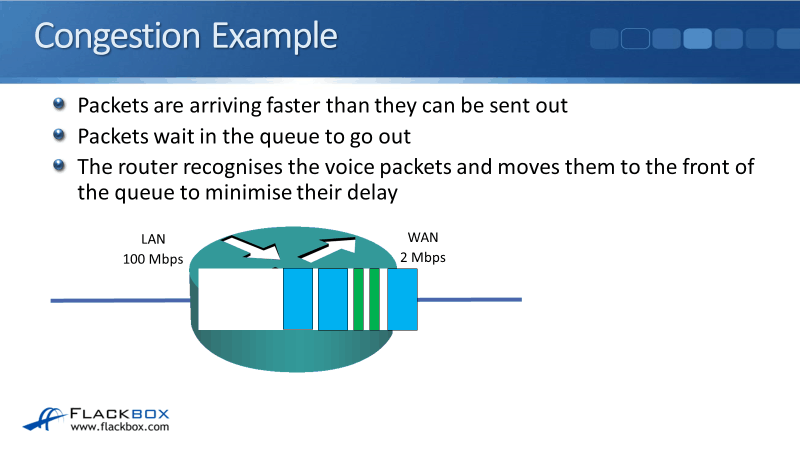

It's the same scenario where we've got traffic coming in at a rate higher than 2 Mbps. The data packet comes in first, then a little voice packet, then two data packets, and then a voice packet.

The traffic goes in the queue, but the difference is we’re going to put our voice packets straight to the front of the queue whenever there is a queue in the router.

The voice packets jump in front of the data packets. The router recognizes voice packets and moves them to the front of the queue, which minimizes their delay.

The voice packets are now at the front, so they're going to be in the queue for less time and they're going to get out of the router quicker.
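The sketch below compares plain FIFO with a simple "voice first" ordering for the same five packets. The packet sizes are illustrative assumptions, all five packets are assumed to be in the queue already, and the `voice_first` function is a hypothetical stand-in for the idea of prioritization. It is not a model of any specific Cisco queuing feature.

```python
LINK_BITS_PER_US = 2  # the 2 Mbps E1 from the example = 2 bits per microsecond

# Arrival order from the example; sizes in bytes are assumptions.
arrivals = [("data", 1500), ("voice", 200), ("data", 1500), ("data", 1500), ("voice", 200)]


def departures(packets):
    """Return (label, departure time in microseconds) for packets sent in the given order."""
    clock_us = 0
    sent = []
    for label, size_bytes in packets:
        clock_us += size_bytes * 8 // LINK_BITS_PER_US
        sent.append((label, clock_us))
    return sent


def voice_first(packets):
    """Move voice packets ahead of everything else."""
    # sorted() is stable, so packets keep their arrival order within each class.
    return sorted(packets, key=lambda packet: packet[0] != "voice")


fifo = departures(arrivals)
prioritized = departures(voice_first(arrivals))

print([t for label, t in fifo if label == "voice"])         # [6800, 19600]
print([t for label, t in prioritized if label == "voice"])  # [800, 1600]
print(prioritized[-1])                                      # ('data', 19600)
```

The voice packets now leave within 1.6 milliseconds instead of waiting up to 19.6 milliseconds. The link is no faster, though: the last data packet leaves at 19.6 milliseconds rather than 18.8, so the data traffic waits a little longer.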

Effects of QoS Queueing

So what are the effects of doing this? For particular traffic, it reduces the following:

- Latency

- Jitter

- Loss

You're also going to give better service to your voice and video traffic and maybe some mission-critical applications.

The original driver for QoS was VoIP, but it can be used to give better service to your important data applications as well. The thing is, if you're giving one type of traffic better service on the same link, with the same bandwidth you had before, then the other traffic types must get worse service.

Our voice packets will be moved to the front of the queue, but our data packets will be moved further back. The voice is going to get better service, but data gets worse. The point is to give each type of traffic the service it requires.

If a user is trying to open up a webpage on the internet and it takes one second rather than half a second, the user's not going to notice the difference.

But if you're on a phone call, and somebody's voice starts getting jerky, there are gaps in it, and you can't understand it, then that's a big deal. It means that the phone call doesn't work.

It’s important to give voice and video really good service so it gets the quality it requires. It doesn't matter if data gets a little less quality service because users won't notice it anyway.

QoS is not a magic bullet. It's designed to mitigate temporary periods of congestion. If a link is permanently congested, then you're going to have bad quality voice, video, and applications on that link, and what you need to do is upgrade the link.

Companies will often have a target utilization. They'll aim for an average utilization of, for example, 80% on the link, knowing that the network gets busier at some times of the day.

Monday at 9:00 AM is probably busier than it is at 3:00 PM on a Friday. You could put in enough bandwidth that the link never gets congested, but that would be expensive. So what you can do is balance the cost by having the link running at, for example, 80% utilization on average.

It will sometimes burst up to 100% and the link will be congested. For those temporary periods of congestion, you can enable QoS so your voice, your video, and data applications get the service that they require.

Additional Resources

Introduction to QoS (Quality of Service): https://networklessons.com/cisco/ccna-routing-switching-icnd2-200-105/introduction-qos-quality-service

QoS Design Principles and Best Practices: https://www.ciscopress.com/articles/article.asp?p=2756478

Text by Libby Teofilo, Technical Writer at www.flackbox.com
